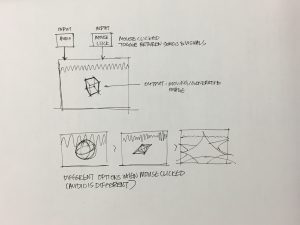

Sophia Kim and I plan to collaborate on this final project. We want to further explore interactive sound and WebGL. We began by surveying existing work and found code that integrates sound and visuals: it used the frequency and amplitude of an imported song to alter an imported image. We first looked at how static imported images can be altered by sound with this video, and then branched out from static images to more dynamic, generative shapes in WebGL. We found this interactive particle equalizer really interesting, and we definitely want to experiment with geometries. Since our current skill sets are not developed enough to create something as complicated and fleshed out as this particle equalizer, we want to stay confined to shapes like ellipses, boxes, and custom shapes generated by basic math functions such as sin, cos, and tan.

From this, we want to play with the idea of engaging multiple human senses to create a single experience. Mixing visuals and audio can give the audience a more heightened experience, and the sound can make the visuals easier to comprehend. In a way, the visuals will act almost as a data visualization of the song's structure.
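As a rough illustration of the sound-to-shape mapping described above, here is a minimal sketch in TypeScript. It assumes the song's audio arrives as buffers of samples in the range [-1, 1]. The helper names `rmsLevel` and `flowerVertices` and the parameters `baseRadius`, `petals`, and `steps` are hypothetical and are not taken from any existing project. The sketch measures loudness as a root-mean-square (RMS) level, then uses that level to stretch a custom outline built from sin and cos, so louder passages produce more pronounced petals.

```typescript
// Hypothetical helper (assumption): loudness of one buffer of audio samples,
// measured as the root-mean-square (RMS) of samples in [-1, 1].
function rmsLevel(samples: number[]): number {
  if (samples.length === 0) return 0;
  let sumSquares = 0;
  for (const s of samples) sumSquares += s * s;
  return Math.sqrt(sumSquares / samples.length);
}

// Hypothetical shape generator (assumption): a closed "flower" outline whose
// radius follows r(theta) = baseRadius * (1 + level * sin(petals * theta)).
// A level of 0 gives a plain circle; higher levels give deeper petals.
function flowerVertices(
  level: number,
  baseRadius = 100,
  petals = 6,
  steps = 60
): Array<[number, number]> {
  const points: Array<[number, number]> = [];
  for (let i = 0; i < steps; i++) {
    const theta = (2 * Math.PI * i) / steps;
    const r = baseRadius * (1 + level * Math.sin(petals * theta));
    // Convert the polar point (r, theta) to x/y coordinates.
    points.push([r * Math.cos(theta), r * Math.sin(theta)]);
  }
  return points;
}
```

In a p5.js sketch, the returned points could be passed to `vertex()` between `beginShape()` and `endShape(CLOSE)` on every frame, so the outline pulses with the music.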

![[OLD FALL 2018] 15-104 • Introduction to Computing for Creative Practice](https://courses.ideate.cmu.edu/15-104/f2018/wp-content/uploads/2020/08/stop-banner.png)