For this analysis on data visualization artists, I wanted to look into the work of American scientist Martin Wattenberg. Having worked at IBM and co-led Google PAIR, Wattenberg is interested in the connections technology can make and in the narrative and beauty that emerge from those connections. One project of his that I find particularly interesting, because it takes computation and captures the storytelling in it, is the Shape of Song exhibition.

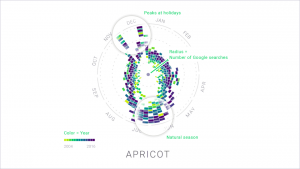

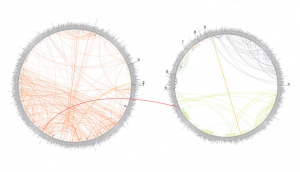

(The above image shows a matrix of Wattenberg’s entire Shape of Song collection. The program that creates these intricate forms was written in Java.)

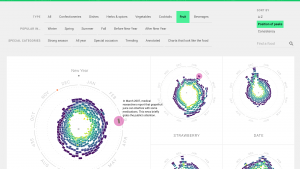

The purpose of the Shape of Song exhibition was to create works by connecting the different parts of a musical piece. As the images show, these connections take the form of arches, which vary in size and span according to the distance between related parts of the composition. Wattenberg produced these forms by taking the music as a MIDI file, which was then separated into subsets called “tracks” and analyzed.

Once this process has been repeated over the course of the entire piece, the result is a large set of connected arches with different dynamic qualities.
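To make the idea more concrete, here is a minimal Python sketch of how repeated passages could be turned into arches. It is not Wattenberg’s Java program, and it is only my assumption of one simple way to do it: a track is represented as a plain list of MIDI pitch numbers, a “passage” is a fixed-length run of `min_len` notes, and each occurrence of a passage is linked to its next occurrence. The names `find_arcs`, `arc_geometry`, and `min_len` are hypothetical and chosen just for this illustration.

```python
from collections import defaultdict


def find_arcs(notes, min_len=3):
    """Link each run of `min_len` notes to its next identical occurrence.

    `notes` is assumed to be a list of MIDI pitch numbers for one track.
    Returns a sorted list of (start_a, start_b, length) tuples.
    """
    # Record every position at which each fixed-length run of notes begins.
    positions = defaultdict(list)
    for i in range(len(notes) - min_len + 1):
        window = tuple(notes[i:i + min_len])
        positions[window].append(i)

    arcs = []
    for starts in positions.values():
        # Connect consecutive occurrences only, skipping overlapping ones.
        for a, b in zip(starts, starts[1:]):
            if b - a >= min_len:
                arcs.append((a, b, min_len))
    return sorted(arcs)


def arc_geometry(arc):
    """Return (center, outer_radius, inner_radius) of the arch band.

    The band spans from the start of the first passage to the end of the
    second, so arches grow with the distance between related parts.
    """
    a, b, length = arc
    center = (a + b + length) / 2
    outer_radius = (b + length - a) / 2
    inner_radius = (b - a - length) / 2
    return center, outer_radius, inner_radius


# A short motif (C, D, E) that returns four notes later.
melody = [60, 62, 64, 65, 60, 62, 64, 67]
for arc in find_arcs(melody):
    print(arc, arc_geometry(arc))
```

Because the window length is fixed, a longer repeated passage shows up in this sketch as several overlapping arches rather than one wide band; merging those into a single arch would be a natural next step.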

(The image above shows a diagram of the Goldberg Variations. The AABB structure of the composition can be seen in the way the diagram breaks into two separate parts.)

(The image above shows one completed work from the Shape of Song exhibition, capturing the different connections and repetitions of musical components in Vivaldi’s “Autumn” from The Four Seasons.)

What I find most interesting about this form of data visualization is the way it looks for connections within something that seemingly has a set beginning and end. Visualizing the data this way offers another way of interpreting music and how it is structured, by taking something that we hear and mapping it into something that we can see.

Read More:

http://turbulence.org/Works/song/method/method.html

http://www.bewitched.com/song.html
