I went to a coffee shop downtown the other day and was in a corner by myself.

After reading a few pages of a book I noticed a boy come in alone and sit down in a chair at the table next to me.

He opened a pad of paper and started writing.

Every so often he glanced over his shoulder to make sure nobody was coming.

He was in deep thought.

Then he put his pen down and a moment later got up and left.

A short time later a woman entered the coffee shop alone.

She sat down at a table across from me.

She put her coat down on a nearby chair and leaned back in the chair.

I thought, “Aw, that’s nice,” and continued reading my book.

When it seemed like she’d waited a long time for her drink, she left the shop again and started walking down the street.

I thought, “Hmm … interesting.”

Then a man sat down at my table and started reading.

After a few minutes of quiet conversation I thought, “That is a lot of coffee.”

He leaned back in the chair and closed his eyes.

I thought, “He’s napping,

A Duck

Wall to wall carpeting

The duckling was sitting on the roof of the duck house, watching the birds fly by. As the sun started to set, the ducklings could see the carpet in the distance, stretching all the way to the horizon. They knew that they would have to find a way to get over the wall to get to the carpet, and they were excited to do so.

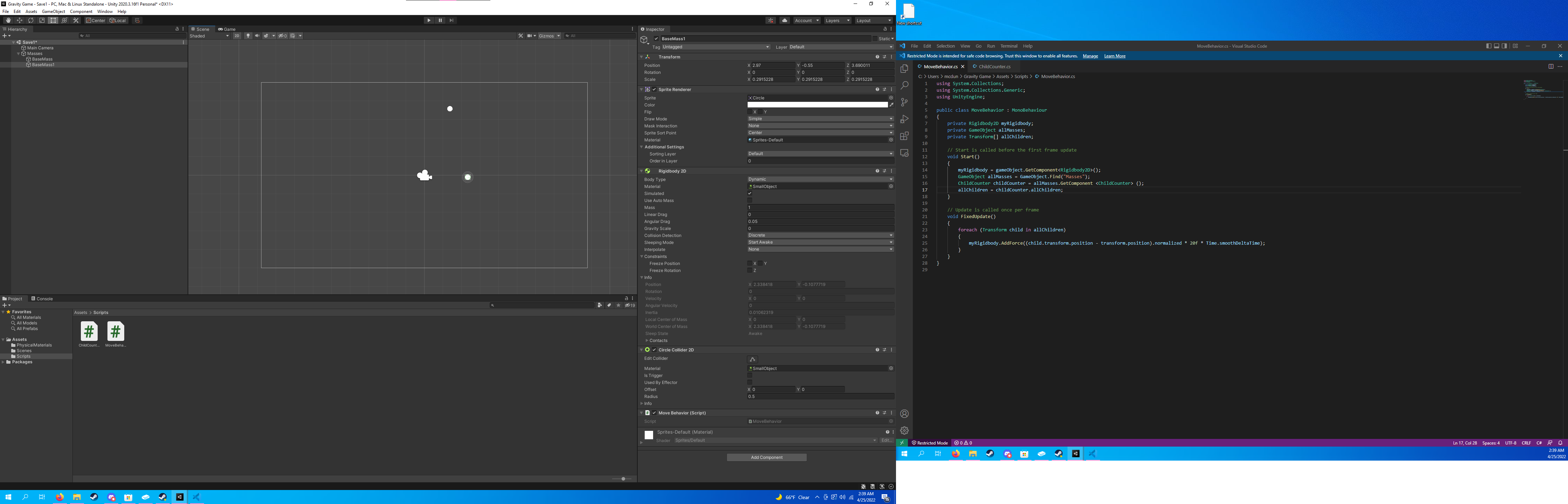

I find it interesting how these two tools approach the same idea in different ways. One asks only for themes, while the other asks for the beginning of a story. I wonder whether both algorithms use the information they are given in the same way.