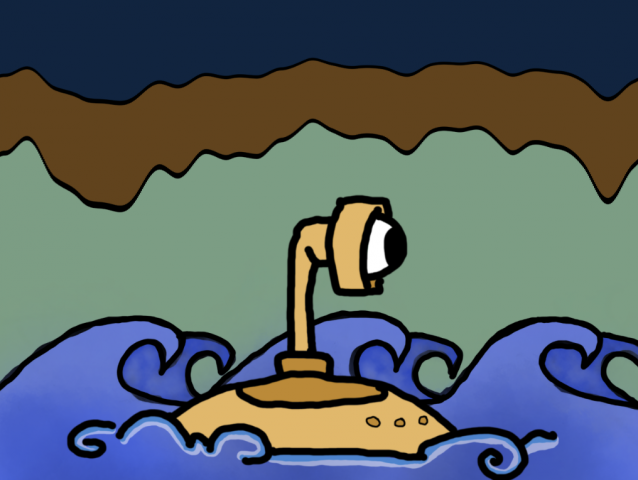

A couple of things to note at the start. First, I totally bombed the easiest part of this project: making the canvas a 2:1 ratio. I drew the water and submarine in a square format, then remembered it was supposed to be 2:1. The thought of redrawing all of it made me want to die, so I cropped it to 4:3, which is random but the best I could manage. Sorry 🙁 Second, I did this in about one afternoon, which I’m sure is pretty evident. But I’m trying to do my best with the time that I’ve got, and I do want to come out of this class knowing I at least gave every project a go.

The original inspiration for this project came from the atmospheric train ride by a past student that we looked at in class. A refresher:

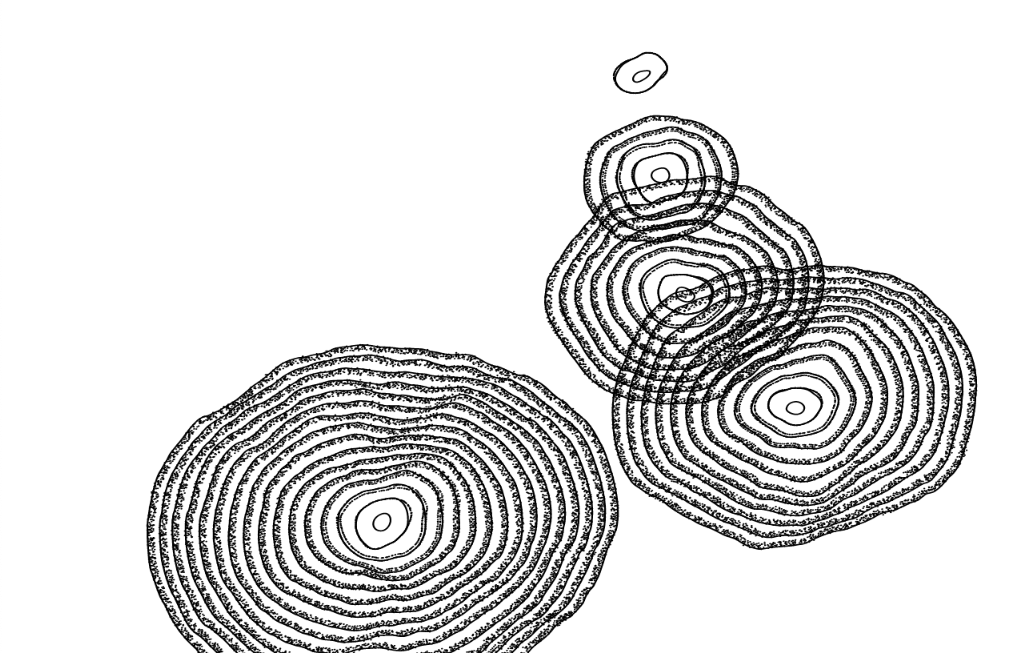

I really liked this idea of using a .png image and looking through a hole of some kind into the outside world. My immediate thought was to make the inside of a submarine, but I wasn’t really interested in making underwater creatures. It eventually morphed into a submarine expedition around the world, based on my boyfriend’s father, who did just that during his time in the military. Looking at the final product, I’m dissatisfied because I think I accidentally left behind the one idea I found charming to begin with: a hole, a window, something surrounded.

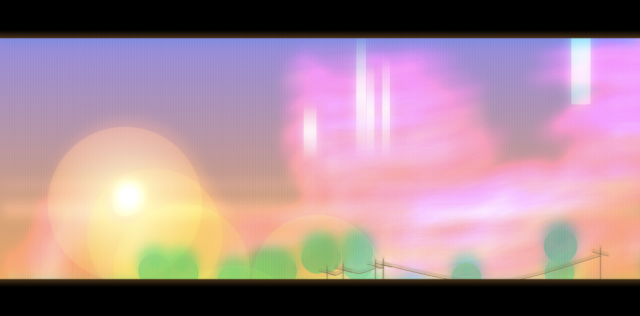

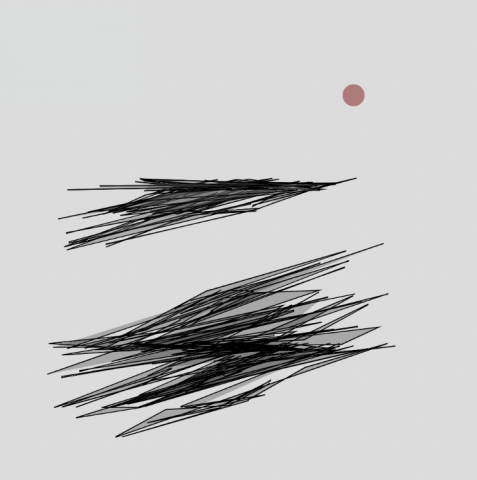

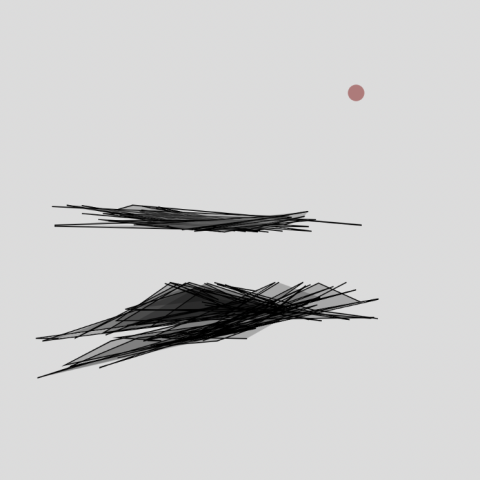

In this sped-up GIF, you can see that the submarine and waves kind of bob up and down. I originally had them static and felt like something was super off and weird. Giving them a little life (independent of each other, too) definitely helped. You can also see that the sky cycles through day and night. I really like that I did this… it sort of gives this idea of the passage of time, of how long this submarine expedition and adventure is really taking.
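For anyone curious how this kind of motion can work, here is a rough sketch in Python. It is not my actual code, just one way to get the same effect. It assumes each element bobs on its own sine wave driven by the frame number, and that the sky blends between two colors on a slow cycle. The function names, colors, amplitudes, and periods are all made up for illustration.

```python
import math

def bob_offset(frame, amplitude, period, phase=0.0):
    """Vertical offset in pixels at a given frame, following a sine wave."""
    return amplitude * math.sin(2 * math.pi * frame / period + phase)

def sky_color(frame, cycle_frames, day=(135, 206, 235), night=(10, 10, 40)):
    """Blend between a day color and a night color over one full cycle."""
    # t swings smoothly from 0 (day) to 1 (night) and back to 0
    t = (1 - math.cos(2 * math.pi * frame / cycle_frames)) / 2
    return tuple(round(d + (n - d) * t) for d, n in zip(day, night))

# Hypothetical settings: different periods and phases keep the submarine
# and the waves from moving in lockstep.
def submarine_y(frame, base_y=200):
    return base_y + bob_offset(frame, amplitude=6, period=90)

def wave_y(frame, base_y=260):
    return base_y + bob_offset(frame, amplitude=3, period=50, phase=1.0)
```

Calling `submarine_y(frame)`, `wave_y(frame)`, and `sky_color(frame, 600)` once per frame gives the positions and background color to draw with. Because the two periods differ, the submarine and the waves drift in and out of sync instead of rising and falling together.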

I can only really draw in a cartoon-ish way, so I also added faux black outlines to the mountains to match my drawing, which definitely helped make everything feel more unified. But after talking with Golan about trying to broaden my horizons and make less “cutesy” stuff, I wish I had given myself more time to try that. Hopefully I can do that with the creature project!