I wanted to go with Golan’s idea of making a one-button game. I wanted to maximise the fun from this minimal input, and my initial reaction was to do something rhythmic or reaction-based like Geometry Dash. However, the mechanics there are simple enough that I’d mostly have to focus on the looks of the piece instead, which isn’t what I want to do.

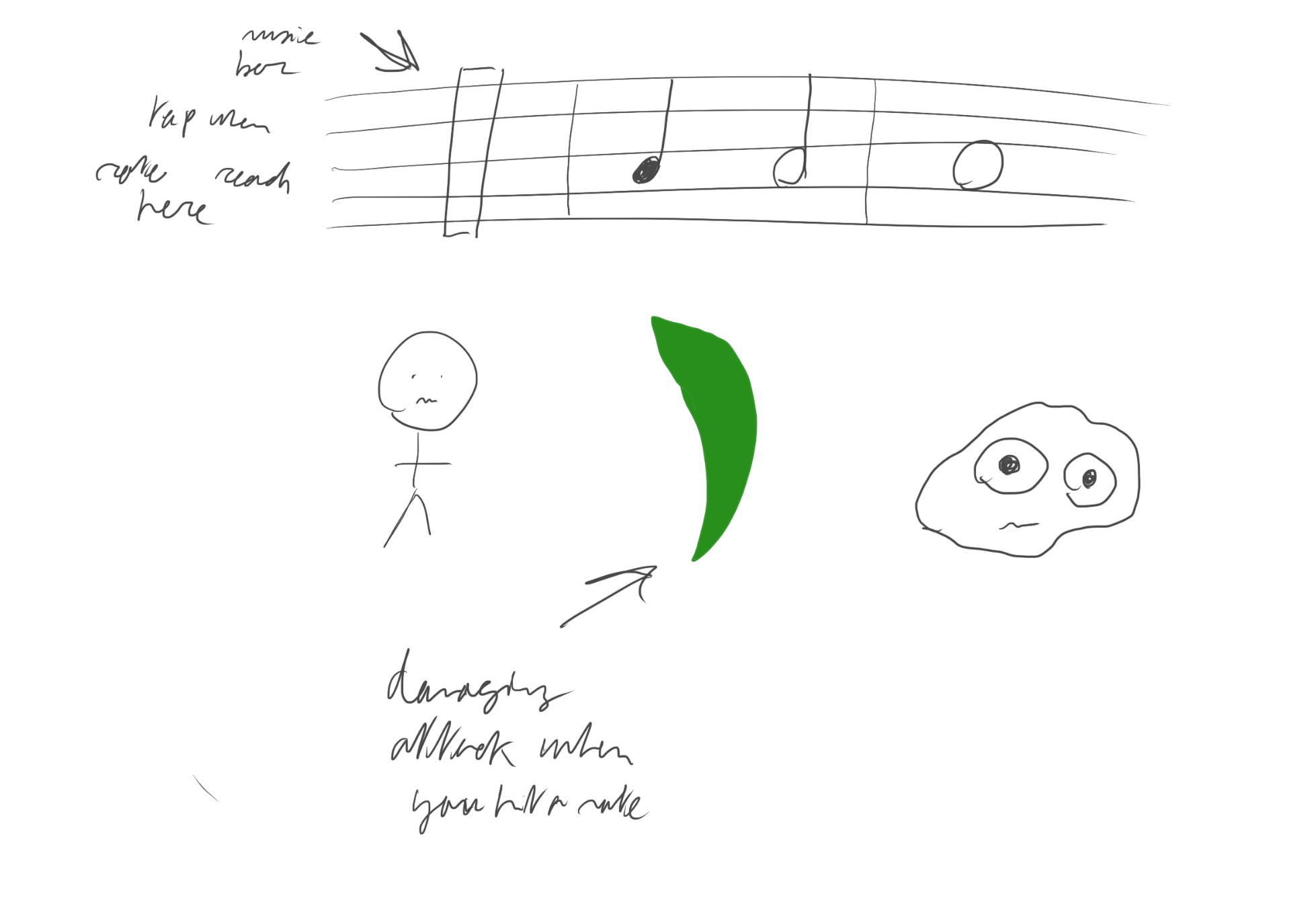

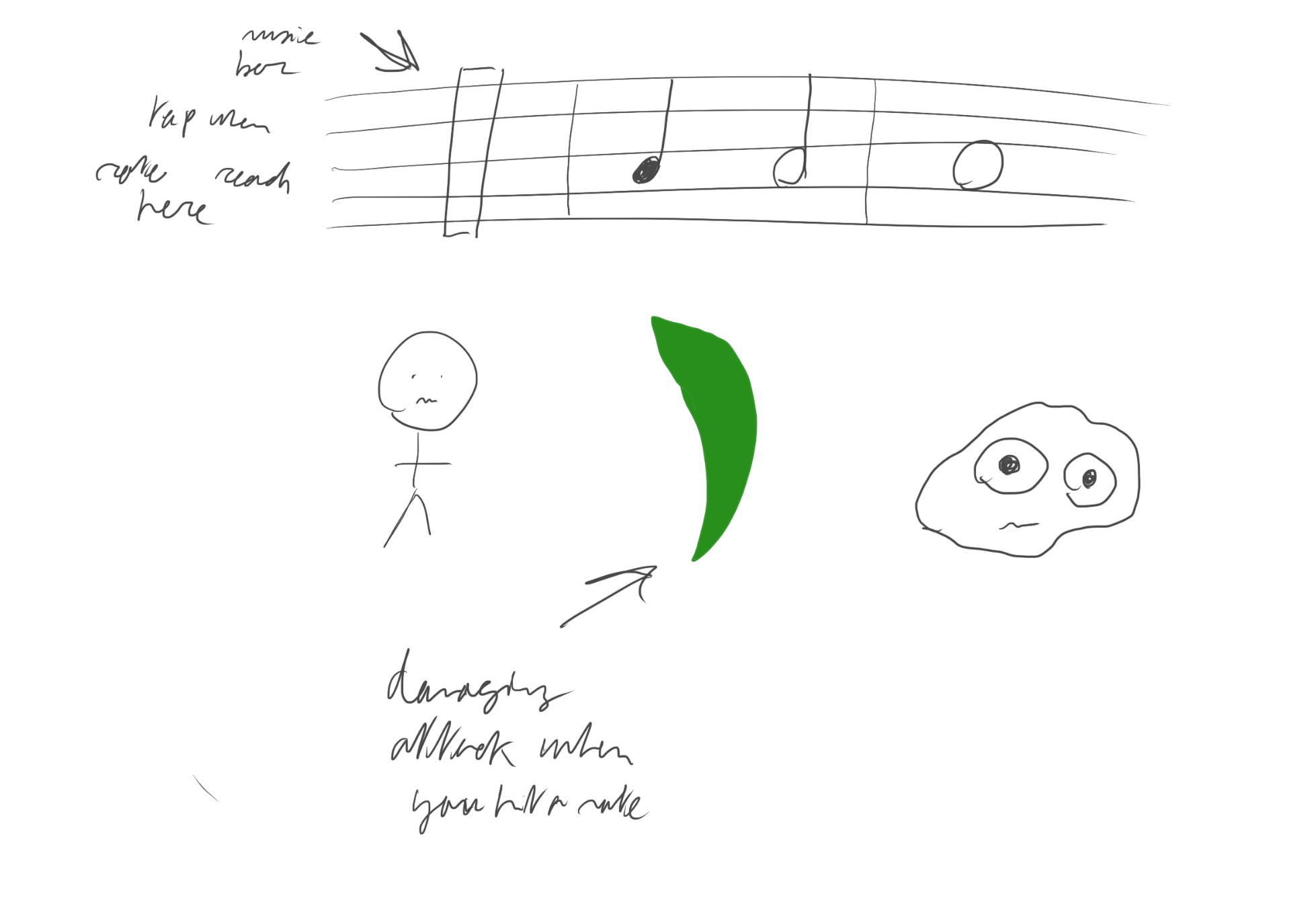

The conclusion was to mix this rhythmic aspect with a combo game. Inputs could be based on timing against a moving bar or sheet music, where holding or tapping a note could make the difference between a full-damage attack and a weakened one. I think the hardest part would be getting all the sound effects for the notes to play when you tap; I’d have to figure out whether there is an open database of note sounds I could use. I think timing wouldn’t be too difficult with timers and maybe an overarching metronome that controls all the time in the game.
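As a rough sketch of how the metronome and the tap/hold idea might fit together, here is a small Python example. It is only an illustration under assumptions: the `Metronome` class, the `damage` function, the 0.25 s hold threshold, the timing windows, and the damage values are all hypothetical placeholders, not decisions about the actual game.

```python
from dataclasses import dataclass

# All thresholds below are assumed values for illustration only.
HOLD_THRESHOLD = 0.25   # seconds the button must stay down to count as a hold
PERFECT_WINDOW = 0.05   # max timing error (seconds) for a full-damage attack
GOOD_WINDOW = 0.12      # max timing error (seconds) for a weakened attack


@dataclass
class Metronome:
    """Hypothetical global clock: beats fall at multiples of beat_length."""
    bpm: float

    @property
    def beat_length(self) -> float:
        return 60.0 / self.bpm

    def offset(self, t: float) -> float:
        """Signed distance in seconds from time t to the nearest beat."""
        nearest_beat = round(t / self.beat_length)
        return t - nearest_beat * self.beat_length


def classify_press(pressed_at: float, released_at: float) -> str:
    """Label a press as 'tap' or 'hold' based on how long it lasted."""
    return "hold" if released_at - pressed_at >= HOLD_THRESHOLD else "tap"


def damage(metronome: Metronome, pressed_at: float, released_at: float,
           expected: str, base: int = 10) -> int:
    """Full damage when on the beat, half when close, zero otherwise."""
    kind = classify_press(pressed_at, released_at)
    error = abs(metronome.offset(pressed_at))
    if kind != expected or error > GOOD_WINDOW:
        return 0            # wrong kind of press, or too far off the beat
    if error <= PERFECT_WINDOW:
        return base         # full-damage attack
    return base // 2        # weakened attack


if __name__ == "__main__":
    m = Metronome(bpm=120)  # one beat every 0.5 s
    print(damage(m, 1.02, 1.10, expected="tap"))   # quick tap near a beat
    print(damage(m, 2.00, 2.40, expected="hold"))  # long press on a beat
```

Keeping every judgement relative to one metronome means the moving bar, the sounds, and the damage checks would all read from the same clock, which is the main reason to have an overarching metronome rather than separate timers.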

Some reach goals could be: adding a defending part where you match the notes of the enemy; a fury attack, which could be similar to a solo (but with no way to control the pitch of the sound, it would just be a one-button mash); and making it look nice (proper effects on hit, a pulse metronome in the corner, etc.).