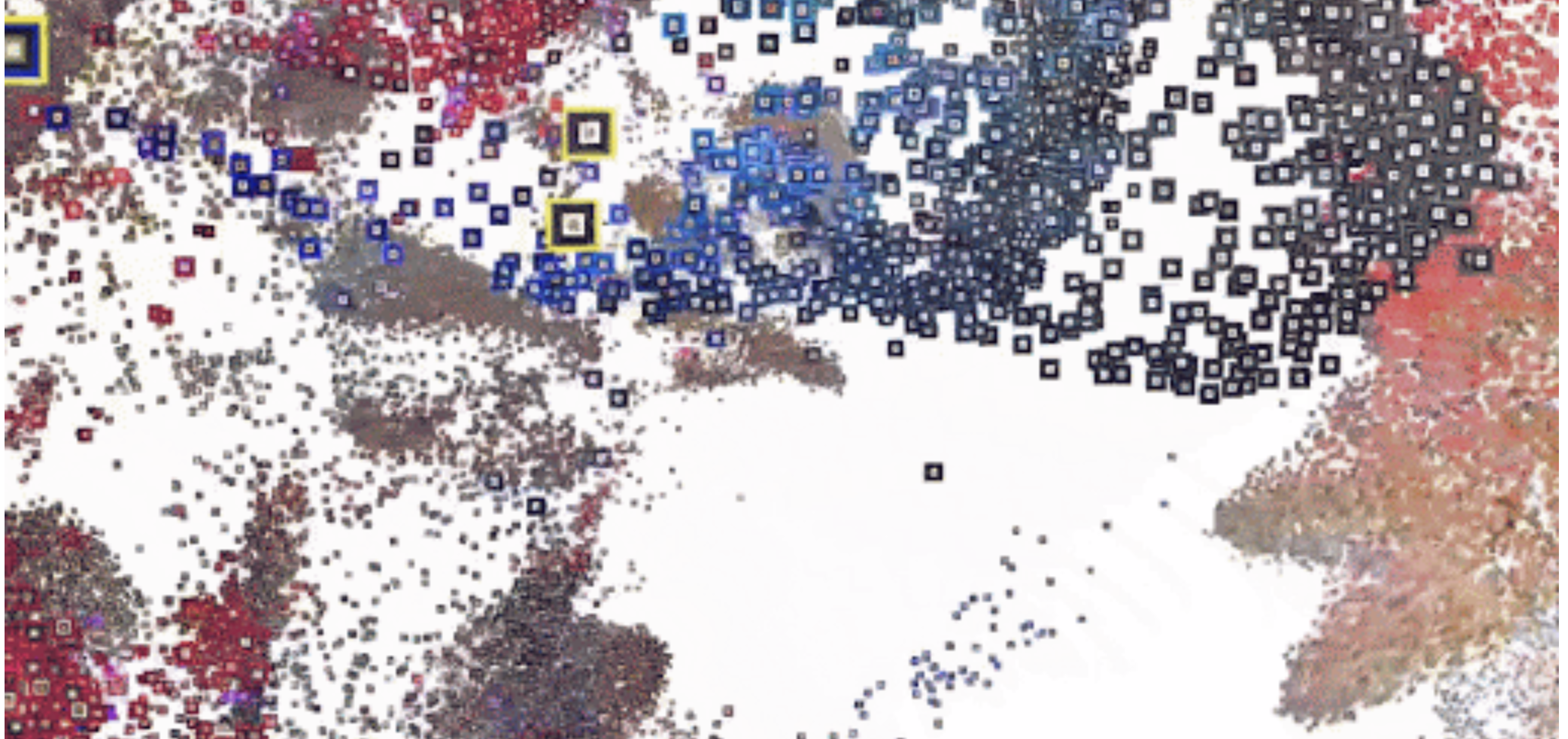

Emoji Scavenger Hunt

This project was commissioned by Google's Brand Studio in 2018. It uses neural networks to identify real-life versions of emojis captured by a phone camera.

This project truly baffled me because of its implications. People who were already adults when emojis appeared, or who spent their later developmental years growing up alongside them, take for granted that emojis are cartoonish representations of real-life objects. The usual thought process is that a dog emoji represents a real-life thing: a dog. This project inverts that logic: the dog becomes the real-life version of the dog emoji. What is considered first is flipped. This implicitly gives more weight to the emoji than to the object it represents. The “real” thing is no longer the dog, but the emoji.

This writing is an extremely deep dive into an objectively shallow thing, but there is still more to say. As mentioned in the paragraph above, current adults and older adolescents accept that emojis are representations of real-life things. This is not the case, however, for younger children who have seen or experienced more through a phone than in real life, a gap the pandemic has only widened. Children who are around 0 to 7 years old right now have likely grown up more online than in person, and have therefore seen more emojis than the “real” versions of emojis. It is therefore likely that they consider emojis more “real” than their “real” counterparts: they think first of the emoji and then of the object, so their logic runs backwards with respect to ours (that of adults and older adolescents).

This project, the Emoji Scavenger Hunt, forces this backwards perspective onto its users. While it may initially seem like a harmless, fun game of I Spy, it is also, on some level, a commentary on how the older and newer generations differ in their perspective on, and logical path through, technology.

I like the organic, illustrative style of this generative work. It is also very speculative: it makes viewers wonder what images are being fed to the algorithm to generate it.