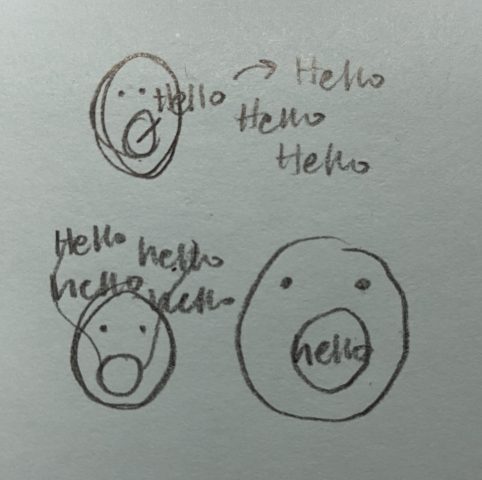

My concept for the Augmented body project was an altered way of speaking. I wanted people to type their words and have those words come out of their mouth without having to say them aloud. I wanted to make this because sometimes writing or typing words is easier than saying them. When you open your mouth and press a letter on the keyboard, several copies of that letter float out of your mouth.

I wanted the things floating out of your mouth to be words, but I ended up using single letters instead. When I started coding for words, I ran into a lot of problems (which I could probably have fixed with more time). I was not able to take full words as input because I had a hard time combining an input text box with the webcam display: every time the text box was on screen it covered the webcam, and vice versa. I settled for detecting single key presses and outputting those letters instead of full words. Now, if the user wants to type a word, they have to read it one letter at a time from the floating letters, starting with the letter farthest from the mouth and ending with the closest.
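To make the single-letter behavior concrete, here is a minimal sketch of how it could be modeled. This is not my original project code; it leaves out the webcam and all drawing, and the names (`LetterStream`, `COPIES_PER_KEY`, `DRIFT_PER_FRAME`, the `mouthOpen` flag) and the numbers are assumptions made up for illustration.

```typescript
// Hypothetical model of the floating letters; every name and value here is illustrative.
interface LetterBurst {
  char: string;     // the letter that was pressed
  copies: number;   // how many copies of the letter float out together
  distance: number; // how far the burst has drifted from the mouth
}

const COPIES_PER_KEY = 3;  // assumed value for "several copies"
const DRIFT_PER_FRAME = 2; // assumed drift speed per frame

class LetterStream {
  private bursts: LetterBurst[] = [];

  // Call on each key press. Nothing happens unless the mouth is open
  // and the key is a single letter.
  press(key: string, mouthOpen: boolean): void {
    if (!mouthOpen || !/^[a-zA-Z]$/.test(key)) return;
    this.bursts.push({ char: key, copies: COPIES_PER_KEY, distance: 0 });
  }

  // Call once per frame: every burst drifts farther from the mouth.
  step(): void {
    for (const burst of this.bursts) {
      burst.distance += DRIFT_PER_FRAME;
    }
  }

  // Read the letters from farthest to closest, which recovers typing order.
  readOrder(): string {
    return [...this.bursts]
      .sort((a, b) => b.distance - a.distance)
      .map((burst) => burst.char)
      .join("");
  }
}

// Usage: type "h", let a frame pass, then type "i".
const stream = new LetterStream();
stream.press("h", true);
stream.step();
stream.press("i", true);
stream.step();
console.log(stream.readOrder()); // "hi"
```

Because older letters have had more frames to drift, sorting by distance is enough to recover the order they were typed in, which is why the word has to be read from the outside in.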