created by Muhammed Azhar

Short description

- Creator’s Vision:

- WHAT? Development of an affordable and more efficient method to help visually impaired people navigate in space with greater speed and confidence.

- HOW? By wearing a technological artefact that detects nearby visual inputs (obstacles) and translates them into haptic and auditory outputs (vibrations & sound).

- WHY? Existing methods for visually impaired people (white cane, guide dog, smart devices) are either expensive and difficult to carry, or difficult to learn and practice.

- Innovation:

- ultrasonic waves for the detection of spatial obstacles

- visual information is encoded and processed through other senses (touch, proprioception and audition)

- wearable, easy to carry
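
Ultrasonic sensors usually measure distance by time of flight: the sensor emits a short pulse and times how long the echo takes to return. The post does not name the sensor model, so the sketch below assumes an HC-SR04-style module that reports the round-trip echo time in microseconds and a speed of sound of about 343 m/s (dry air at roughly 20 °C). The function name and the 30 000 µs "no echo" cutoff are hypothetical.

```python
from typing import Optional

# Assumed speed of sound in dry air at about 20 °C, in centimetres per microsecond.
SPEED_OF_SOUND_CM_PER_US = 0.0343

# Hypothetical cutoff: a zero reading or an echo this long is treated as "nothing in range".
MAX_ECHO_US = 30_000


def echo_to_distance_cm(echo_us: float) -> Optional[float]:
    """Convert a round-trip echo time (microseconds) into a one-way distance in cm.

    The pulse travels to the obstacle and back, so the path length is halved.
    Returns None when no usable echo was received.
    """
    if echo_us <= 0 or echo_us >= MAX_ECHO_US:
        return None
    return echo_us * SPEED_OF_SOUND_CM_PER_US / 2


if __name__ == "__main__":
    # A 1000 µs round trip corresponds to an obstacle about 17 cm away.
    print(round(echo_to_distance_cm(1000), 2))  # prints 17.15
```

On the actual Arduino Pro Mini the echo time would come from the sensor hardware; only the arithmetic is modelled here.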

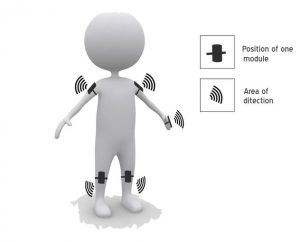

- Details: There are five modules across the body, each equipped with an ultrasonic sensor: two on the shoulders, two on the knees and one on the hand. Together they let the wearer detect physical objects in five directions at once. The distance between the user and an object determines the intensity of the vibration and the rate of the beeps: the smaller the distance, the more intense the outputs.
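
The distance-to-output rule can be illustrated as a simple linear mapping applied to each of the five modules. The post states only the qualitative rule (closer means stronger vibration and faster beeps), so the 5 to 200 cm working range, the 8-bit PWM duty cycle for the motor, the 100 to 1000 ms pause between beeps, and all names below are assumptions chosen for illustration, not values from the project.

```python
from dataclasses import dataclass
from typing import Dict, Optional

# Hypothetical working range: obstacles at or beyond MAX_CM produce no output.
MIN_CM = 5.0
MAX_CM = 200.0

# Assumed output ranges: 8-bit PWM duty for the vibration motor,
# and the pause between buzzer beeps in milliseconds.
PWM_MAX = 255
FASTEST_BEEP_MS = 100
SLOWEST_BEEP_MS = 1000

# The five module positions described in the post.
MODULES = ("left_shoulder", "right_shoulder", "left_knee", "right_knee", "hand")


@dataclass
class Output:
    pwm: int                # vibration strength, 0 (off) to PWM_MAX
    beep_ms: Optional[int]  # pause between beeps; None means silent


def closeness(distance_cm: Optional[float]) -> float:
    """Return 1.0 for very close obstacles and 0.0 for none or out of range."""
    if distance_cm is None or distance_cm >= MAX_CM:
        return 0.0
    clamped = max(distance_cm, MIN_CM)
    return (MAX_CM - clamped) / (MAX_CM - MIN_CM)


def map_distance(distance_cm: Optional[float]) -> Output:
    """Closer obstacles give a stronger vibration and a shorter pause between beeps."""
    c = closeness(distance_cm)
    if c == 0.0:
        return Output(pwm=0, beep_ms=None)
    pwm = round(c * PWM_MAX)
    beep_ms = round(SLOWEST_BEEP_MS - c * (SLOWEST_BEEP_MS - FASTEST_BEEP_MS))
    return Output(pwm=pwm, beep_ms=beep_ms)


def update_all(readings: Dict[str, Optional[float]]) -> Dict[str, Output]:
    """Map one distance reading per module to that module's outputs.

    Modules missing from `readings` are treated as having no obstacle in range.
    """
    return {name: map_distance(readings.get(name)) for name in MODULES}
```

For example, `update_all({"hand": 30.0})` drives the hand module with a strong vibration and short beep pauses while the other four modules stay silent. A linear ramp is only one possible choice; a real device might prefer a steeper curve near the body.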

- Components:

- Arduino Pro Mini

- ultrasonic sensors that send and receive waves

- vibrating motors

- buzzers

- Contributions:

- Wearable technology for visual impairment

- An experiential example of sensory substitution and intermodal transfer of information

- Low-cost/affordable

https://www.youtube.com/watch?v=t3j2ZW9T8CI#action=share

Sharing my thoughts:

- Good points:

- The multiple modules are an excellent idea, as the bones of our body operate like vectors and different body parts may point in different directions.

- Vision and touch are both spatial modalities of perception. That is why a visual input can be successfully conveyed through a haptic output.

- What I would do differently:

- Add an extra sensor module on the head or the neck

- Integrate a GPS module

- Add a sensor that ‘recognizes’ different materials from a distance and conveys them to the user through distinct sounds.