Manuel Rodriguez & Jett Vaultz

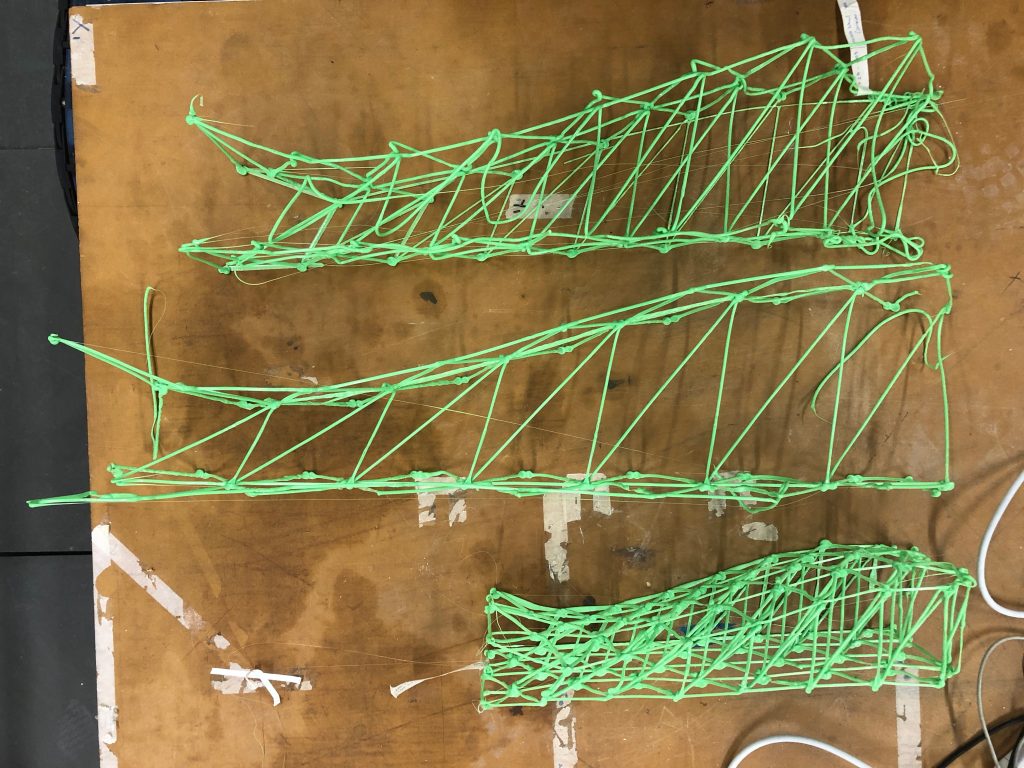

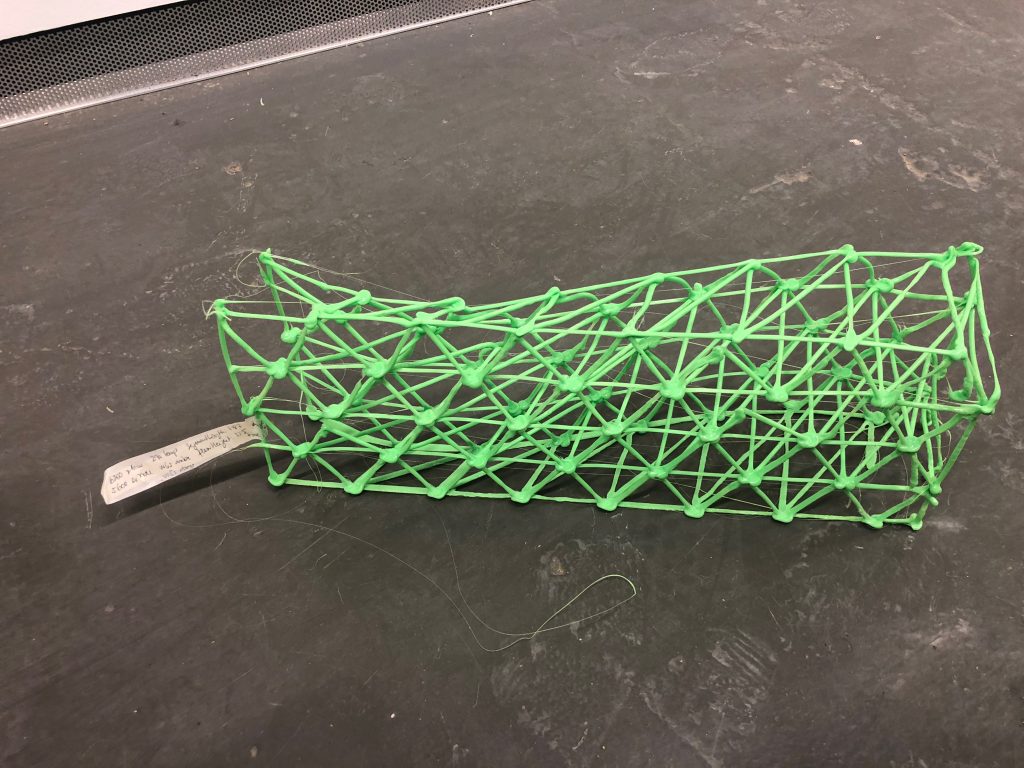

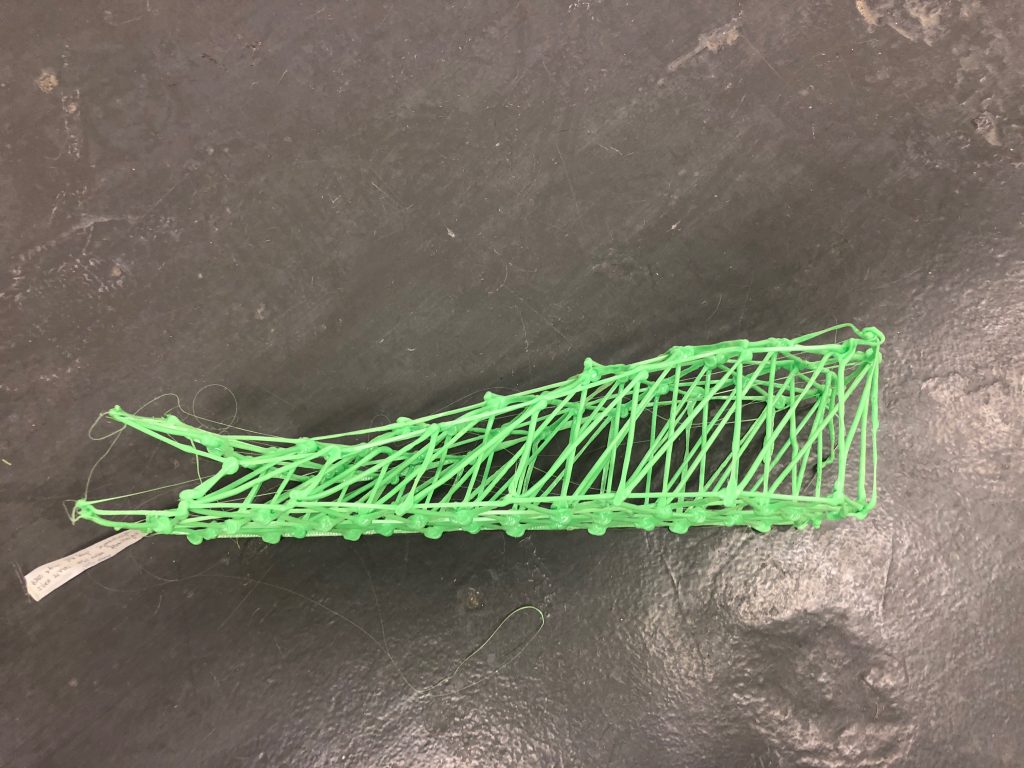

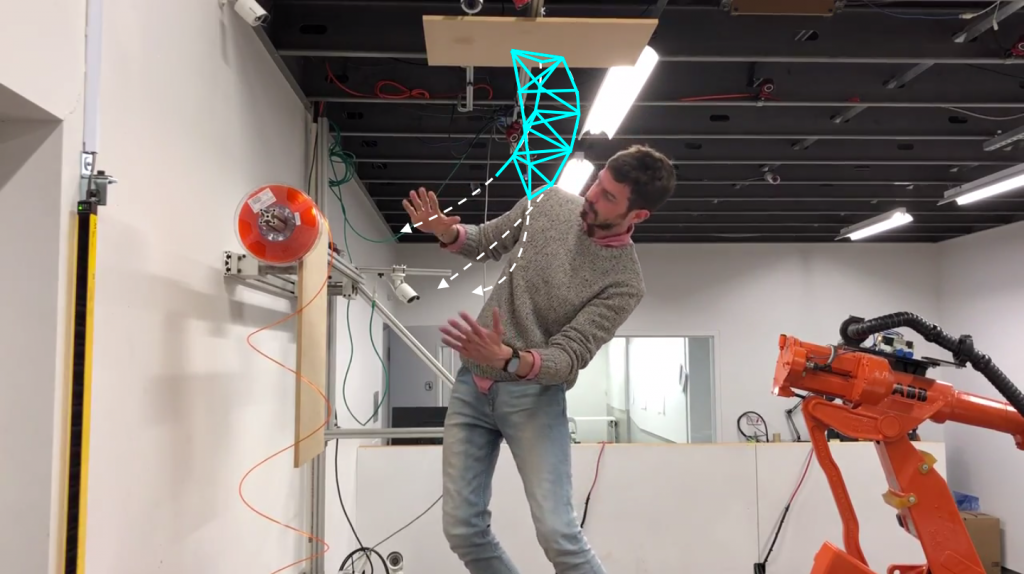

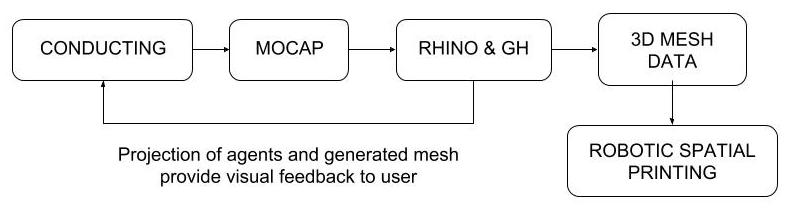

This project explores a hybrid fabrication method, shared between a human and virtual autonomous agents, for producing organic meshes using motion capture (MoCap) technology and 3D spatial printing techniques. The user makes conducting gestures to influence the agents as they travel from a starting point A to an end point B, guided by real-time visual feedback from a projection of the virtual workspace.
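One way to picture how a conducting gesture could "influence" an agent is to treat the tracked hand as a local attractor. The sketch below is purely illustrative and assumes a model that the project does not specify: the function name `apply_gesture`, the `strength` and `radius` parameters, and the linear falloff are all hypothetical. It is written in plain Python with `(x, y, z)` tuples rather than Rhino geometry, and it makes no assumptions about how the hand position arrives from the MoCap system.

```python
import math


def apply_gesture(position, hand, strength=1.0, radius=5.0):
    """Nudge one agent relative to a tracked hand position (hypothetical model).

    position, hand: (x, y, z) tuples.
    Agents at or beyond `radius` from the hand are unaffected. Closer agents
    move toward the hand by `strength * falloff`, where falloff drops
    linearly from 1 at the hand to 0 at the radius. A negative `strength`
    pushes agents away from the hand instead.
    """
    offset = tuple(h - p for h, p in zip(hand, position))
    dist = math.sqrt(sum(c * c for c in offset))
    if dist == 0.0 or dist >= radius:
        return position  # outside the gesture's reach (or exactly at the hand)
    falloff = 1.0 - dist / radius
    step = min(strength * falloff, dist)  # never overshoot the hand when attracting
    return tuple(p + c / dist * step for p, c in zip(position, offset))


# Usage: one agent near the hand, one outside its radius of influence.
agents = [(0.0, 0.0, 0.0), (0.0, 8.0, 0.0)]
hand = (0.0, 2.0, 0.0)
moved = [apply_gesture(a, hand, strength=1.0, radius=5.0) for a in agents]
```

Here the first agent is pulled partway toward the hand while the second stays where it is. A falloff radius keeps a gesture local, so the conductor can shape one region of the swarm without disturbing the rest.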

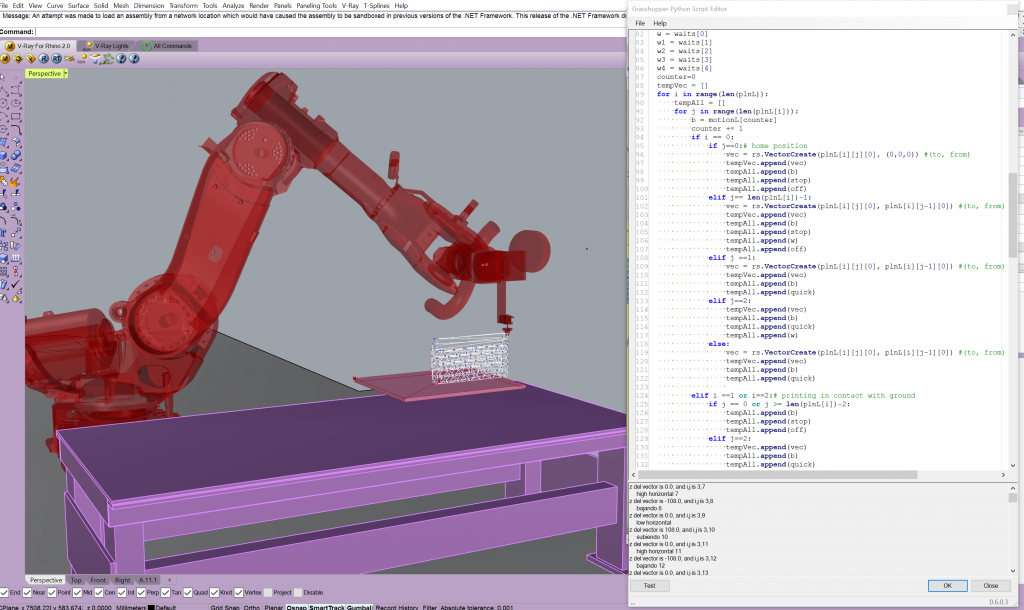

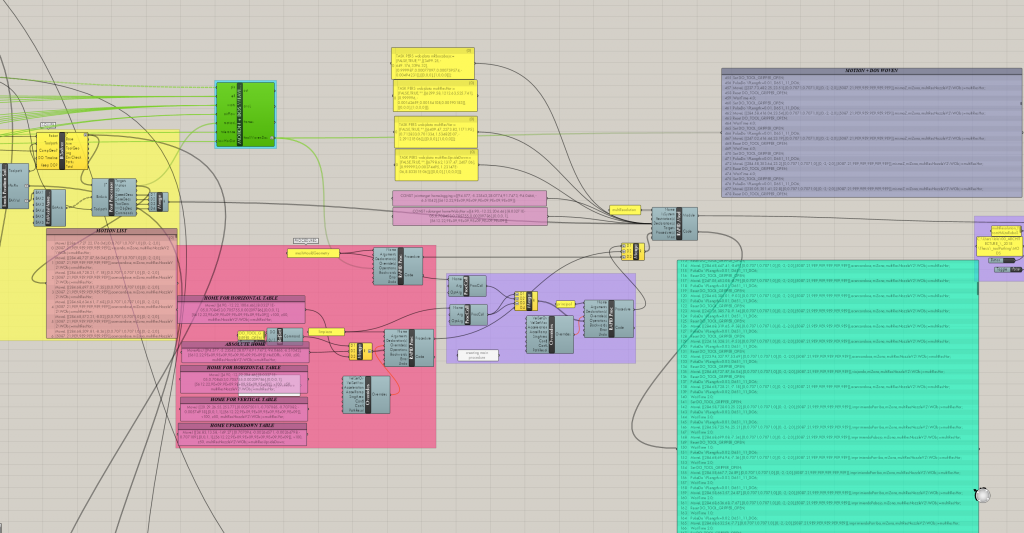

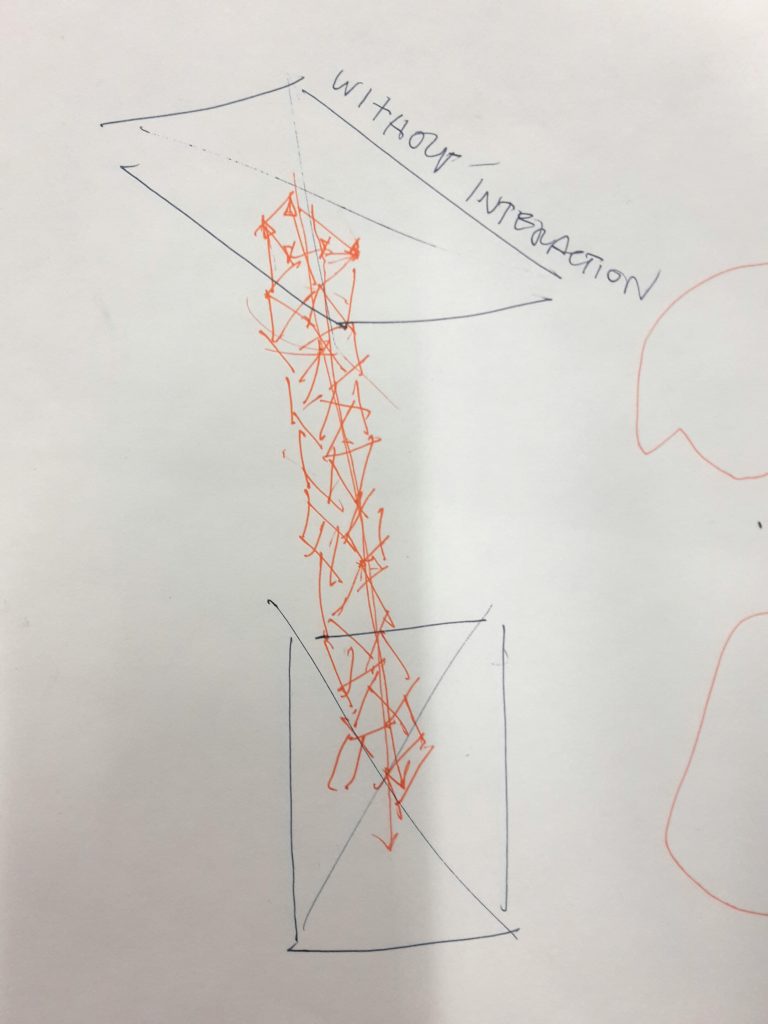

For our shortest-path prototype, we discussed what the agents' default behavior might look like, without any interaction or influence from the user, given a start point A and an end point B. We then filmed some sample conducting gestures, imagining a set of agents progressing from A to B in this default behavior, and sketched the effects those gestures might have on the agents while reviewing the videos. In Grasshopper, we began developing a Python script that dictates the movements of a set of agents progressing from one point to another according to the default behavior we had established.
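The project's actual default behavior is not spelled out here, so the following is only a minimal sketch of one plausible version: each agent heads straight for B at a fixed speed with a small random jitter, and its positions are recorded as a trail. The names `step_agent` and `simulate` and the `speed`, `wander`, `max_steps`, and `seed` parameters are hypothetical. The code is plain Python, so it could in principle run inside a GhPython component, but it does not use any Rhino or Grasshopper API.

```python
import math
import random


def _length(v):
    return math.sqrt(sum(c * c for c in v))


def step_agent(pos, target, speed, wander, rng):
    """Advance one agent a single step toward `target` (hypothetical default behavior)."""
    to_target = tuple(t - p for t, p in zip(target, pos))
    dist = _length(to_target)
    if dist <= speed:
        return target  # close enough: snap onto the end point
    heading = tuple(c / dist for c in to_target)
    # Small random jitter so paths are not perfectly straight. Keeping
    # wander * sqrt(3) < speed guarantees every step still ends closer to B.
    jitter = tuple(rng.uniform(-wander, wander) for _ in range(3))
    return tuple(p + h * speed + j for p, h, j in zip(pos, heading, jitter))


def simulate(starts, target, speed=0.5, wander=0.1, max_steps=200, seed=0):
    """Run every agent from its start point to `target`, recording its trail."""
    target = tuple(target)
    rng = random.Random(seed)  # seeded so runs are repeatable
    trails = [[tuple(s)] for s in starts]
    for _ in range(max_steps):
        done = True
        for trail in trails:
            if trail[-1] != target:
                trail.append(step_agent(trail[-1], target, speed, wander, rng))
                done = False
        if done:
            break
    return trails


# Usage: three agents spread around A = origin, all heading for B.
starts = [(0.0, -1.0, 0.0), (0.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
B = (10.0, 0.0, 0.0)
trails = simulate(starts, B)
```

Each trail is a list of points from its start to B, which is the raw material a later meshing step could work from. Seeding the random generator makes a given "default" run reproducible, which is useful when comparing it against a conducted run.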

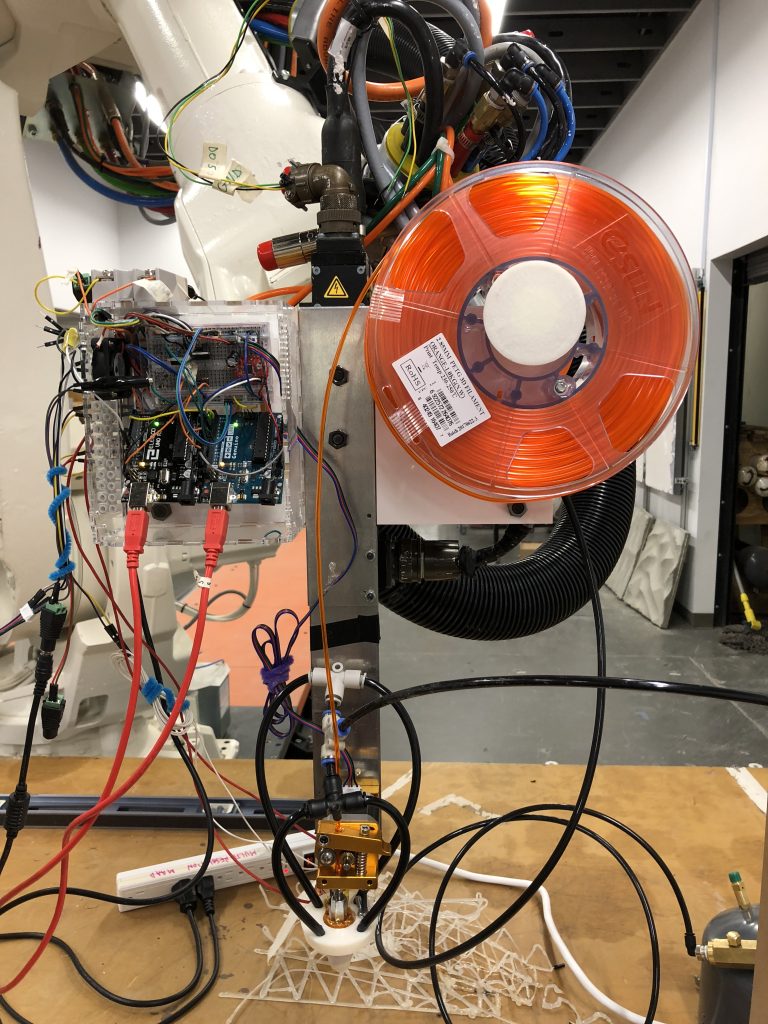

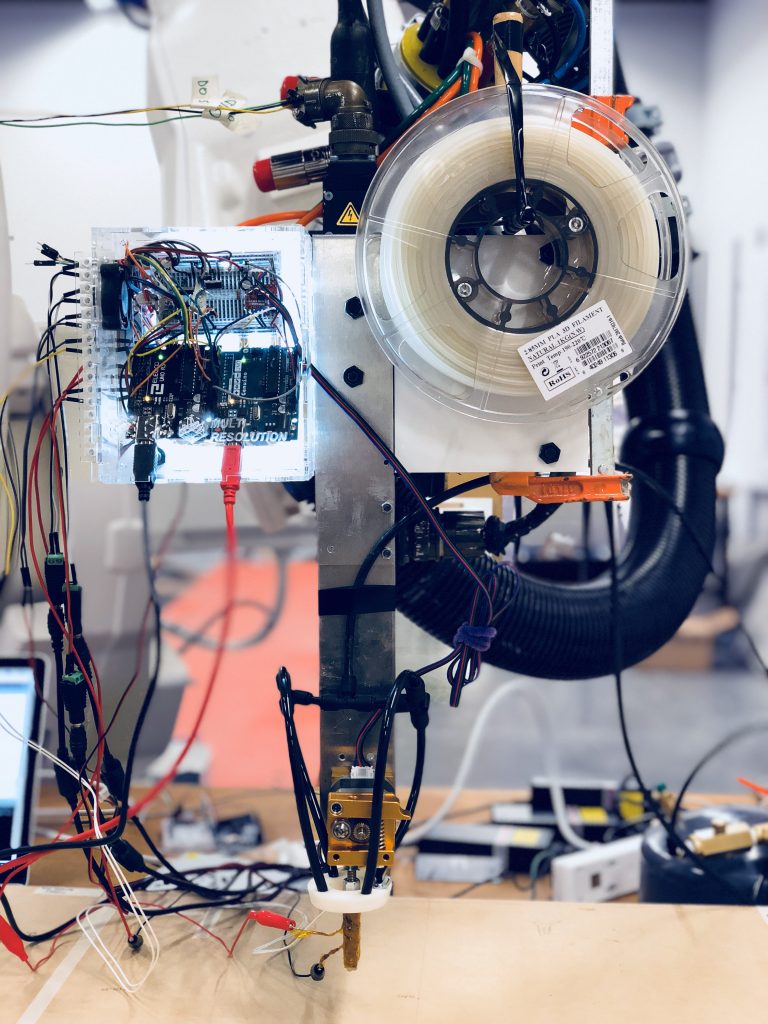

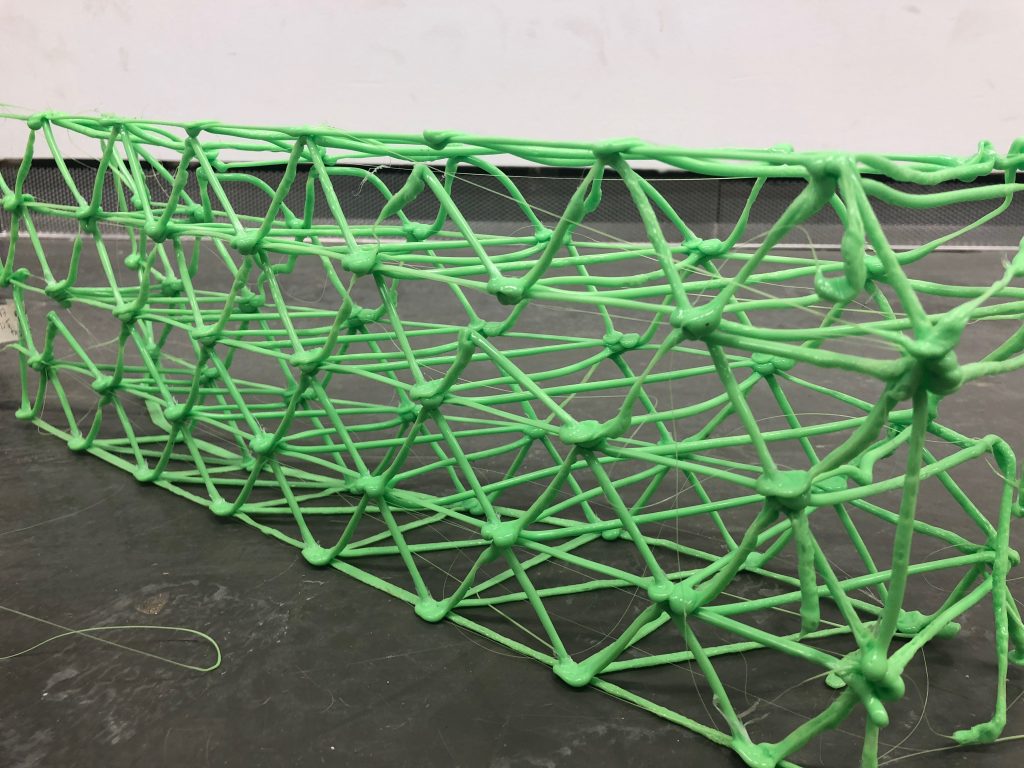

Our next step is to flesh out the Python script so that it generates a plausible 3D mesh as the agents progress from start to finish, initially without any user interaction. Once this works, we will build a more robust algorithm for incorporating user interaction, so that the system can produce more unique and complex structures. The resulting mesh would then be printed from one end to the other using the spatial printing tools Manuel is developing.
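As a rough illustration of turning agent paths into a mesh, the sketch below stitches neighboring trails into a strip of quads, similar in spirit to a loft. This is an assumed approach rather than the project's method: the function `trails_to_mesh`, the requirement that trails be ordered side by side, and the padding of shorter trails with their last point are all hypothetical choices. It returns plain vertex and face lists, which could then be converted into a Rhino mesh inside Grasshopper.

```python
def trails_to_mesh(trails):
    """Stitch neighboring agent trails into a quad mesh (hypothetical approach).

    trails: list of polylines, one per agent, each a list of (x, y, z) points,
    ordered so that trail i sits next to trail i + 1. Shorter trails are padded
    with their final point so every trail has the same number of samples.
    Returns (vertices, faces), where each face is a tuple of four vertex indices.
    """
    if len(trails) < 2:
        raise ValueError("need at least two trails to build a surface")
    n = max(len(t) for t in trails)
    padded = [list(t) + [t[-1]] * (n - len(t)) for t in trails]
    # Flatten row by row: the point at step k of trail i gets index i * n + k.
    vertices = [p for t in padded for p in t]
    faces = []
    for i in range(len(padded) - 1):
        for k in range(n - 1):
            a = i * n + k            # trail i, step k
            b = a + 1                # trail i, step k + 1
            c = (i + 1) * n + k + 1  # trail i + 1, step k + 1
            d = (i + 1) * n + k      # trail i + 1, step k
            faces.append((a, b, c, d))
    return vertices, faces


# Usage: three short trails, the last one ending a step early.
trails = [
    [(0, 0, 0), (1, 0, 0), (2, 0, 0)],
    [(0, 1, 0), (1, 1, 0), (2, 1, 0)],
    [(0, 2, 0), (1, 2, 0)],
]
vertices, faces = trails_to_mesh(trails)
```

Padding keeps the index arithmetic simple but produces degenerate quads where a trail ended early. A fuller version might resample every trail to a common length, or connect agents by proximity rather than by a fixed ordering once user interaction makes their paths cross.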