Throughout this semester, I’ve tried to tackle a bit more of the electronic / tech-augmented genre of music. I’m not sure that I even really understand it yet, but what I do understand is that there is so much to learn. I enjoy the idea of soundscape design, so I attempted something along the lines of a strange, insect-y kinda percussive little diddy.

Author: jdbrown@andrew.cmu.edu

Individual Project: Joshua Brown

For my individual project, I explored the art of delay.

https://soundcloud.com/oshuarown/caprice-of-meaningless-children

I wrote a piece for flute, viola, and double bass featuring a good amount of rhythmic pizzicato, exported it as a MIDI file, and then played around with Logic’s Delay Designer to create a rather synergetic piece that built out sections of the original while still keeping its flavor intact. In addition to that, I put together a small, cavernous backing track to give the piece a little more sonic dimension. To cap it all off, I included a synthesized voice repeating the phrase “let’s all have meaningless sex and meaningless children,” which had been stuck in my head for a while.

In performance, I brought the piece’s individual parts together in Max and created a fairly simple spatialization element to make the piece a little more dynamic.

Go w/ Chocolate!

For our Go sonification, our group (Kayla, Joey, Dan J., and I) chose to incorporate a tasty element into the game: we used Hershey’s chocolate and Oreo drops as the black and white Go pieces. We mounted two DPA microphones on the board to capture the sound of the game. Whenever a player captured a piece, we sent them over to a microphone to eat the chocolate sensually, and the signal from each microphone was processed through Ableton Live.

*Josh lost the video because he’s a dumpster fire, but suffice it to say that it was a good time.

Markov Chains in Cm

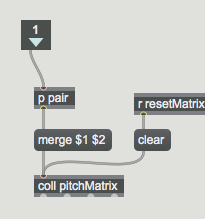

For our project (Joshua Brown, Garrett Osbourne, Stone Butler), we created a system of Markov chains that could learn from various inputs: MIDI information imported and analyzed through the following subpatch, as well as live performance on the Ableton pad.

Our overall concept was to create a patch that would take in a MIDI score, analyze it, and use the Markov system to learn from that score and output something similar with the sounds we had captured and edited.

Markov chains work in the following way:

Say there are three states: A, B, and C.

When you occupy state A, you have a 10% chance of remaining in A, a 30% chance of moving to state B, and a 60% chance of moving to state C. A Markov chain is the full set of these transition probabilities, one set for every state, where the next state depends only on the current one.
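To make the three-state example concrete, here is a minimal Python sketch of walking such a chain. Only state A’s row (10% stay, 30% to B, 60% to C) comes from the example; the rows for B and C are made-up placeholders so the walk can keep going, and the names `TRANSITIONS`, `next_state`, and `walk` are hypothetical.

```python
import random

# Transition table: TRANSITIONS[current][next] = probability.
# Row A matches the example in the text; rows B and C are
# hypothetical placeholders, not taken from the project.
TRANSITIONS = {
    "A": {"A": 0.1, "B": 0.3, "C": 0.6},
    "B": {"A": 0.5, "B": 0.0, "C": 0.5},  # hypothetical
    "C": {"A": 0.4, "B": 0.4, "C": 0.2},  # hypothetical
}


def next_state(current, rng=random):
    """Pick the next state using only the current state's row."""
    row = TRANSITIONS[current]
    return rng.choices(list(row), weights=list(row.values()), k=1)[0]


def walk(start, steps, rng=random):
    """Return the start state followed by `steps` sampled states."""
    path = [start]
    for _ in range(steps):
        path.append(next_state(path[-1], rng))
    return path


if __name__ == "__main__":
    print(walk("A", 8, random.Random(0)))
```

Because `next_state` only ever looks at the current state’s row, the walk has no memory of how it got there, which is the defining property of a (first-order) Markov chain.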

In a MIDI score, each “state” is a pitch in the traditional Western tuning system. The patch analyzes the MIDI score and counts each instance of, say, the note C. It then examines the note that comes after each C in the score. If there are 10 C’s in the score, and 9 of those times the next note is Bb while the 1 other time it is D, the Markov chain posits that when a C is played, the next note will be Bb 90% of the time and D the other 10% of the time. The Markov chain does this for every note in the score and develops a probabilistic model of how the music moves from pitch to pitch. Here is the overall patch:
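The counting step described above can be sketched in a few lines of Python. This is an illustration, not the actual Max subpatch: it assumes the score has already been reduced to a plain list of note names (a real patch would more likely work with MIDI note numbers), and `learn_transitions` is a hypothetical name.

```python
from collections import Counter, defaultdict


def learn_transitions(notes):
    """Build a transition table from a sequence of notes.

    For each note, count which note immediately follows it, then divide
    by the total so each row becomes a set of probabilities.
    """
    counts = defaultdict(Counter)
    for current, following in zip(notes, notes[1:]):
        counts[current][following] += 1

    table = {}
    for current, followers in counts.items():
        total = sum(followers.values())
        table[current] = {note: n / total for note, n in followers.items()}
    return table


if __name__ == "__main__":
    # The example from the text: 10 C's, followed by Bb nine times and D once.
    score = ["C", "Bb"] * 9 + ["C", "D"]
    print(learn_transitions(score)["C"])  # {'Bb': 0.9, 'D': 0.1}
```

Note that a note which only ever appears at the very end of the score (D in this toy example) gets no row at all, so a generator built on this table needs some rule for what to do when it lands there.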

The sounds were sampled from CMU voice students. We then chopped up the files so that each sound file had one or two notes; these notes corresponded to the notes of the C harmonic minor scale. The Markov chain system used the data it had analyzed from the MIDI score (Silent Hill 2’s Promise Reprise, which is essentially an arpeggiation of the C minor scale) to influence the order and frequency of these files’ activations. We also had some percussive and atmospheric sounds that were triggered through the chain system as well. We added a simple spatialization element, keeping it subtle so as not to overwhelm.
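Here is a rough Python sketch of how a learned table could decide which sample file plays next. Everything concrete in it is hypothetical: the file names, the hand-written table (in the project it would come from analyzing Promise Reprise), and the fallback of jumping to a random scale tone when a pitch has no recorded successor.

```python
import random

# C harmonic minor, the pitch set the vocal samples were cut to match.
SCALE = ["C", "D", "Eb", "F", "G", "Ab", "B"]

# Hypothetical file names, one per pitch (not the project's real files).
SAMPLES = {pitch: f"voice_{pitch}.wav" for pitch in SCALE}

# Hypothetical learned table: TABLE[current][next] = probability.
TABLE = {
    "C": {"Eb": 0.5, "G": 0.5},
    "Eb": {"G": 0.7, "C": 0.3},
    "G": {"C": 0.6, "Ab": 0.4},
    "Ab": {"G": 0.8, "B": 0.2},
    "B": {"C": 1.0},
}


def choose_next(pitch, rng):
    """Sample the next pitch, falling back to any scale tone on a dead end."""
    row = TABLE.get(pitch)
    if not row:
        return rng.choice(SCALE)
    return rng.choices(list(row), weights=list(row.values()), k=1)[0]


def sample_sequence(start, length, rng):
    """Return `length` sample files whose order follows the chain."""
    files = []
    pitch = start
    for _ in range(length):
        files.append(SAMPLES[pitch])
        pitch = choose_next(pitch, rng)
    return files


if __name__ == "__main__":
    for name in sample_sequence("C", 8, random.Random(42)):
        print(name)
```

Pitches that appear often as successors in the table (C and G here) end up triggered more often, which is how the chain shapes both the order and the frequency of the samples.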

This is what we created:

The audio-visual patch was made by taking the patch we made in class (the cube visualization that responded to the loudness of a mic input) and expanding it to include a particle system programmed to change position whenever the input reached a certain peak. The position changes were executed using the Ease package from Cycling ’74.
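The peak-triggered movement could work roughly like the Python sketch below. It is an assumption about the mechanism, not the actual patch: the threshold and release levels are made up, re-arming only after the level drops (hysteresis) is a design choice so one sustained loud passage fires once, and the cubic ease-in-out curve is a generic stand-in rather than anything taken from the Ease package.

```python
import random


def peak_triggers(levels, threshold=0.8, release=0.6):
    """Yield indices where the level rises to `threshold` or above.

    After a trigger, the detector re-arms only once the level falls
    below `release`, so a sustained loud passage fires a single time.
    """
    armed = True
    for i, level in enumerate(levels):
        if armed and level >= threshold:
            armed = False
            yield i
        elif not armed and level < release:
            armed = True


def ease_in_out(t):
    """Cubic ease-in-out: slow start, fast middle, slow finish, t in [0, 1]."""
    return 4 * t**3 if t < 0.5 else 1 - (-2 * t + 2) ** 3 / 2


def eased_path(start, end, frames):
    """Interpolate from start to end over `frames` steps (frames >= 2)."""
    return [start + (end - start) * ease_in_out(f / (frames - 1))
            for f in range(frames)]


if __name__ == "__main__":
    levels = [0.2, 0.5, 0.85, 0.9, 0.4, 0.3, 0.82, 0.6]  # fake loudness values
    rng = random.Random(3)
    position = 0.0
    for i in peak_triggers(levels):
        target = rng.uniform(-1.0, 1.0)  # hypothetical new particle position
        print(f"peak at frame {i}:", [round(p, 2) for p in eased_path(position, target, 5)])
        position = target
```

Without the release level, a loud note hovering right around the threshold would fire on every frame and make the particles jitter instead of gliding.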

Golan Levin Response: Pyrotechnics

Levin introduced us to a lot of interesting projects and possibilities, but the ones that caught my attention most immediately were those that dealt with sonic visualization. Sure, we have waveforms and oscilloscopes and whatnot, but I’m talking low-tech, real-world physical objects here. I was very interested in the Rubens’ tube, being a vaguely fire-obsessed chap myself.

So I did a little digging and found this dude: https://www.youtube.com/watch?v=2awbKQ2DLRE

There’s a little group o’ folks in Denmark who’re teaching children about physics through cute little visualizations, and this one caught my eye. It’s a Rubens’ plane, I guess; it’s interesting in that the flames make a sort of pixel-art pattern, almost akin to the cymatic plate experiments. I love the idea of physical representations of sound and the ways in which sound can influence the physical environment. I like the concept of making the invisible visible through its interactions with the visible world. I’ve also recently become very interested in the interdisciplinarity of music and sound art. There are so many out-there projects one can produce if one just knows a little about physics or…fire. Music is a lovely medium because anything can become a piece of the pie: field recordings, fire, physics, fire, biology, or even fire!