RoboZen

Cecilia Ferrando, Cy Kim, Atefeh Mhd, Nitesh Sridhar

5/10/2017

Abstract:

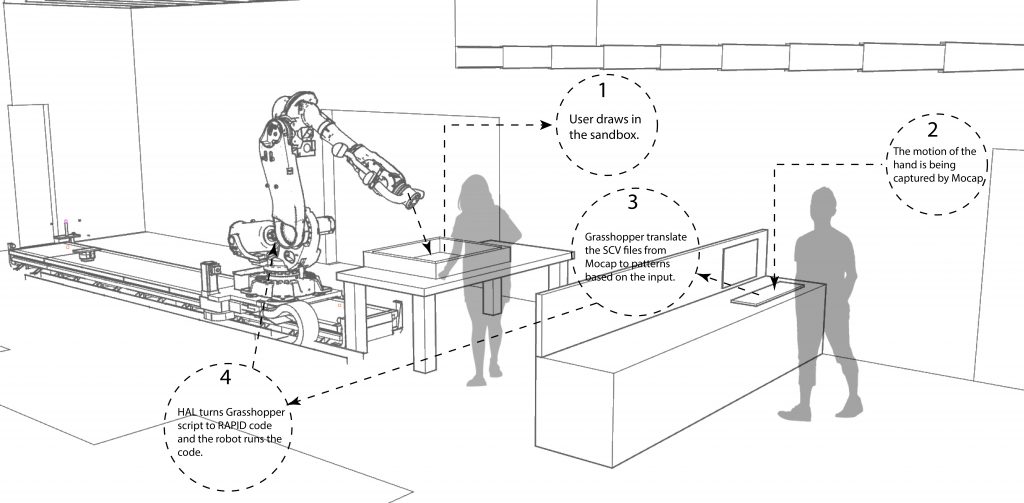

Our human-machine workflow uses a sandbox as a zen drawing template. Because sand is the drawing medium, patterns are easy to create and reset. Modeling sand is partly predictable, but it also has an unpredictable component that comes from the complex behavior of granular materials.

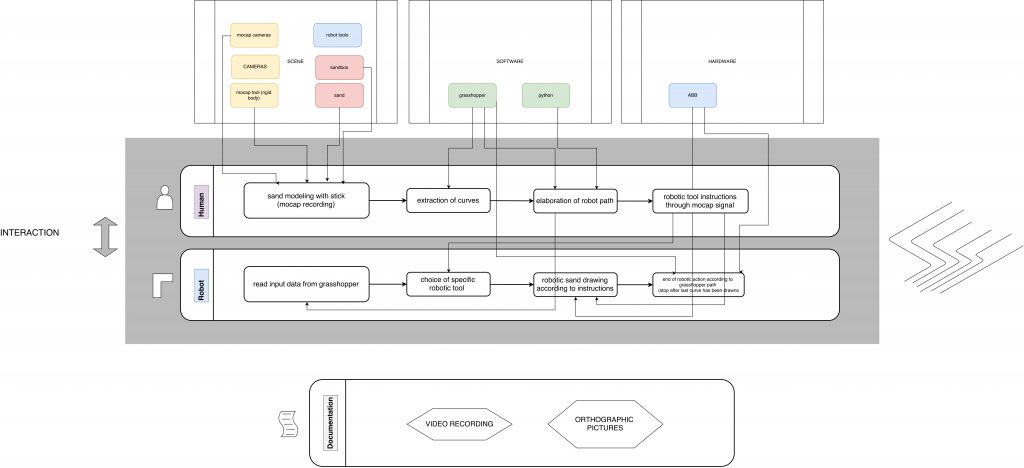

The workflow was initially designed as follows:

As a pattern or shape is drawn, Motive captures the motion of the hand tool moving through the sand. Grasshopper takes the raw CSV file as input and preprocesses it as follows:

- points are oriented in the correct way with respect to the Rhino model

- the points that are not part of the drawn pattern (for example, the ones recorded while the hand was moving into or out of the area) are dropped

- curves are interpolated and smoothed out
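The three preprocessing steps above can be sketched in plain Python. This is only an illustration, not the Grasshopper definition used in the project: it assumes a simplified CSV with one `x,y,z` sample per row (a real Motive export has more header rows and columns), a Y-up capture frame converted to a Z-up model frame, and a moving average in place of the curve interpolation. All function names and parameters here are hypothetical.

```python
import csv
import io


def load_points(csv_text):
    """Parse x,y,z rows; rows that do not hold three numbers are skipped."""
    points = []
    for row in csv.reader(io.StringIO(csv_text)):
        try:
            x, y, z = (float(v) for v in row[:3])
        except ValueError:
            continue  # header, blank, or incomplete row
        points.append((x, y, z))
    return points


def to_model_frame(point):
    """Assumed axis change: Y-up capture frame to Z-up model frame."""
    x, y, z = point
    return (x, -z, y)


def crop_to_box(points, x_range, y_range, max_height):
    """Keep samples inside the box footprint and at or below max_height."""
    return [
        p for p in points
        if x_range[0] <= p[0] <= x_range[1]
        and y_range[0] <= p[1] <= y_range[1]
        and p[2] <= max_height
    ]


def smooth(points, window=3):
    """Centered moving average; the window is truncated at both ends."""
    half = window // 2
    smoothed = []
    for i in range(len(points)):
        chunk = points[max(0, i - half): i + half + 1]
        n = len(chunk)
        smoothed.append(tuple(sum(c[k] for c in chunk) / n for k in range(3)))
    return smoothed
```

In this sketch the height threshold stands in for "the tool is touching the sand"; the actual criterion used to drop entry and exit samples is not described in this report.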

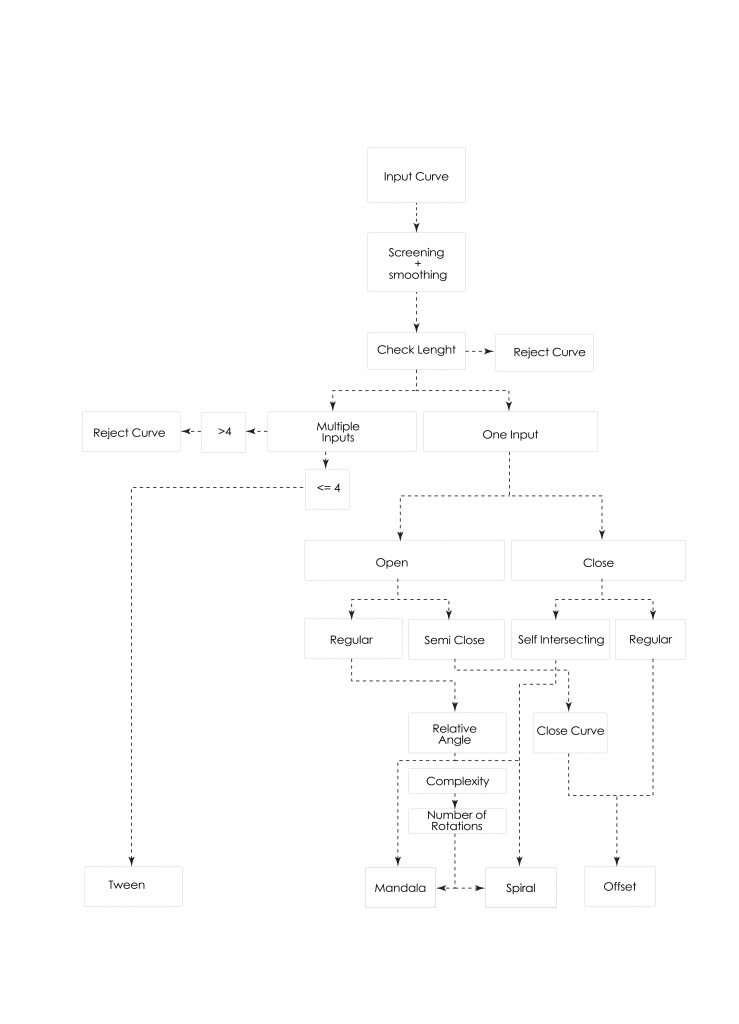

The preprocessed input curve (or curves, if the user drew more than one) is passed to a GhPython component that dispatches the input according to parameters intrinsic to the input. In particular, the code checks whether the input curves are:

- closed, open or self-intersecting

- long or short with respect to the dimensions of the box

- positioned at a large or small relative angle with respect to the center of the box

- complex or simple
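As a rough illustration of these checks, the sketch below classifies a 2D polyline in plain Python. It is not the project's GhPython code: the thresholds, the use of total turning angle as a stand-in for "complexity", and the reading of "relative angle" as the angle subtended at the box center by the curve's endpoints are all assumptions. The self-intersection test is left out for brevity.

```python
import math


def length(pts):
    """Total polyline length."""
    return sum(math.dist(a, b) for a, b in zip(pts, pts[1:]))


def angle_between(a, b):
    """Smallest absolute difference between two angles, in radians."""
    d = abs(a - b) % (2 * math.pi)
    return min(d, 2 * math.pi - d)


def subtended_angle(pts, center):
    """Degrees between the rays from the center to the curve's endpoints."""
    a0 = math.atan2(pts[0][1] - center[1], pts[0][0] - center[0])
    a1 = math.atan2(pts[-1][1] - center[1], pts[-1][0] - center[0])
    return math.degrees(angle_between(a0, a1))


def total_turning(pts):
    """Sum of absolute heading changes along the polyline, in degrees."""
    total = 0.0
    for a, b, c in zip(pts, pts[1:], pts[2:]):
        h1 = math.atan2(b[1] - a[1], b[0] - a[0])
        h2 = math.atan2(c[1] - b[1], c[0] - b[0])
        total += angle_between(h1, h2)
    return math.degrees(total)


def classify(pts, box_size, center, close_tol=0.05, long_ratio=0.5,
             wide_angle=90.0, complex_turning=360.0):
    """Return the flags a dispatcher could branch on (thresholds are guesses)."""
    return {
        "closed": math.dist(pts[0], pts[-1]) <= close_tol * box_size,
        "long": length(pts) >= long_ratio * box_size,
        "wide": subtended_angle(pts, center) >= wide_angle,
        "complex": total_turning(pts) >= complex_turning,
    }
```

A dispatcher could then branch on the returned flags, for example sending a curve with `closed` set to the offset mode.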

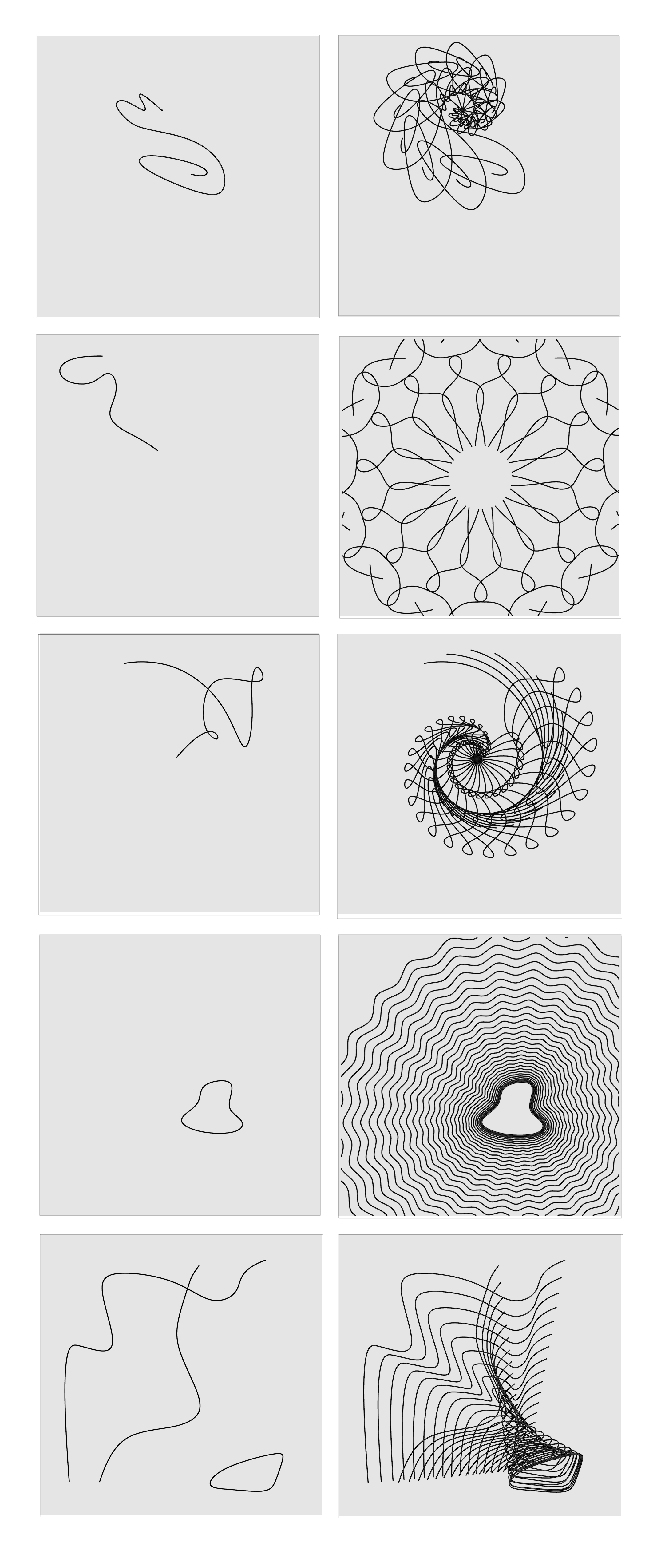

Depending on the nature of the input, the curves are dispatched to the final implementations in the following way:

In the mandala/radial symmetry mode, the software reflects and rotates the source curve into a mandala with rotational symmetry about the center of the box. In contrast, the spiral mode rotates the figure about the endpoints of the curve, with a variable scaling factor that shrinks the input curve as it is patterned outwards.
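Both modes reduce to a few 2D transforms, which the following plain-Python sketch illustrates. It is one possible reading of the description, not the project's implementation: the number of folds, the mirror axis, the fixed rotation step, and the constant scale factor are assumptions, and the spiral is interpreted as chaining each new copy onto the endpoint of the previous one.

```python
import math


def rotate(pts, center, angle):
    """Rotate points counter-clockwise about center by angle (radians)."""
    cx, cy = center
    c, s = math.cos(angle), math.sin(angle)
    return [(cx + c * (x - cx) - s * (y - cy),
             cy + s * (x - cx) + c * (y - cy)) for x, y in pts]


def mirror(pts, center):
    """Reflect points across the horizontal line through center."""
    return [(x, 2 * center[1] - y) for x, y in pts]


def mandala(curve, center, folds=6):
    """Return the curve and its mirror image, each rotated `folds` times."""
    mirrored = mirror(curve, center)
    copies = []
    for k in range(folds):
        angle = 2 * math.pi * k / folds
        copies.append(rotate(curve, center, angle))
        copies.append(rotate(mirrored, center, angle))
    return copies


def spiral(curve, count=5, step_angle=math.radians(30), scale=0.85):
    """Chain scaled, rotated copies, each starting at the previous endpoint."""
    copies = [list(curve)]
    for _ in range(1, count):
        prev = copies[-1]
        pivot = prev[-1]
        sx, sy = prev[0]
        # Move the previous copy so it starts at its own endpoint, scaled down.
        moved = [(pivot[0] + scale * (x - sx), pivot[1] + scale * (y - sy))
                 for x, y in prev]
        copies.append(rotate(moved, pivot, step_angle))
    return copies
```

With `folds=6`, `mandala` returns twelve curves: six rotated copies of the source and six of its mirror image.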

The user may draw multiple figures on the canvas, up to a maximum of four. In this case, a tweening mode interpolates between the curves by computing a number of intermediate instances between consecutive curves. These patterning modes also treat closed curves as objects, aiming to avoid them rather than intersect or cover them. In this way the patterning is reminiscent of the rake lines seen in zen gardens, which avoid the rocks and small areas of greenery located within the garden.
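Tweening between two consecutive curves can be illustrated with the plain-Python sketch below. It assumes that both curves are first resampled to the same number of points by arc length so that points can be paired, and that intermediate curves are straight linear blends; neither detail is stated in this report, and the avoidance of closed curves is not modeled here.

```python
import math


def resample(pts, n):
    """Return n points (n >= 2) spaced evenly by arc length along a polyline."""
    cum = [0.0]
    for a, b in zip(pts, pts[1:]):
        cum.append(cum[-1] + math.dist(a, b))
    total = cum[-1]
    out, seg = [], 0
    for i in range(n):
        target = total * i / (n - 1)
        # Advance to the segment that contains the target arc length.
        while seg < len(pts) - 2 and cum[seg + 1] < target:
            seg += 1
        span = cum[seg + 1] - cum[seg]
        t = (target - cum[seg]) / span if span > 0 else 0.0
        a, b = pts[seg], pts[seg + 1]
        out.append((a[0] + t * (b[0] - a[0]), a[1] + t * (b[1] - a[1])))
    return out


def tween(curve_a, curve_b, steps, samples=20):
    """Return `steps` intermediate curves strictly between curve_a and curve_b."""
    a = resample(curve_a, samples)
    b = resample(curve_b, samples)
    result = []
    for k in range(1, steps + 1):
        t = k / (steps + 1)
        result.append([(pa[0] + t * (pb[0] - pa[0]), pa[1] + t * (pb[1] - pa[1]))
                       for pa, pb in zip(a, b)])
    return result
```

For more than two figures, the same function could be applied to each consecutive pair of curves in turn.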

When the offset mode is activated by a closed curve, the implementation follows the same structure as the offset command in Grasshopper, except that a zig-zag transformation is applied as the figure is offset outwards. The offset distance is also variable.
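The idea of a zig-zag offset with a variable distance can be sketched in plain Python as follows. Note that this is a deliberately simplified radial approximation that pushes vertices away from the vertex centroid, not a true curve offset like the Grasshopper command, and it only behaves sensibly for roughly convex shapes. The alternating amplitude and the geometric growth of the offset distance are assumptions chosen for illustration.

```python
import math


def centroid(pts):
    """Average of the vertices (the closing point must not be repeated)."""
    n = len(pts)
    return (sum(p[0] for p in pts) / n, sum(p[1] for p in pts) / n)


def zigzag_offset(pts, distance, amplitude):
    """Push vertices outward; alternate vertices get distance +/- amplitude."""
    cx, cy = centroid(pts)
    out = []
    for i, (x, y) in enumerate(pts):
        r = math.hypot(x - cx, y - cy)
        if r == 0:
            out.append((x, y))  # a vertex at the centroid has no direction
            continue
        d = distance + (amplitude if i % 2 == 0 else -amplitude)
        k = (r + d) / r
        out.append((cx + k * (x - cx), cy + k * (y - cy)))
    return out


def offset_rings(pts, count, first=1.0, growth=1.5, amplitude=0.2):
    """Build `count` rings whose spacing grows by `growth` at each step."""
    rings, total, step = [], 0.0, first
    for _ in range(count):
        total += step
        rings.append(zigzag_offset(pts, total, amplitude))
        step *= growth
    return rings
```

For example, calling `offset_rings` with `first=1.0` and `growth=2.0` places rings at cumulative distances of 1, 3 and 7 units from the source curve.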

Objectives:

The purpose of the Zen Robot Garden is to create an experience in which the user watches the robot draw patterns in the sand, both as a meditative experience (such as creating a mandala or a zen garden pattern) and as an educational one: a way to learn how symmetries, scaling and other geometric operations can produce very different results depending on the input parameters. There is an element of surprise in seeing what the final sand pattern looks like, which comes from two sources:

- the hard-to-predict aspect of the completed pattern

- the self-erasing and complex behavior of the sand as it is being modeled

As users continue to explore the constraint space of the sandbox curves, they can implicitly infer the parametric rules and so learn how to bring out different patterns.

The ABB 6-axis robot uses a tool with a fork-like head to act within the same sandbox as the user, in order to create small, rake-like patterns in the sand similar to those that can be seen in a true zen garden. The collaboration between the robot and human user allows the user to create complex forms out of simple shapes and drawing movements. Watching the robot perform the task puts the user in a relaxing state similar to the meditative function of a zen garden.

Implementation:

We chose a sandbox because sand is easy to work with and draw in, and also simple to reset. Additionally, it creates a collaborative workspace for the robot and the human user, allowing them to explore the medium together. Watching the robot move slowly through the sand, replacing old curves with new ones, creates a relaxing atmosphere and echoes the impermanence and patience of true zen garden pattern making.

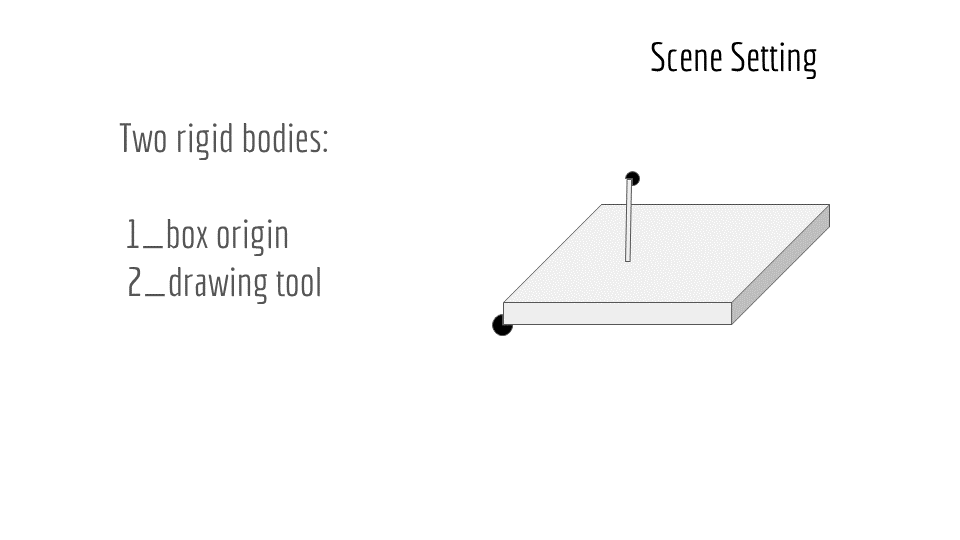

We started by defining a rigid body for the sandbox as well as for the drawing tool, which helped capture the plane of the box. We also captured the box as a work object for easy use with the robot. The drawing tool was used in repeated takes to capture the movement of the user’s hand as a curve that mirrored what they had drawn in the sand.

Outcomes:

We were able to create a logical system that allows the user to discover different outputs based on their input drawings without having to open settings or menus. The transformations and output parameters used to create the final curve patterns are based directly on properties of the input curves, which creates a process that seems opaque and surprising at first but can be uncovered through repeated use.

Our greatest difficulty was taking a curve output from Motive’s motion capture and bringing it into Grasshopper. Some of the capture settings can vary between takes, and it was difficult to create a parametric solution that covered all the curves while still leaving them unchanged enough to be analyzed and reinterpreted for the robot output.

Contribution:

All the group members collaborated in shaping and fine-tuning the initial concept for the workflow.

Cecilia and Atefeh designed the Grasshopper script which takes the motion capture data and extracts the user’s input curves. They also coded the script that generates the curve output based on the parametric properties of the input.

Cy created the HAL script which takes the output curves and generates the robot’s motion paths from them. She also CNC routed and assembled the sandbox.

Nitesh designed and 3D printed the tooltip for the robot, helped set up the work objects and robot paths, and supplied the sand.

Inspiration:

Procedural Landscapes – Gramazio + Kohler

Photo Documentation: