Last semester I recorded myself reading the first page of my favorite book, ‘The Hobbit’ by J.R.R. Tolkien. I made several takes so that I could vary my inflection. I thought it would be interesting to recreate the song ‘Concerning Hobbits’ by Howard Shore using only generative processing of my voice. After a few hours of work, I realized this was a much larger task than I had anticipated, and the outcome was very abstracted from the original music. I was still very excited about the amount of experience I gained experimenting in both Logic Pro X and Pro Tools. After the first 30 seconds of the song were abstracted, I overlaid my voice recording and added the original song underneath for comparison. It was interesting how abstracted everything became, and I hope to develop this project much further in order to create the entire song.

Category: Projects

Some kind of atmosphere

In this short experiment, my goal was to create an atmosphere with some elements of a familiar setting, and other elements that would cause the listener to question the environment. I exclusively used field recordings, but processed each sample to varying degrees, allowing some elements to remain recognizable and other elements to be transformed into curious effects.

Back to the Garage

What does it mean to push sound to its limit? Sound doesn’t have limits, except maybe 20 Hz to 20 kHz. The imagination, however, is extremely limited.

This piece doesn’t even begin to push any of these.

But the sound design for music workshop was a valuable reminder that paying attention to how sounds are arranged on the micro level can yield very good results.

I also explored, in a more limited way, how many directions I could move a sound at once.

Pure Data: random drone reverb

One element I am interested in including in the Metamorphosis section of the installation is a set of tracks inspired by the flapping of a butterfly’s wings. This PD patch attempts to create a couple of “fluttery” drones and randomly reverberates them to create a more dynamic feeling. Download the txt file, change the extension to .pd, and it should open. randomreverb

Personal Project: Sonification of an Eye

I have always been fascinated by eyes. The colors, shapes, and textures of a person’s iris are as unique to that individual as their fingerprints, and so identifiable that biometric systems are able to identify one person from another with ease.

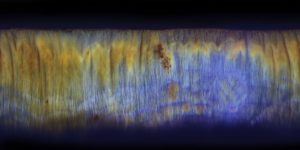

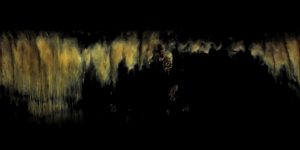

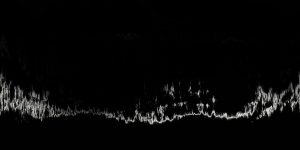

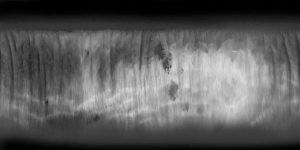

For my project, I sought to sonify the iris, to create an experimental composition based on the characteristics of my own eye. First, I needed to photograph my eye. I did this simply using a macro attachment for my phone. With a base image to work with, I then converted the image of my eye from polar to rectangular coordinates. This has the effect of “unwrapping” the circular form of the eye, so that it forms a linear landscape. These “unwrapped” eyes remind me somewhat of spectrogram images; this was one of the observations that inspired this project.
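The “unwrapping” step can be sketched in a few lines of Python. This is only an illustration, not the tool used for the project: the function name `unwrap_polar`, its parameters, and the nearest-neighbor sampling are all assumptions made for the example.

```python
import numpy as np

def unwrap_polar(image, center, max_radius, n_angles=360, n_radii=100):
    """Resample a circular region of `image` into a rectangle.

    Output rows correspond to radius (0 at the top) and columns to angle.
    Nearest-neighbor sampling keeps the sketch short; a real tool would
    usually interpolate.
    """
    cy, cx = center
    radii = np.linspace(0, max_radius, n_radii)
    angles = np.linspace(0, 2 * np.pi, n_angles, endpoint=False)
    r_grid, a_grid = np.meshgrid(radii, angles, indexing="ij")
    # Convert each (radius, angle) pair back to pixel coordinates.
    ys = np.rint(cy + r_grid * np.sin(a_grid)).astype(int)
    xs = np.rint(cx + r_grid * np.cos(a_grid)).astype(int)
    # Clamp to the image bounds so samples near the edge stay valid.
    ys = np.clip(ys, 0, image.shape[0] - 1)
    xs = np.clip(xs, 0, image.shape[1] - 1)
    return image[ys, xs]
```

Calling `unwrap_polar(photo, (cy, cx), iris_radius)` on a grayscale array would give a strip in which one full trip around the iris runs from left to right.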

Next, I sought to sonify this image. How could I read it like a score? I settled on using Metasynth, which conveniently contains a whole system for reading images as sound. However, the unprocessed image was difficult to change into anything but chaotic noise, so to develop the different “instruments” in my composition, I processed the original image quite a bit.

Using ImageJ, a program produced by the National Institutes of Health, I was able to identify and separate the various features in my eye into several less complicated images. These simpler images were much easier to transform into sound. Separating the color channels of the image allowed me to create different drones; identifying certain edges, valleys, and fissures allowed me to develop the more “note-like” elements of the composition.

These are just a few of the layers I created for use in my soundscape; there were seven in total. In Metasynth, I was able to import these images and read them like scores. Each layer has a different process, but this is the general premise: I would pass a wave or noise (I chose pink noise for most tracks) into a wavesynth, grainsynth, or sampler. I would define the size of the image, and a tone map to divide that image into frequencies based on the pixels of the image. For instance, a micro-32 map would divide my 512 pixel high image into 16 notes. I am still working on an understanding of exactly how Metasynth works, but from this point the synthesizer can read the image like a score. Through experimentation, I developed a loop from each of the 7 images I had processed from the photograph of my eye. Each loop represented one rotation around the contours of the eye.
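To make the idea of reading an image like a score more concrete, here is a rough Python sketch. It is not how Metasynth works internally, and it swaps the filtered-noise approach described above for a simple bank of sine oscillators: each image row gets one frequency, pixel brightness sets that oscillator's loudness, and the columns are read left to right as time. The function name, the frequency range, and the geometric frequency spacing are assumptions for illustration only.

```python
import numpy as np

def sonify_image(image, duration=4.0, sample_rate=22050,
                 f_low=110.0, f_high=3520.0):
    """Turn a 2-D brightness array (values 0..1) into one audio loop.

    Row 0 (top of the image) is the highest pitch; column 0 is the
    start of the loop.
    """
    n_rows, n_cols = image.shape
    n_samples = int(duration * sample_rate)
    t = np.arange(n_samples) / sample_rate
    # One frequency per row, spaced evenly in pitch (hypothetical tone map).
    freqs = np.geomspace(f_high, f_low, n_rows)
    # Index of the image column under the "playhead" at each sample.
    cols = np.minimum((t / duration * n_cols).astype(int), n_cols - 1)
    audio = np.zeros(n_samples)
    for row, freq in enumerate(freqs):
        amplitude = image[row, cols]  # brightness over time for this row
        audio += amplitude * np.sin(2 * np.pi * freq * t)
    peak = np.max(np.abs(audio))
    return audio / peak if peak > 0 else audio
```

With an unwrapped eye image as input, one pass through the columns corresponds to one rotation around the eye, which matches the loop structure described above.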

In Audacity, I took all of these loops and mixed them into an experimental composition. As a visual aid to what is being played, I re-polarized the images to produce the video I played in class. The sweeping arm across the image represents the location on the eye that is being sonified at that time. However, since I was creatively mixing between layers in the composition (rather than just fading from one image layer to the next, as in the video), it wasn’t always easy to understand the connection between image and sound. A further improvement to this project would be to create a video where the opacity of the image layers represents the exact mixing I was doing in the audio track.

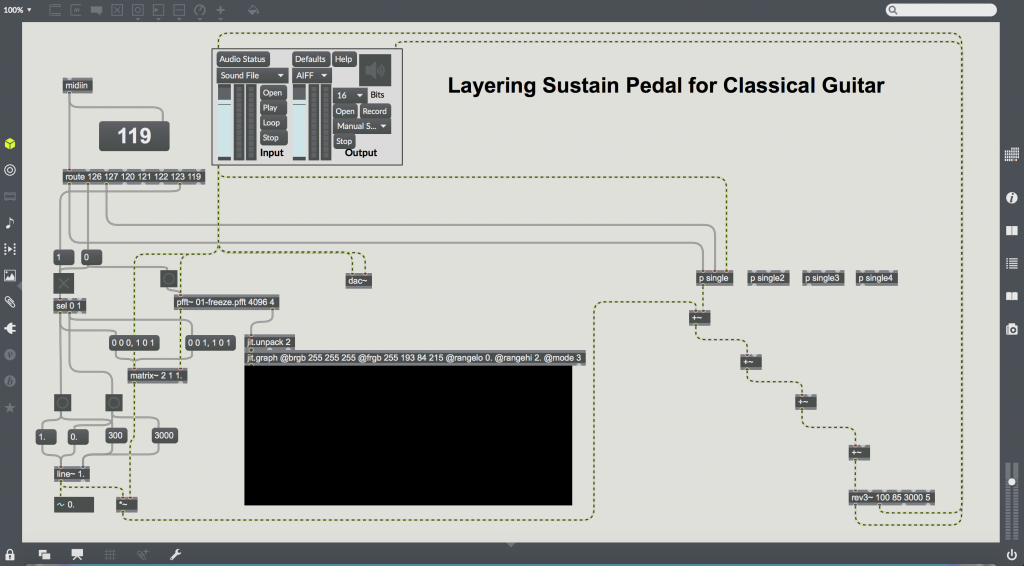

Personal Project: FFT Foot Pedal for Classical Guitar

Because the sound produced by a nylon-string guitar decays quickly after a string is plucked, the instrument is not very useful for long sustained notes. Inspired by the sustain pedal present on most pianos, I created a software patch in Max 7 that allows the user to use a MIDI foot pedal as an enhanced sustain pedal for guitar. By pressing the foot pedal buttons, a guitarist can freeze their current sound and artificially sustain it, and overlay up to 5 sustained sounds on top of each other. The guitarist can also use the pedal to slowly fade out all currently sustained sounds, or just the last one they added.

Hardware

For this project I attached a bridge pickup to a classical guitar and sent the analog signal through a MOTU 4pre and into my laptop. For the foot pedal, I used a Logidy UMI3 MIDI foot controller.

Software

I used Jean Francois Charles’ freeze patch as a starting point for my patch to save a matrix of FFT data for a given sample. This sample is then repeatedly resynthesized using an inverse FFT to produce the drone, with some rev3 reverb added on top. Several drones can be sustained at once by adding the signals. Starting and stopping the drones is done gradually using the line object.
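The freeze idea can be sketched outside Max as well. The Python below is not a port of the patch: it assumes one common way of freezing a sound, which is to keep the magnitudes of a single FFT frame and resynthesize them over and over with fresh random phases, overlap-adding the results. The function names, frame size, and hop size are assumptions chosen for the example, and the linear ramp stands in for what the line object does in the patch.

```python
import numpy as np

def freeze(signal, position, frame_size=2048, n_frames=40, seed=0):
    """Sustain the spectrum found at `position` as a drone.

    `signal` must contain at least `position + frame_size` samples.
    """
    rng = np.random.default_rng(seed)
    hop = frame_size // 4
    window = np.hanning(frame_size)
    # Analyze one windowed frame and keep only its magnitudes.
    frame = signal[position:position + frame_size] * window
    magnitudes = np.abs(np.fft.rfft(frame))
    out = np.zeros(hop * (n_frames - 1) + frame_size)
    for i in range(n_frames):
        # New random phases each frame avoid an audible repeating loop.
        phases = rng.uniform(0, 2 * np.pi, magnitudes.shape)
        grain = np.fft.irfft(magnitudes * np.exp(1j * phases), n=frame_size)
        out[i * hop:i * hop + frame_size] += grain * window
    peak = np.max(np.abs(out))
    return out / peak if peak > 0 else out

def apply_linear_fades(drone, fade_samples):
    """Ramp the drone in and out linearly (fade_samples must be >= 1)."""
    envelope = np.ones(len(drone))
    ramp = np.linspace(0.0, 1.0, fade_samples)
    envelope[:fade_samples] = ramp
    envelope[-fade_samples:] = ramp[::-1]
    return drone * envelope
```

Layering several drones, as the pedal does, would then amount to summing the arrays returned by separate `freeze` calls.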

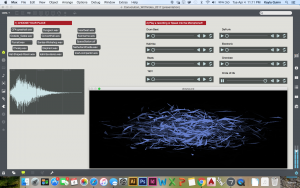

Convolution Simulator

Using Max, I created my own convolution simulator. I played around with a simulator from an outside source that was shown to me last semester. I then used old impulse response (IR) recordings from around campus to change the location at will. I also downloaded some other IR recordings from free sound sites.
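For readers who want to see the underlying operation outside Max, here is a minimal Python sketch of convolving a dry sound with an impulse response. It is not the patch itself: the function name is hypothetical, and it assumes both inputs are mono arrays recorded at the same sample rate.

```python
import numpy as np

def convolve_with_ir(dry, impulse_response):
    """Place a dry mono signal "in" the room captured by an impulse response.

    Multiplying the two spectra is equivalent to convolving the signals
    in time, and is much faster for long impulse responses.
    """
    n = len(dry) + len(impulse_response) - 1
    # Pad to a power of two so the FFT is fast and no wrap-around occurs.
    n_fft = 1 << (n - 1).bit_length()
    spectrum = np.fft.rfft(dry, n_fft) * np.fft.rfft(impulse_response, n_fft)
    wet = np.fft.irfft(spectrum, n_fft)[:n]
    # Normalize so the result does not clip on playback.
    peak = np.max(np.abs(wet))
    return wet / peak if peak > 0 else wet
```

Swapping in a different impulse response array is all it takes to move the same dry sound to a different space.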

I also added a visual component to the patch because I wanted to know what the waveform of the IR was doing, and what the sound looked like after going through the IR.

I also tried to get a variety of sounds to play through each space, from drums, drones, and low frequencies to horns, speech, kalimba, songs, and ambient noise. I also added an ADC to the patch so people can set up a microphone, create their own noise, and hear what it would sound like if they were standing in a different room.

Here’s an example of the changing sounds you could play with:

The patch was set up to be user-friendly, so that an audience member can use and listen to the patch with little to no assistance.

The patch code can be found here:

https://github.com/kqherself/ESS-S17/blob/53ebd459209eb936e9ea7ba1643abf3e0055aa3e/Convolution

----------begin_max5_patcher----------

4846.3oc6cs0aiibk9Y2+J3HrujE1B08pXdZlzyjcB1IaOnaDDDLInAkTIIN

lhTghxc6IH929VWHonr0khR7lMhaz1R7hTc9py85TG9ud2Milj7U4lQd+due

w6la9Wu6laLGRefaxe+MiVE70oQAaLW1noIqVIiyFcq8bYxulYN96CSmFI8R

l6EENWVb50oxMpqNHKLI9yoxoY1uJhOZL8VOeFXLV8CDeqGjBFCt0Co9s2+n

3tCxltLLdQ8uy3sqBiijYlwL7.ClpGOblgBRl7q2IHi18IjrMq3i.jezMYOF

IMW8H8A92u6c5ecqiX25nfGmDjV7UX+7ydbszRaiFcq2nPE1tC.NB7InZJ12

GN1W8C1+VOLhY.A+y.etcmWL7QOL7gZD3KV9E0WxK371LWiqO4A891njj0dv

ihuaBWDGDoQ4IAwKF4.PAAXt8ul+fPGCjPuDL7ItBFyjyC1FUJSsI3A4rOq9

HTilOGjkkFNYalUJ8lRnQAVAaBmlsMNTSJ+dOBImI8lQyShhR9xhnjIAQYxU

qSpvBqOa5pf3roIoZxMexr7rqRlYGTlO9QEGNIMbQnB7hjwKxVZwHDxmJ.i4

9LAWISNJKb58aJQ0c2S4P.pkQKN65PEnejAw+baPTX1iGXbjEtRtIKUptW6f

PMeXNkgWptbTpOqMAKjufkJKMXyx6Tp5VGLMKIc7WBd33BsmSZEqnZE7.Yjw

.FiIfpWifufc5.LgPLyHaJPFdvCbSGjGzAAUH.bXlSXiHodLb8SamrJHMLVd

kHJlnQTAbLS+CQoPC6FdhsHIQXzBhaL7zm1Gv4OFjc2mVFrVN6tOljr55.U5

NCpbvNNUL0MjkZQVAw7mCbWWLzh6Cn8dkBnUSBtaSlLUlbcHKjhGKTXKR7Lr

ERbAa4V4dBaLVaylb3a7RgWApOf2+OY1RYZTP7r2GrQ8cdk5CfJIYEBSzXCl

MFp.XNxxFV.7mEkoFUsbwNTFIZP8s8BW7mT1ujexNxFGDN+ZT4B2Aw.gxnuu

uPn8jzYDlAyckRIMjCw9MIBCaSD9Xg8.8288dDnyWAcT..vTh7Hpxwa0qA90

MhGEJar1gXMaDO9rWCQ7HnpvSJvPA6BC6QvLF4PTTyF1iOueC6QyDpEuag.f

7gUBzlQqePPh23AAQPbekHMTPcKDHnug40GMjhC5XZ2P+NueVwj4E3oFcIoy

TnrWRp2mVKCt2SI2l3oLi68mCmllrdYRr7a9luo3yHR4k+zjswlOH7oktYZF

qBoafEezFT7eoZtIKllDkjlSyJMhTfPoQDLFp7pBXeEV4ckNfqynSf4m+oW7

8kqVkvaBUBnpfYkwrwWt89U0uqFWA7QSWmx8qj6kmKQcPfRMoFKEbiMLCtVK

6VbalSZzjz4+ZvjkxgjJ1rfnRuRqmMKNVTZ2uQsYA5WaVSyY.aI6Vbg0cIAW

y7JpucK3a8j2wwJMebHAydUl5tioX6GhTCpzj3xu3ypZSQYlPXf0U0FMOATF

drlT+F6Um9MHgTFGX8zuwTwtW3fYitTD8rO4SShe3y+ZR7hnG0I2nETwwnhR

jCAA0VEm3stq4Bth6bLCxg5LU7Vy27uO3OtM9dGUxQ2kp75oiiPQFlLBog0w

AG0Cd89GjApulyELhnLcMWtauXHswc6EzGP1+qMQ3ibOBtK2fJzG1zfF+0Wr

BbgITAsrZ8LkRrK9RSZEk2yVQsJ4ZoXDnHe6ZVAqssS9acamH.mNlI7Y97Wu

lNaJwy8xO0EGIOlfKWaolTFkH5UYz+9nu8i+k+524cm2mlFjJm4kk3MQ5EDk

DKGuZM9uOpEDcwhcqSGFT+zRyYu488E6SGiDPD8+H+tm2IWbjpH65W1jhtv9

055KKxgVPTEwPVwSVskRQu0sxR8IbsUVeB7UYR3NFKV3hmNGGzo3bxYYfLrI

P.7YpSqCIXwayhA3Xj8zfHoGXrZdhO16Nn5EicohKN..PyCDhaKmJf+oQ.1K

Q.LnGPf4YIqtTJlYo3bkrjZOki86iJrAbgyvPteN8hzYlgSTlin0OGdDTeLK

+axzjiKfOOJIHSagn7Em0TAhXWjPhEUH0e1mbj5qB2l.QVvZ0WkxTJ3Zz1AI

F8abfQlmIpOsS5Al.EsGFeRRWcESShiMz4oHeqiALqJOFn9jOsOz0Kim8jW1

WRFcbJCyJKSNBFXR.KkoHQV8IwVcEgNIIphh6DjHLupIIF4VFu9TVqletrjE

KhjGkCc+XGNX82aqlYh0SVRwucl7nfcfWZvJoxQ6OKiClXoIPOv2l68+2Frc

VXx7PkCKee51U+jxq+lNKaEnGwpXCgw017F08.jdk44uJBT+wXnu.QdS44uR

mgmhqJ6NY7CmRuAP4hOSAB.ydLPXz5yo0V8AE0lpOTrNYJ78XxBmWB..DiE.

.fnK2WDRnWjiKQKRqV73y2Fa4iNqCcE+2EBGMFWR340MJ.XClATGRmTeEnji

pcHX1r0IJs9aJpePvs4+Rw8gFK3H.WnOjuxNsNAuBphKU.QX8Ao.h4JYDEKJ

B36yrGU6+t4DP.nxGoU7pAkqBVs9LhUbAYLRqZQWMiDaPTh5aTlRGxRUbhkO

5JjmXuxjm3JamzqURh29RRPexNYAkKgDDkxMxSHyAwP3XHGfPlCxPVIG043b

7XeLCZuZFUWxAMlH01USjmNIxmcBfps+JvXtYeA4aqoh5GpBUT+4fVMYvqxR

leYIvXODQmS7hrXbAotg1potQIto9Xqw7uwIurp4U6f6lIlYcDrzOE3bVbN.

8y.CL1hLuHuIWmDyd3CQAG9vb9CeiV6ZgOs55jbzMAdP5BYlG3xjOXXtovor

zOkui+n9Ua.qW1il+Su+K3ER66Ian7IwUcCGh1w8Qxstd9eZ9FmpQ3+Iu93+

oXPE4+qj+ucyvWS6e.lRGSfWt0.VeYM3XbBSmewpB1SLXOUA0ugpv3u9bSnH

Xk7Zy6RXGFZ9LhlsYZx5SkU3hvUn4UntdxmV+rdyZ0bCe+lnvYmPtWmbXGqu

jcQnfs47jhqPlEYEDdhRkv1pAfPxNokC8Qc1xZZn4HoBvVqScwpvYgwIYxKS

IBlm2.bDld6fQ+gtMBTWFJNrG7kXcvz6UQtBtRRWwGTP5z5WIM79Xch0S3gw

tHfc64WKFLMWDQXx5C4Bl86ikHdVvzmNglRRda0vXdjZSU2EXYjS5g0GbcRz

iO4o+8lGiyVdmh1hlckqE993AZWT0Pre8QEZezQPzKmlmdilTisLQ82dNJaq

FwgleGSfwsIuj72NiDAzvBnCUzHua00o+syS6PTerX4sQSHDhy24xBvk1DBg

f23kPIT3q.IeJ10JcVKjn+Q7ZXS9A+cdu+G+vG9zO3829ve4id+7O8cu+GNX

G1.cl12Dw3WooMMUoAav4lbQzNMXCLjc3FrAF2D65A9vnAabrXlWJCRylnLC

bss5PqZv79Wja8hOfspQgnh9sUS0jsHv9HQj+Pjb8RkxhqrK7w10L3zwYUTZ

VB2ZcjHFXrVCCyubim32bcgOLuO.1edaZrLJ5Jw0c6BcMGWArBAN0bCU2hsP

XY4MGtFDS6krl+9j3oxzreL3pwUFrjesZmNU3FrBIF1UXdGOk1bcZORuz5Xe

+xjM2+30AoHpsHLelF.tabpfbesqz+BgTTywtJ5CX8Cay1DNS94+m.kycWIC

qkIUWxOU3WgP2Zfr.VdkjVoKm1bMQVLqWZ+lJiVAat6uljd+lkIqqBuGz4sK

.yInRG61S6KF3Bp6iIufkFY8Qi1D8jydA0+9swKjIwWq1WQo12bmubRKgu0a

q8ZirMGabufmu+O9c2sHU4I6xfFzWgpo73.auoC.sBqFhp8AYfeyognUcsch

gd1sZCODJ+xCgaBmDVDdYw2Sx74ajOacEJItnjo2KmMKMXwloop3qqFFyzHU

nuYKSS1tXY0iay++xWdC1S7vKOwjEEweWdjjT8RkT4HmM4dAoKxqMrQeq5ia

qtL78PmLdwJaWhcywHqlL+ipOBb.OTJmJCVUjbiUIaTpamMVMsrdWt4d1bcC

l2WotuYRAfqo5gsM0IJwtyXpe4yi6kcFiml+0AxhaHHb8WWPjeOjfuzpjUM2

aiJmZJ3r08Q6xFWvYh04.b1n9nZX9ueRqDhdM40GqhxS+LBIeYvI0OY9H3qS

JGZyc6UP3sZ3VmMe746aeca56xRGOpUKl7nvGjiWDDFWRCODjVp1e2Y+E3+v

gLxu6U6s+UiB23PSlvlqdhsdkyWPFxocoAA3iQ4ZFnZidkcZaeaWNoN3boyg

pXM9RAD.NQ8..O37Bs.lRCOjKS10EnbAANwRC7PPzVYx7hCWb7piknj3Embx

ZuqVQWoYG3xOz0VL8BNv4Vo.R84tiuaSUs+4CLrbrCd1v3vrvfnROzJWqgpW

zV0EUxXSrm2tv.M6CpHkMIOn5e+oOd2Ocqm8cH869XCXphUwSaR889.0KaKY

uIp+E4EcZ+ttc2eM++z4RytmrILXtjccqeSzQxDIoMQhv34IOoXEN+1Pwnd6

1Csw8OwKbolMfPPY2ZBbYkrC7HYEyuMgtIamOWl9jQNxSuM1.mGDcXwbs41N

2uWH7B.i93wqWEv3mZNvHuLdJAi5WWjvdonn2rVEvtEKtbsrEoRNOxNQ86eY

vVMN.io0YoIqOpOUUO4L4zDc706WkiGr.ZOCpXS8Xde1JuivvpyF6CBG4f+N

GZAi08BceJ22juEJEwomZoiKlQhjKjwyxK6mj0JVBukxT4ntwWoJSBmyOoic

oEyOjC5niICU4X206CyYJQT0ehBlJuTIpbdG6xi4W+87peO3rxNUqduHIqd2

o7jqIzzliLU69jPgeswm9nISt5q+pmBiF+g0mxOF2T0ZMzHp+lSrO5jXq1Fk

Ep6f7IQOHex3SutmK4dDrtjQh7mrsJma400g1V0Oj1nqOV+dpLj.Zol9Htea

5iSR2tYobSi2sWxwq7V22EzSkQuwqTwW6s7kSjChMg+l7h2hXkwWixerVTeu

gaUi2JiwRMSPc53cu7EEAYWmxnn5SKR89ennChYTJcktRa88nzKwc1W2Crot

qVCcDCkrSNIXFOlpgHe0BKlSLTk936OyrIYa5zBbu3ALn2NxZlhgWIFULN+k

R6UUtlkgylIqJCMZV3FcPBFhAbPVjFc3namac03g6v34YC5Vc7PcY7zcCGxv

h6gLv3dvCqYK3vZ1BNvls.tLaA6twiCCmyo4YU3d8YHkgMS6S.iIlcXb02t6

apwn.wvx3hXfYbQ3hwEQ2YbQ3jwER2MdPCJ0m5IB3YFMvta1hOrDt3CLgKtK

BW7Nb5hMrltXCroKWz8vICvwCraFOt3JIG0c3C1AsglwSGgOtXqf2cNuwcw4

VdGJuCFX7OfgE+CyEyWrtyYClKluXcm9PlK1Kd1ftUGOzgk7Ei353A1cimyI

ew5P7wE6WrtS+LCMv3efCL9GnK7Ocm8BlK1udFSVqNdFTglRcIWQrtyadpSw

l1g3C2AtYZ2IsScJXvtyZJ0EqEztSZm5h0BZ248C0EsOzty5E0goKT2NbNG5

f.c1vg3h1Gd2oMj3B9P5NtYhSKSV2ocl3hzNo6hEzowCtCwG3fxYCBXXMbFT

4UcfsBzXWbLD2cplwhAEuClOrFNCqnJvNYWuCGOCq0CDOrTKicQsLr6hovEi

ncWDEng0pkhFXqVJxEMOHZ2NdftLd5FWBQCKuLPNIb0cQTfbQyLlVyhUBZ6u

pDeaKND.8KeaKTrRFZ.eN8m0kFP1drT9ykcHFZdzNP2qBjaXZ.cNZfT24AeQ

4f9V6aAsLMb15rAWWZvtGpo.pov2zO6+n4usMnAWbM3Y53ZU4SW7Mfzg5u.t

hOcz3YXoceX4a.zkXHQcmqblZricNEDcmi2vgUTaPmpTnta3LrhRBBvCrwyP

Ce.CrwiKQ0hFfimtwxtSNZboU4pc2kErd8CxzM4ellQxnUA+ps+Ovu071vX6

aM6tvQoRcqOvd8lVivnfzoKCyjSy1lZ2DaeUX62Vlc.ZZ71v7tjfBCdW9lc6

Y6nsx8Z2pGs6ENyfub2rtGLs2SgghFTg5G8yEm7mmBv8aPEkM0BceaPQsxW7

HN+4OMDlmDW1kHT.8eNINXZxnJOHHlGFEUNLptUZK15iiVjFLKb2yshaFs2y

NBjuJhGySLBLvWXd3oqdk5P6OzyuKXwsInPfoObHHHDw9JL.PPG5tPm6g9fZ

pKdgciGh3U2QsoIqSRK2Lsiw9kW+1rjRBqfgpbKydaN2cp5rO+whsce6WbOU

kFJm6s6O6+lTuiiuCdJVffoSkwY6AnBjJlEMdvEPAz1pR7oD5KmW0aH2h.3z

WD.HJd1YekDvWzhAdqRhS1rtrcc3NE3zb6HYjb0yuUt.3CM7PB..AE1OELjI

1+de1CvDeAxmfsM3EQwSibNBAQuMkebQYw9xNJXDh4.C+j.wYXKHqB3D42Rh

REsr3pTq56SneJwOFIDvbhkBw3hgd84dU2y+9c++.F.odkB

-----------end_max5_patcher-----------

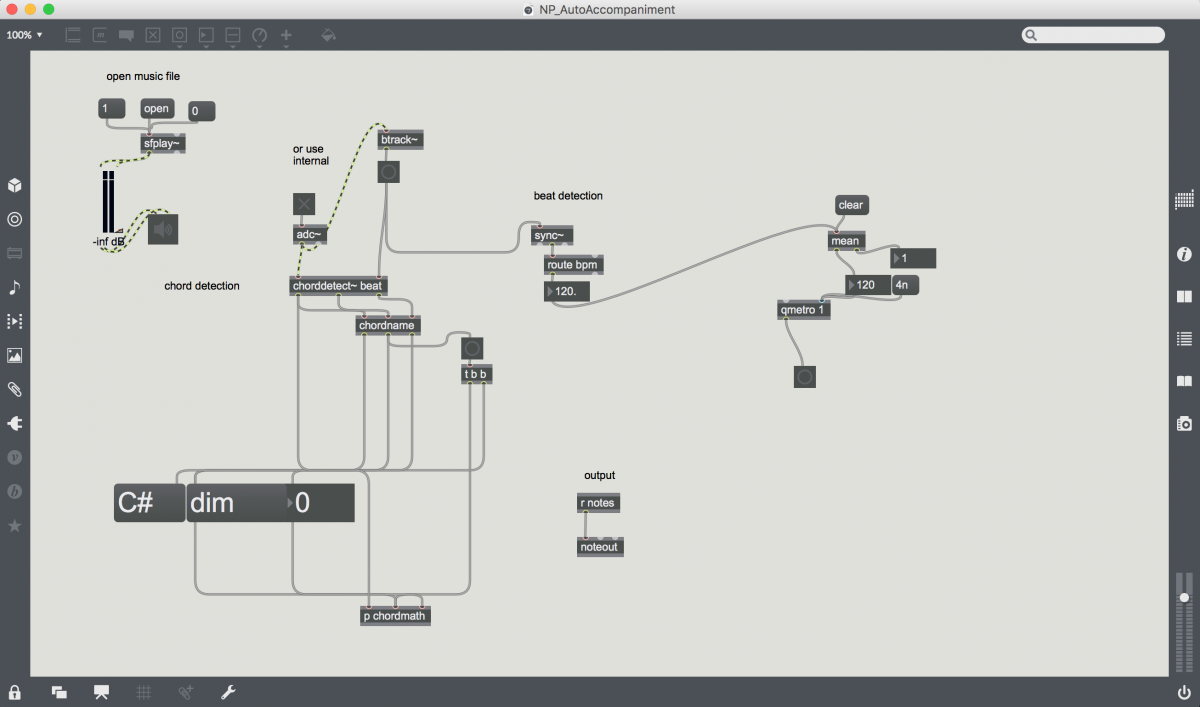

Auto-Accompaniment

Self Directed: 2HandedGrainThang

My goal for this project was to use the Leap Motion controller to create a rhythmic composition. I’d tried a few different kinds of drum sequencers with varied and less than musical results, and was on to trying to use it to ‘play drums in the air’ when I got the idea of using the Grabstrength and Pinchstrength measurements from the Leapmotion object to edit parameters.

I mapped the angular position of my hand to the playback position of a pinch of audio I’d loaded into Grainstretch~ and used Grabstrength to select a point to jump back to periodically. I could then pinch and drag vertically to lengthen or shorten that period.

I threw that on top of a rhythmic delay loop I’d built earlier in the semester and just jammed. The controls are still a little sketchier than I’d like. Future development will include different systems for monitoring data from pinch and grab strength and maybe more specific machine learning for gestural control. (Apologies for the glitchiness of the video; I couldn’t hear the output and my computer was running a little too hot.) https://gist.github.com/anonymous/8bbfc42c4f1f2dc5ec2c6e0205e1258d

Personal Research Project

Due: Wed, March 29

This shall be an experimental sound synthesis project that you execute independently. It may take the form of a live performance, an audio/video/audio-video recording that is presented in class, an installation that is set up in the Media Lab (or some nearby location), or a research presentation. You will have five minutes total in which to present so make sure you are ready to rock at the drop of a hat. If you choose to present research you will be expected to present a tight, compelling, informative, and insightful slideshow and discussion.