Writeup

[Find my full portfolio at izzystephen.com.]

In my work, I want to depict the degradation of memories over time despite the seeming permanence of technology. Out of Memory is intended to turn a series of technical failures into something meaningful and aesthetically pleasing.

Part of the human condition is to form emotional attachments to mortal beings and ephemeral situations, which compels us to avoid loss by any means possible. Our collective fear of loss begets distinctive features of the human psyche, such as the dread of dementia and an obsession with documentation. Now more than ever, photography is seen as a tool for ‘capturing’ memories in ‘high fidelity,’ ‘lossless’ formats. But unless we were to create a simulation the size of our universe, a virtual space will always be a compressed version of its real-life counterpart. The human brain transforms memories with every re-encoding and imbues them with the context of their recollection. Digital tools are no different: there is no perfect way to recover a memory. Having experimented with photogrammetry throughout my time in Experimental Capture, I realized that the method offers a fitting visual and conceptual representation of my ideas about memory.

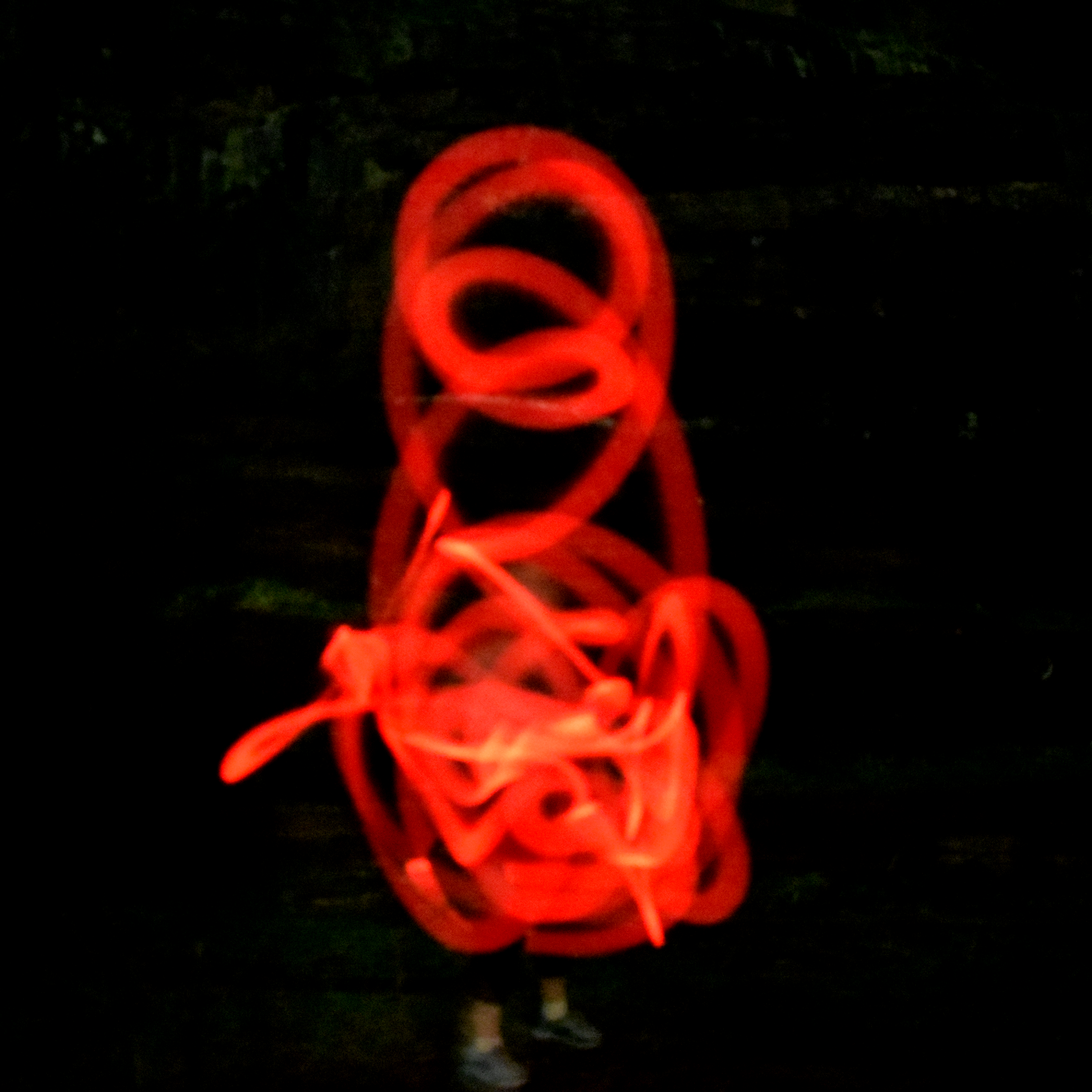

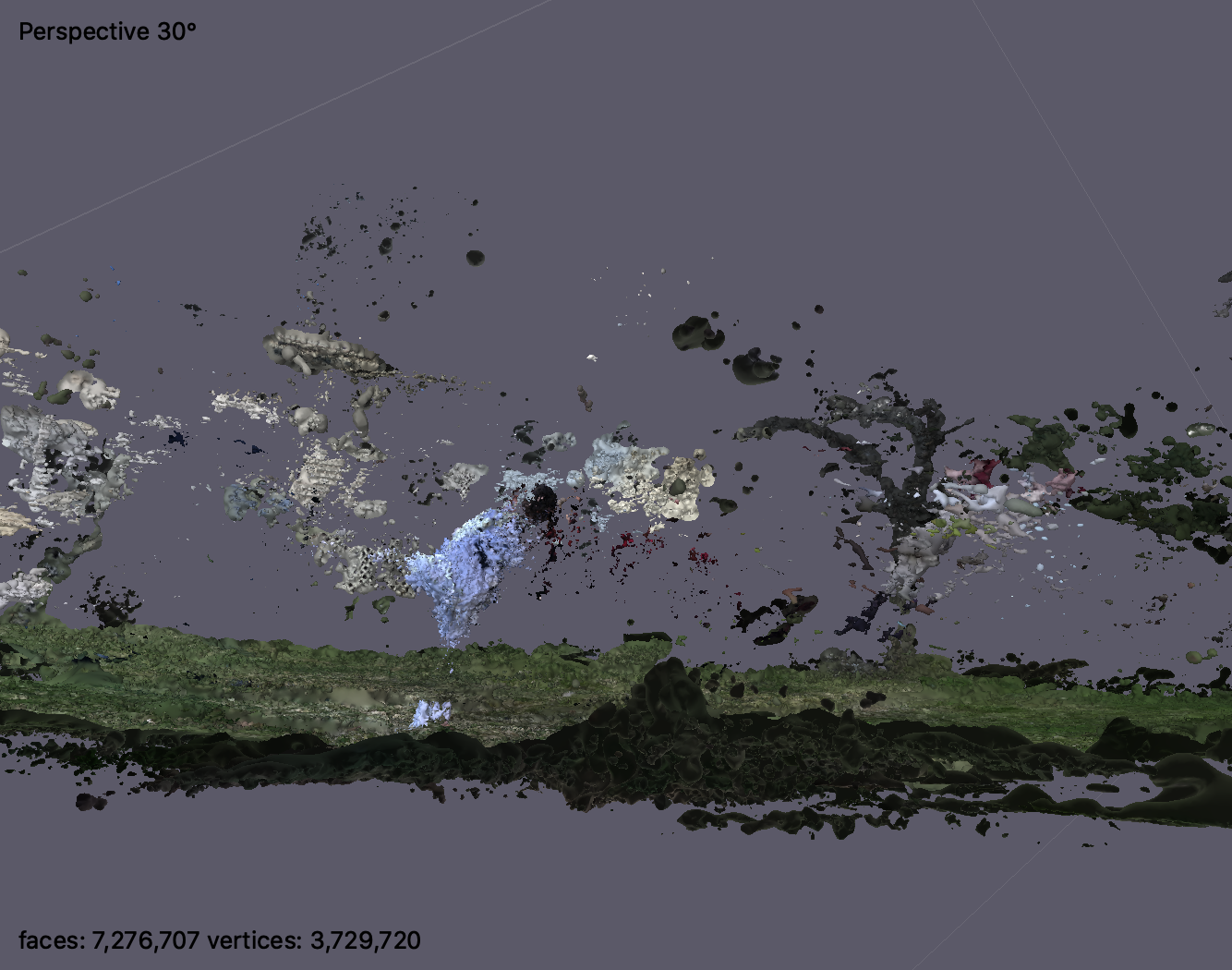

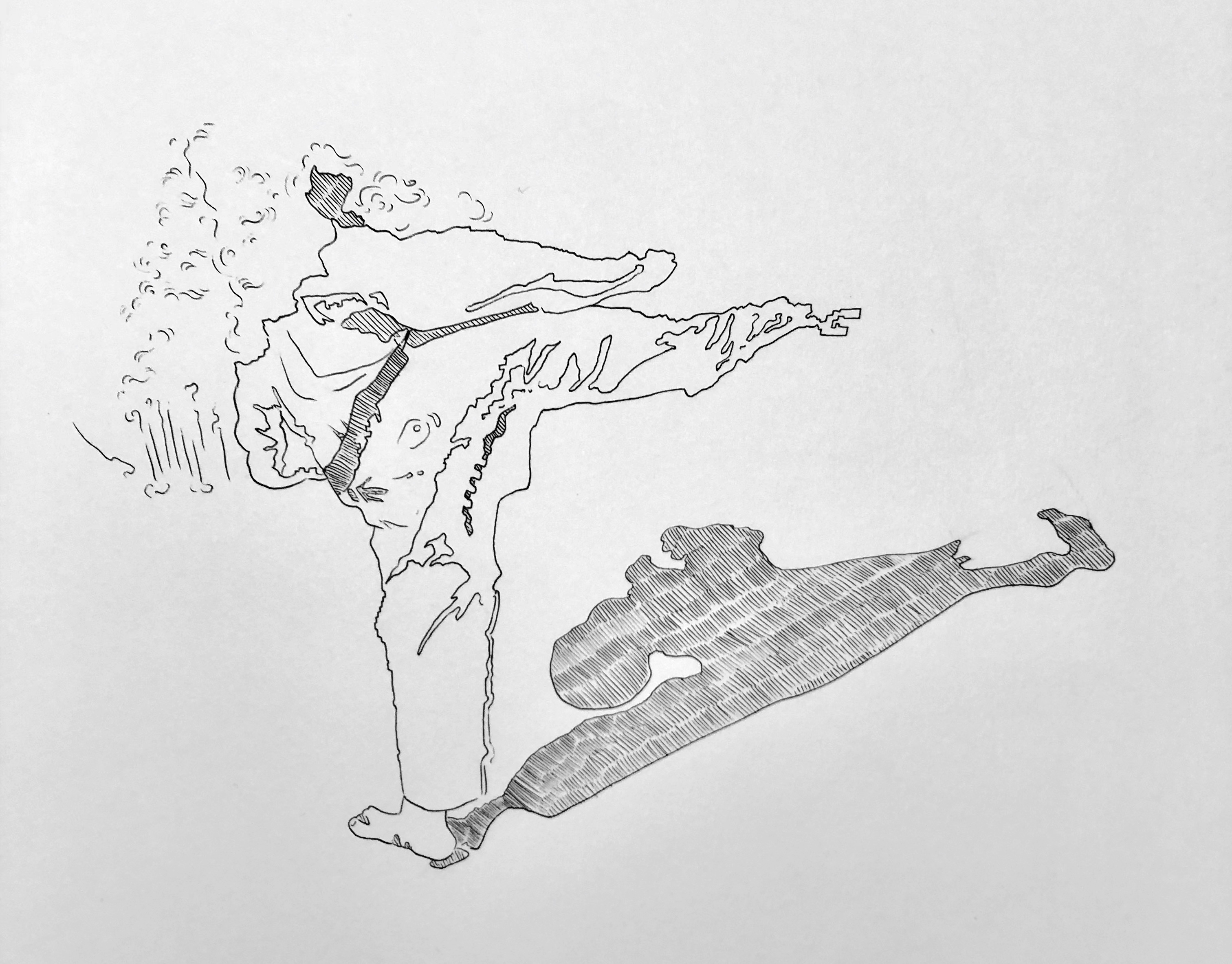

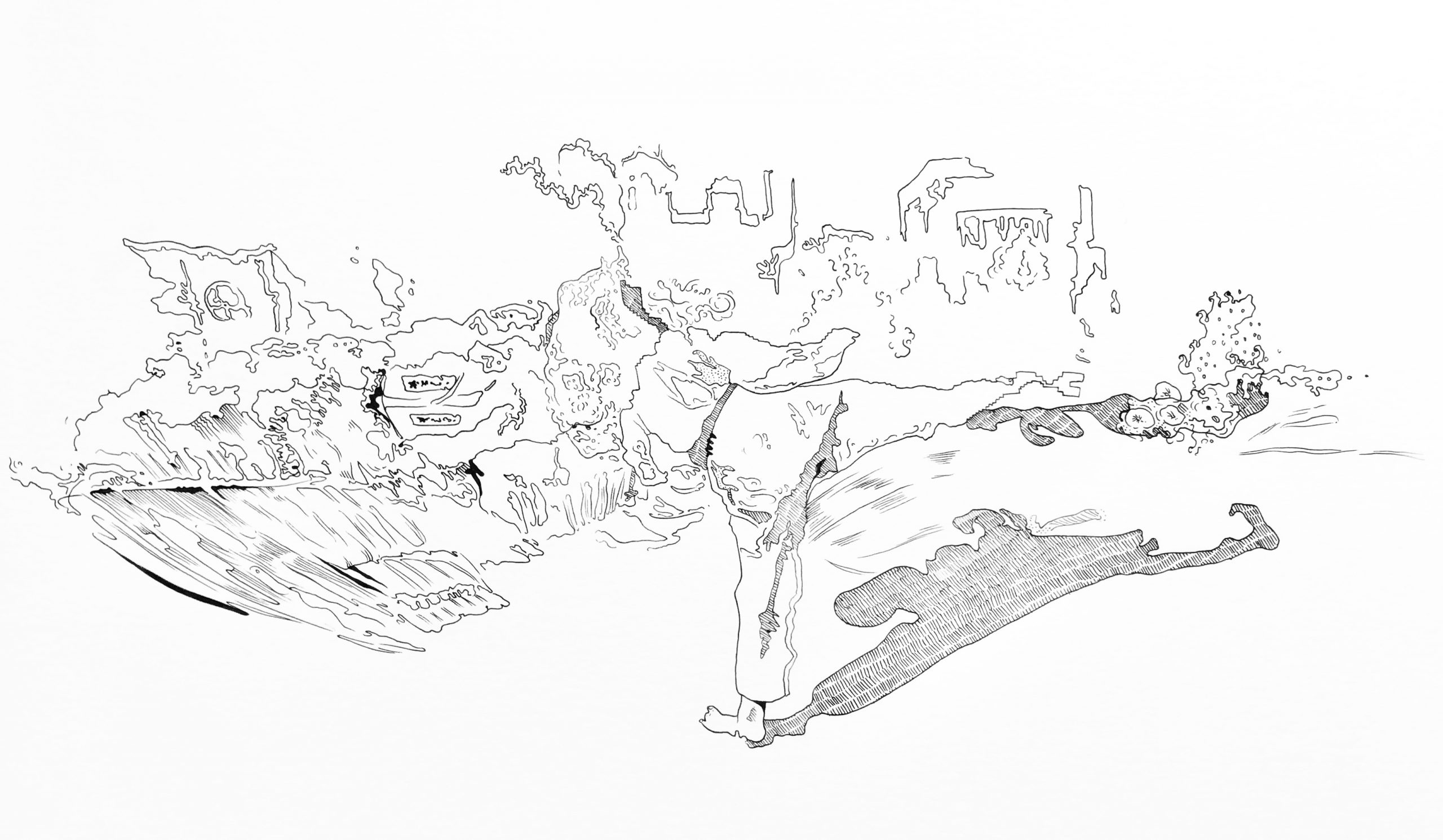

The three subjects I illustrated in this project are intended to represent three facets of emotional connection: the construct of the self (Self Portrait), relationships and environments (The Late Spring), and personal and family histories (Light room). The first is my attempt, back in February, to make a 3D model of myself using photogrammetry. Because of my imperfect technique, the model was full of aberrations and my head split into four. The second documents my attempt to model my housemate performing a Tae Kwon Do form in the backyard of our house in Vermont, a place I never would have stayed for so long if it weren’t for the pandemic. The third shows the favorite stuffed animals of my little brother, who died when I was 9, posed in what used to be his bedroom. The room has since been renewed by years of my other little brother’s life there and then neutralized by generic guest-room decor once he moved out, but the emotional presence remains. The room is full of bright, reflective surfaces that caused the model to glitch and shafts of light to take physical form.

Below are the original, uncolored pen drawings I made from the photogrammetry models. Below those are screenshots of the models themselves.

Lineart

Pen on paper

References

Photogrammetry screenshots from Agisoft Metashape