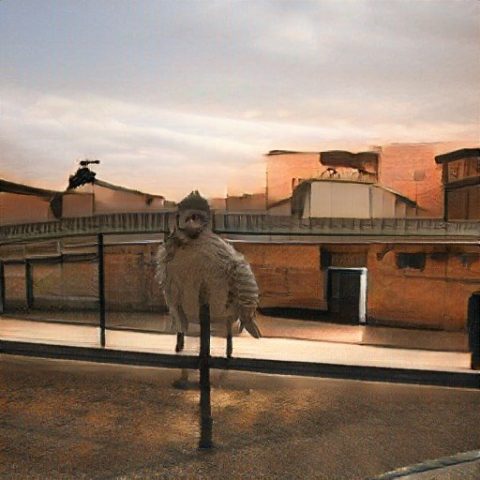

My project was mainly a learning experience aimed at learning the ins and outs of Unity. Because of this, I spent a lot of my time doing tutorials and getting used to the new interface and programming language (C#). I chose to import objects from a Blender scene to make my job a bit easier, but it didn’t end up helping much, because I had to spend a lot of time baking the objects’ textures and remapping their UVs. There were two main complex problems I set out to solve in my project. The first was to create a player character that moves coherently in relation to a third-person camera. The second was to create a shader system that renders only the parts of objects that lie within a transparent cube. The latter proved especially hard to figure out. I was able to implement both, which I was pretty satisfied with.

Here is a video that shows more clearly what the cube-clipping shader system can do.

Where to go from here:

I have a lot of ideas for where I could take my project further, and I plan on implementing at least some of them before the submission. Here are a few:

- make the camera movable with mouse control
- let the player ride the train
- create an above-ground environment the player can travel to
- implement better lighting
- improve materials (the trains especially)
- add other characters to the scene, perhaps with some simple crowd simulation that allows the characters to get on and off the train
- implement simple character animations