“More Like This, Please”

Much of today’s presentation focuses on the use of deep convolutional neural networks in generating imagery.

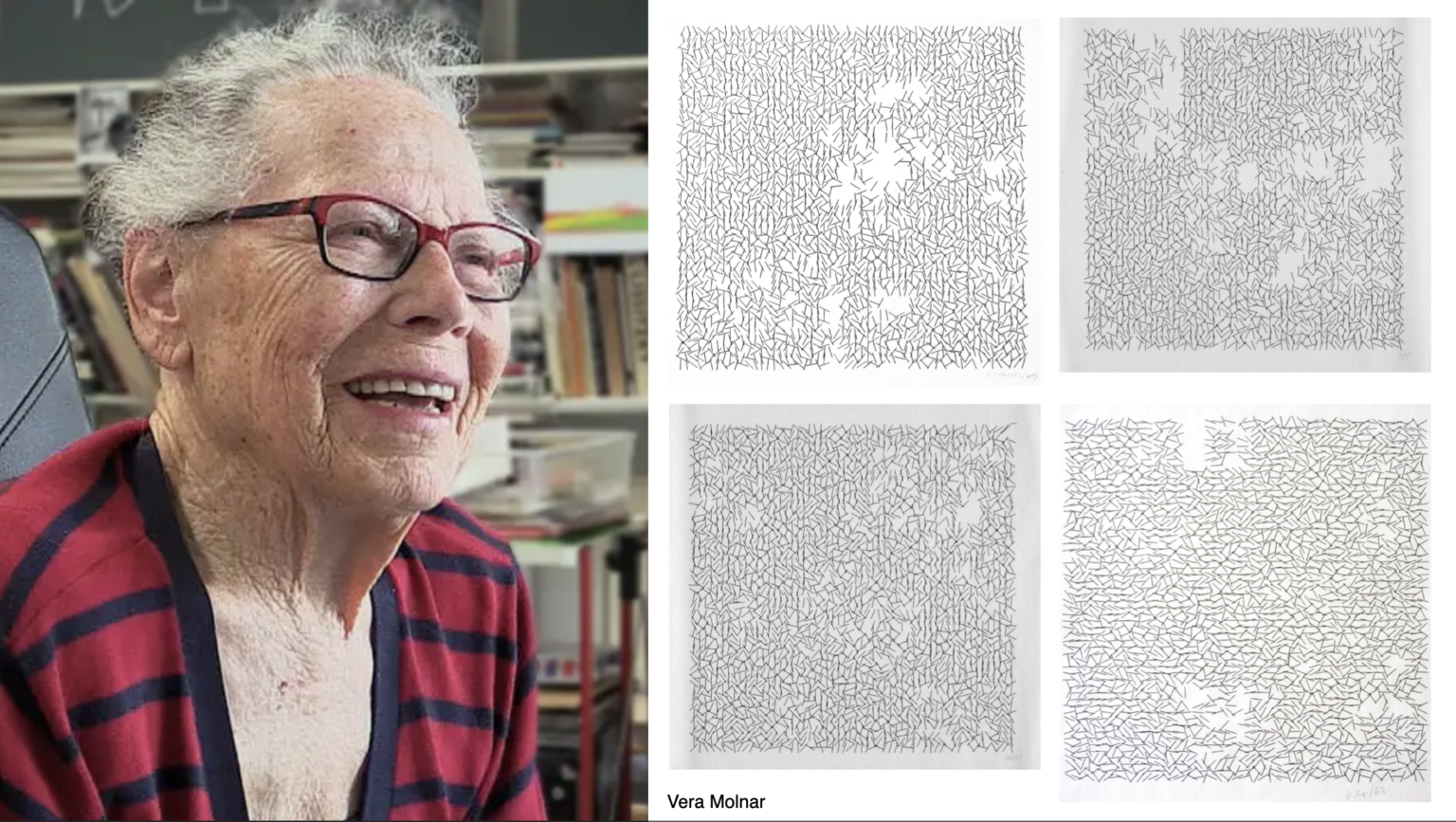

Recall that earlier in the semester we discussed computer-art pioneer Vera Molnár (born 1924, and still working at 98), a Hungarian-French artist who was one of the first dozen or so people to make art with a computer. Molnár described her interest in using the computer to create designs that could “surprise” her.

Consider this generative 1974 plotter artwork by Molnár, below. How did she create this artwork? We might suppose there was something like a double-for-loop to create the main grid; another iterative loop to create the interior squares; and some randomness that determined whether or not to draw these interior squares, and if so, some additional randomness to govern the extent to which the positions of their vertices would be randomized. We suppose that there were some variables, specified by the artist, that controlled the amount of randomness, the dimensions of the grid, the various probabilities, etc.
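To make that supposition concrete, here is a minimal Python sketch of such a program. It is a guess at the logic, not Molnár’s actual code: the function name `molnar_like_grid` and every parameter (grid size, number of interior squares, drawing probability, jitter amount) are assumptions chosen purely for illustration.

```python
import random

def molnar_like_grid(rows=5, cols=5, cell=100.0, n_inner=6,
                     draw_prob=0.8, jitter=6.0, seed=None):
    """Return a list of quadrilaterals, each a list of four (x, y) vertices.

    All parameter values are illustrative assumptions, not Molnár's own.
    """
    rng = random.Random(seed)
    quads = []
    for row in range(rows):                  # the double for-loop builds the grid
        for col in range(cols):
            cx = (col + 0.5) * cell          # center of this grid cell
            cy = (row + 0.5) * cell
            for k in range(1, n_inner + 1):  # nested interior squares
                if rng.random() > draw_prob: # randomly skip some squares
                    continue
                half = 0.45 * cell * k / n_inner
                corners = [(-half, -half), (half, -half),
                           (half, half), (-half, half)]
                # nudge every vertex by a small random offset
                quad = [(cx + dx + rng.uniform(-jitter, jitter),
                         cy + dy + rng.uniform(-jitter, jitter))
                        for dx, dy in corners]
                quads.append(quad)
    return quads

quads = molnar_like_grid(seed=1974)
print(len(quads), "squares generated")
```

Changing `draw_prob` or `jitter` changes the character of the whole drawing, and running it again with a different `seed` produces a new variation on the same theme.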

Molnár’s process is a great illustration of how generative computer artworks were created during their first half-century. The artist writes a program that renders a form. This form is mathematically governed, or parameterized, by variables that the artist specifies. Change the values of these variables, and the form changes. She might link these variables to randomness, as Molnár does, or perhaps to gestural inputs, or to a stream of data, so that the form visualizes that data. She has created an artwork (software) that makes artworks (prints). If she wants “More like this, please”, she just runs the software again.

Just as with ‘traditional’ generative art (e.g. Vera Molnár), artists using machine learning (ML) develop programs that generate an infinite variety of forms, and these forms are still characterized (or parameterized) by variables. What’s interesting about the use of ML in the arts is:

- That the values of these variables are no longer specified by the artist. Instead, the variables are now deduced indirectly from the training data that the artist provides. As Kyle McDonald has pointed out: machine learning is programming with examples, not instructions. Give the computer lots of examples, and ML algorithms figure out the rules by which a computer can generate “more like this, please”.

- The use of ML typically means that the artists’ new variables control perceptually higher-order properties. (The parameter space, or number of possible variables, may also be significantly larger.) The artist’s job becomes (in part) one of selecting, curating, or creating training sets.

Here’s Helena Sarin’s Leaves (2018). Sarin has trained a Generative Adversarial Network on images of leaves: specifically, photos of a few thousand leaves that she collected in her back yard.

GANs operate by establishing a battle between two different neural networks, a generator and a discriminator. As with feedback between counterfeiters and authorities, Sarin’s generator attempts to synthesize a leaf-like image; the discriminator then attempts to determine whether or not it is a real image of a leaf. Using evaluative feedback from the discriminator, the generator improves its fakes—eventually creating such good leaves that the discriminator can’t tell real from fake.
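The back-and-forth between the two networks can be sketched with a deliberately tiny toy. This is not Sarin’s network, and it does not handle images: the “real data” are just numbers centered at 4.0, the generator is a single linear function, the discriminator is a single logistic unit, and the gradients are written out by hand. All names and values here are assumptions for illustration. A discriminator this simple can only notice whether the fakes are, on average, too low or too high.

```python
import numpy as np

def sigmoid(s):
    return 1.0 / (1.0 + np.exp(-s))

def train_toy_gan(steps=20, batch=64, lr=0.05, seed=0):
    """Train a 1-D toy GAN; returns generator (a, b) and discriminator (w, c)."""
    rng = np.random.default_rng(seed)
    a, b = 1.0, 0.0      # generator: fake = a * noise + b
    w, c = 0.0, 0.0      # discriminator: D(x) = sigmoid(w * x + c)
    for _ in range(steps):
        real = rng.normal(4.0, 0.5, batch)   # stand-in for the real examples
        noise = rng.normal(0.0, 1.0, batch)
        fake = a * noise + b

        # Discriminator step: raise D(real), lower D(fake).
        d_real, d_fake = sigmoid(w * real + c), sigmoid(w * fake + c)
        grad_w = np.mean(-(1 - d_real) * real) + np.mean(d_fake * fake)
        grad_c = np.mean(-(1 - d_real)) + np.mean(d_fake)
        w -= lr * grad_w
        c -= lr * grad_c

        # Generator step: adjust a, b so the updated D rates the fakes as more real.
        d_fake = sigmoid(w * fake + c)
        grad_fake = -(1 - d_fake) * w        # d(-log D(fake)) / d(fake)
        a -= lr * np.mean(grad_fake * noise)
        b -= lr * np.mean(grad_fake)
    return a, b, w, c

a, b, w, c = train_toy_gan()
print(f"generator offset after 20 steps: {b:.3f} (real data is centered at 4.0)")
```

Even in this toy, the generator never sees the real data directly. It only learns from the discriminator’s verdicts, which is the essential trick of the method.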

Here is Robbie Barrat’s Neural Network Balenciaga (2018):

Where Helena Sarin has been training networks using collections of leaves, Robbie Barrat has trained them with a different “fall collection”: photos of haute couture from Balenciaga that he downloaded from the Internet. Barrat collected a large dataset of images from Balenciaga runway shows and catalogues, in order to generate outfits that are novel, but at the same time heavily inspired by Balenciaga’s recent fashion lines. Barrat has pointed out that his network lacks any contextual awareness of the non-visual functions of clothing (e.g. why people carry bags, why people prefer symmetrical outfits), and thus produces strange outfits that completely disregard these functions, such as a pair of pants with a wrap-around bag attached to the shin, and a multi-component asymmetrical coat with an enormous blue sleeve. Barrat’s project circulated on the Internet; soon he was approached by a fashion company, which used his designs as inspiration for real artifacts.

For some artists, GANs present a way of understanding and simulating genres of human creativity. A subtle point that is sometimes lost in the AI hype is that the goal of this work is not to “replace” human artists, but to create works in which these genres are made fresh through defamiliarization: “More almost like this, please, but with a strikingly unfamiliar new twist”. Eliciting and curating that twist is where the AI artist now puts their effort.

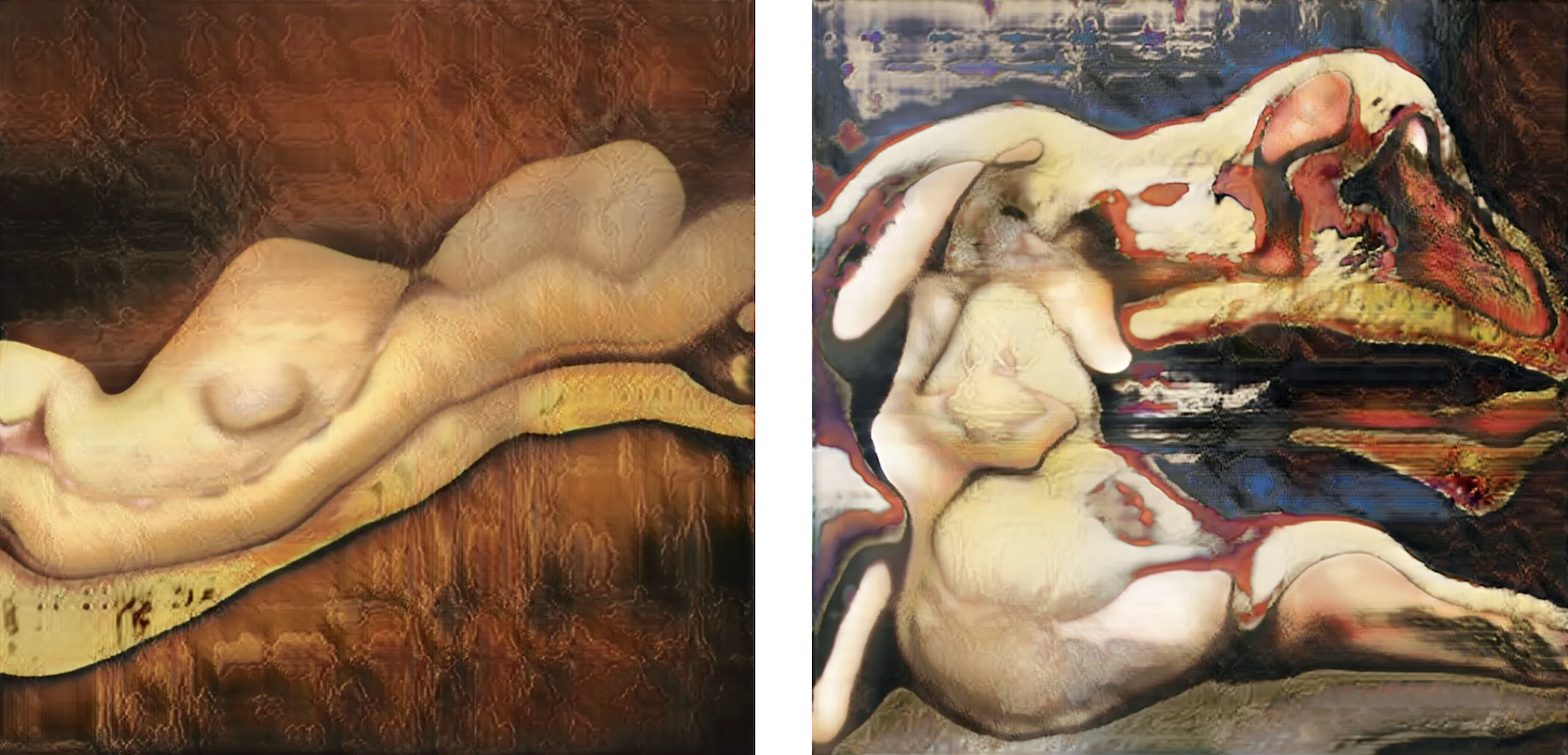

Here’s another set of examples (2018) by Robbie Barrat, who trained a GAN on a large collection of traditional oil paintings of nudes:

A master of this form is Berlin-based Sofia Crespo, an artist using GANs to generate biological imagery. One of her main focal points is the way organic life uses artificial mechanisms to simulate itself and evolve. Placing great effort into creating custom datasets of biological imagery, she has produced a remarkable body of organic images using GANs.

Note that it’s also possible to generate music with GANs. For example, here is Relentless Doppelganger by DADABOTS (CJ Carr and Zack Zukowski)—an infinite live stream of generated death metal:

Here’s artist-researcher Janelle Shane’s GANcats (2019):

Janelle Shane’s project makes clear that when training sets are too small, the synthesized results can show biases that reveal the limits of the data on which the network was trained. For example, above are results from a network that synthesizes ‘realistic’ cats. But many of the cat images in Shane’s training dataset were from memes. And some cat images contain people… but not enough of them for the network to learn to synthesize a person realistically. Janelle Shane points out that cats, in particular, are also highly variable. When the training sets are too small to capture that variability, other misinterpretations show up as well.

In his earlier video project Face Feedback III (2017), Mario Klingemann puts a generative adversarial network into feedback with itself, trying to force as much of the image as possible to look like a face. We observe the algorithm working over time to better resolve its previous results into faces.

An interesting response to GAN face synthesis is https://thisfootdoesnotexist.com/, by the Brooklyn artist collective, MSCHF. By texting a provided telephone number (currently not working), visitors to the site receive text messages containing images of synthetic feet produced by a GAN:

Here is a highly edited excerpt from the terrific essay which accompanies their project:

Foot pics are hot hot hot, and you love to see ‘em! At their base level they are pictures of feet as a prominent visual element. Feet are, by general scientific consensus, the most common non-sexual-body-part fetish. Produced as a niche fetishistic commodity, feet pics have all the perceived transgressive elements of more traditionally recognized pornography, but without relying on specific pornographic or explicit content. And therein lies their potential.

Foot pics are CHAMELEONIC BI-MODAL CONTENT. Because foot pics can operate in two discrete modes of content consumption simultaneously (i.e. they can be memes and nudes simultaneously, in the same public sphere), their perception depends entirely upon the viewer and the context in which the image appears. Thus the foot pic is both highly valuable and almost worthless at the same time – and this creates a highly intriguing supply & demand dynamic when creators/consumers fall on different ends of this valuation scale.

The foot pic specifically confounds the famous Supreme Court working definition of pornography – “[You] Know It When You See It.” Because the foot pic may be devoid of any mainstream pornographic signifiers it is both low barrier to entry and significantly safer to distribute. The production of the picture may, depending entirely upon the person to whom the foot belongs, be essentially valueless in the mind of the producer – and yet the resulting image strongly valued by the right consumer.

What Neural Networks See. (n.b. NSFW)

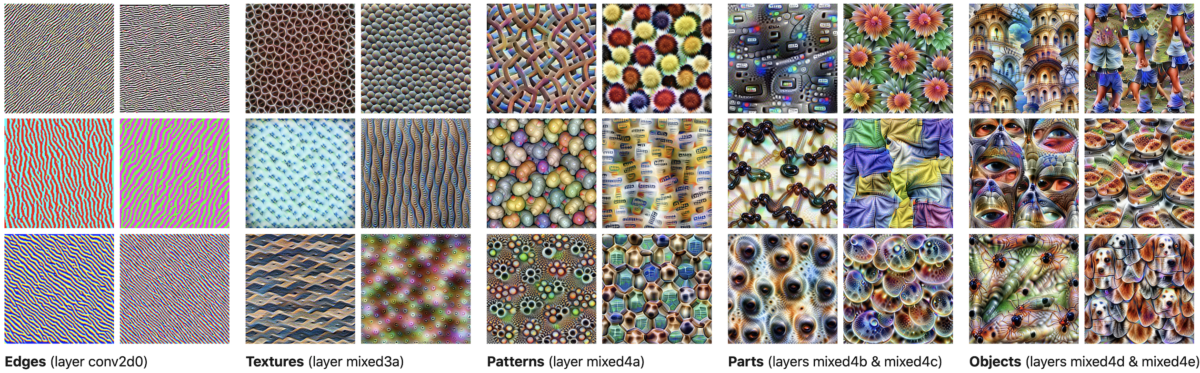

Neural networks build up understandings of images, beginning with simple visual phenomena (edges, spots), then textures, patterns, constituent parts (like wheels or noses), and objects (like cars or dogs). This is a great article to understand more: “Feature Visualization: How neural networks build up their understanding of images”, by Chris Olah, Alexander Mordvintsev, and Ludwig Schubert.

Here’s Jason Yosinski’s video, Understanding Neural Networks Through Deep Visualization:

Demo: Distill.pub Activation Atlas

“DeepDream”, by Alex Mordvintsev, is a kind of iterative feedback algorithm in which an architecture whose neurons detect specific things (say, dogs) is asked: what small changes would need to be made to this image, so that any part that already resembled a dog, looked even more like a dog? Then, make those changes….
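The feedback loop can be sketched in a few lines. This is only a toy under loud assumptions: the “detector” below is a random matrix `W` standing in for one layer of a real trained network, and the “image” is a flat list of 64 numbers. Real DeepDream works on the layers of a trained convolutional network and adds refinements that are not shown here. The core move is the same, though: compute which small pixel changes would increase the detector’s response, make those changes, and repeat.

```python
import numpy as np

rng = np.random.default_rng(0)
W = rng.normal(size=(32, 64))   # hypothetical detector layer: 32 neurons, 64-pixel image

def activation(image):
    """How strongly the toy detectors respond to the image."""
    return 0.5 * np.sum(np.maximum(W @ image, 0.0) ** 2)

def dream(image, steps=20, step_size=0.01):
    """Repeatedly nudge the image in the direction that excites the detectors."""
    image = image.copy()
    for _ in range(steps):
        # gradient of activation() with respect to the pixels
        grad = W.T @ np.maximum(W @ image, 0.0)
        # take a small, normalized step uphill
        image += step_size * grad / (np.abs(grad).mean() + 1e-8)
    return image

start = rng.normal(size=64)
dreamed = dream(start)
print(activation(start), "->", activation(dreamed))
```

Note that nothing about the network changes during this process. Only the image is edited, which is what distinguishes this from training.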

The next investigation is by Gabriel Goh, circa 2017, from Image Synthesis from Yahoo’s open_nsfw:

Detecting pornography is a major challenge for Internet hosts and providers. To help, Yahoo released an open-source classifier, open_nsfw, which rates images from 0 to 1. Gabriel Goh, a PhD student at UC Davis, wanted to understand this tool better. He started by using a neural network to synthesize some “natural-looking” images, shown in the bottom row, using white noise as a starting point. He then used a generative adversarial technique to maximally activate certain neurons of the Yahoo classifier: what he called “neural-net guided gradient descent”. Goh’s program basically asked, in many iterative steps, “how would this image need to change if it were to look slightly more like pornography?”

The result is a remarkable study in the abstract depiction of nudity. In short, Goh’s machine makes images that another machine believes are porno. These images are clearly not safe for work, but it’s difficult, or humorous perhaps, to say why. From the standpoint of conceptual art, this is really extraordinary.

Not only is it possible to synthesize images which maximally activate the porn-detecting neurons, it is also possible to generate images “whose activations span two networks”. Goh bred these images to be recognized as “beach” scenes by the places_CNN network … and then generated the bottom row, which activates detectors in both places_CNN and open_nsfw. Goh explains that these images fascinate him because “they are only seemingly innocent. The NSFW elements are all present, just hidden in plain sight. Once you see the true nature of these images, something clicks and it becomes impossible to unsee.”

Artist Tom White has been working in the inverse way, creating images which are designed to fool machine vision systems, in order to better understand how computers see. The next work is from his 2018 series, Synthetic Abstractions:

Tom White’s Synthetic Abstractions series consists of seemingly innocent images, generated from simple lines and shapes, which trick algorithms into classifying them as something else. Above is an arrangement of 10 lines which fools six widely-used classifiers into perceiving it as a hammerhead shark, or an iron. Below this is a silkscreen print, “Mustard Dream”, which White explains is flagged as “Explicit Nudity” by Amazon Web Services, “Racy” by Google SafeSearch, and “NSFW” by Yahoo. White’s work is “art by AI, for AI”, which helps us see the world through the eyes of a machine. He writes: “My artwork investigates the Algorithmic Gaze: how machines see, know, and articulate the world. As machine perception becomes more pervasive in our daily lives, the world as seen by computers becomes our dominant reality.” This print literally cannot be shown on the internet; taking a selfie in his gallery may get you banned from Instagram.

Style Transfer, Pix2Pix, & Related Methods

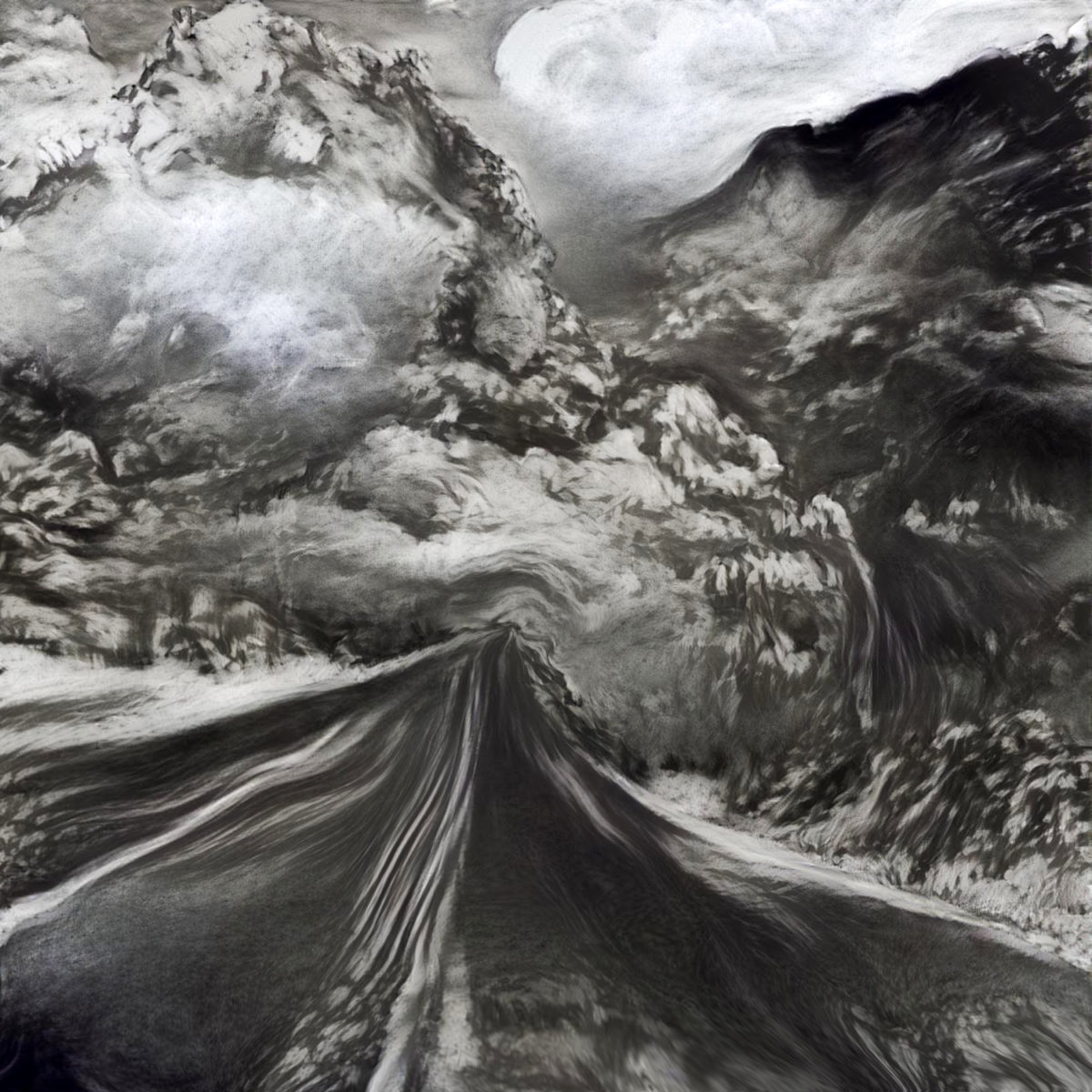

(Image: Alex Mordvintsev, 2019)

You may already be aware of “neural style transfer”, developed by a German computing lab in 2015. Neural style transfer is an optimization technique that takes two images, a content image and a style reference image (such as an artwork by a famous painter), and blends them together so the output image looks like the content image, but “painted” in the style of the style reference image. It is like saying, “I want more like (the details of) this, please, but resembling (the overall structure of) that.”

This is implemented by optimizing the output image to match the content statistics of the content image and the style statistics of the style reference image.
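To give a feel for what “content statistics” and “style statistics” can mean, here is a minimal sketch of the two quantities being balanced. It assumes the common formulation in which style is summarized by a Gram matrix (the correlations between feature channels, ignoring where in the image they occur). In practice the feature maps come from layers of a pretrained image network. Here random arrays stand in for them, and the function names and weights are illustrative assumptions.

```python
import numpy as np

def gram_matrix(features):
    """Channel-by-channel correlations of a (channels, height, width) feature map."""
    c, h, w = features.shape
    flat = features.reshape(c, h * w)
    return flat @ flat.T / (h * w)

def content_loss(out_feat, content_feat):
    """Penalize differences in what appears where."""
    return np.mean((out_feat - content_feat) ** 2)

def style_loss(out_feat, style_feat):
    """Penalize differences in texture statistics, regardless of position."""
    return np.mean((gram_matrix(out_feat) - gram_matrix(style_feat)) ** 2)

def total_loss(out_feat, content_feat, style_feat,
               content_weight=1.0, style_weight=100.0):
    return (content_weight * content_loss(out_feat, content_feat)
            + style_weight * style_loss(out_feat, style_feat))

rng = np.random.default_rng(0)
feat = rng.normal(size=(8, 16, 16))
# Scramble the spatial positions while keeping every channel's values.
shuffled = feat.reshape(8, -1)[:, rng.permutation(256)].reshape(8, 16, 16)
print(style_loss(feat, shuffled))    # close to zero: same textures, different layout
print(content_loss(feat, shuffled))  # large: the spatial arrangement has changed
```

The scrambling experiment shows why the two terms pull in different directions: the style term is blind to layout, while the content term cares about little else. The optimizer adjusts the output image until `total_loss` is as small as it can make it.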

Various new media artists are now using style transfer code, and they’re not using it to make more Starry Night clones. Here’s a project by artist Anne Spalter, who has processed a photo of a highway with Style Transfer from a charcoal drawing:

Some particularly stunning work has been made by French new-media artist Luluixix, who uses custom textures for style-transferred video. Originally a special effects designer, she is now active in NFT marketplaces:

More lovely work in the realm of style transfer is done by Dr. Nettrice R. Gaskins, a digital artist and professor at Lesley University. Her recent works use a combination of traditional generative techniques and neural algorithms to explore what she terms “techno-vernacular creativity”.

Style transfer has also been used by artists in the context of interactive installations. Memo Akten’s Learning to See (2017) uses style transfer techniques to reinterpret imagery on a table from an overhead webcam:

A related interactive project is the whimsical Fingerplay (2018) by Mario Klingemann, which uses a model trained on images of portrait paintings:

Fingerplay (Take 1) pic.twitter.com/oyys84Al0e

— Mario Klingemann (@quasimondo) April 7, 2018

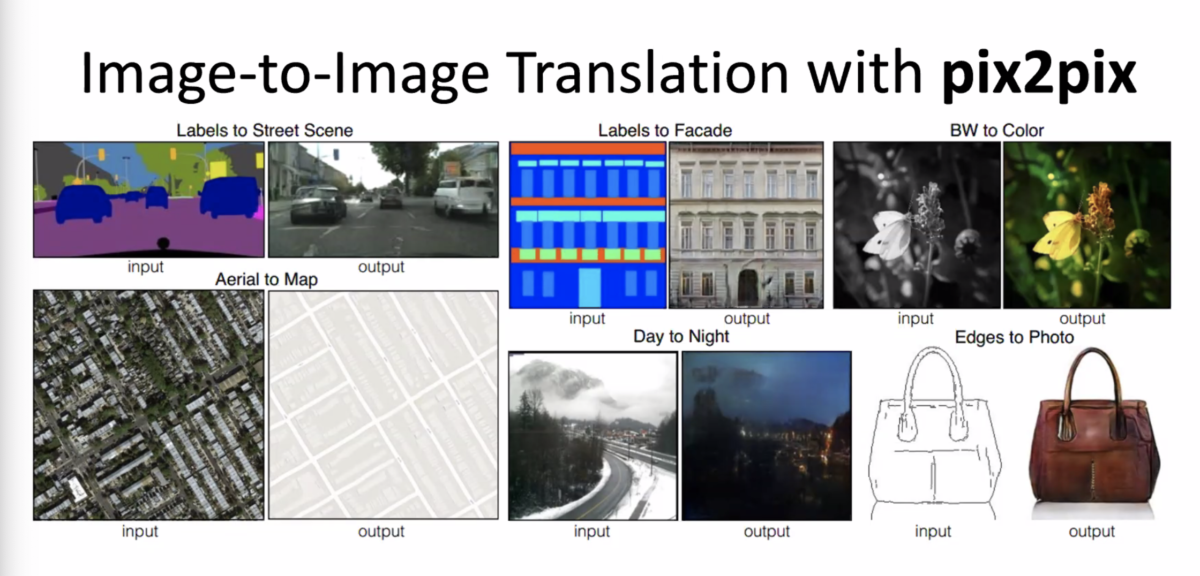

Conceptually related to style transfer is the Pix2Pix algorithm by Isola et al. In this approach, the artist does not specify the rules; instead, she specifies pairs of inputs and outputs, and allows the network to learn the rules that characterize the transformation, whatever those rules may be. For example, a network might study the relationship between:

- color and grayscale versions of an image

- sharp and blurry versions of an image

- day and night versions of a scene

- satellite-photos and cartographic maps of terrain

- labeled versions and unlabeled versions of a photo

And then—remarkably—these networks can run these rules backwards: They can realistically colorize black-and-white images, or produce sharp, high-resolution images from low-resolution ones. Where they need to invent information to do this, they do so using inferences derived from thousands or millions of real examples.

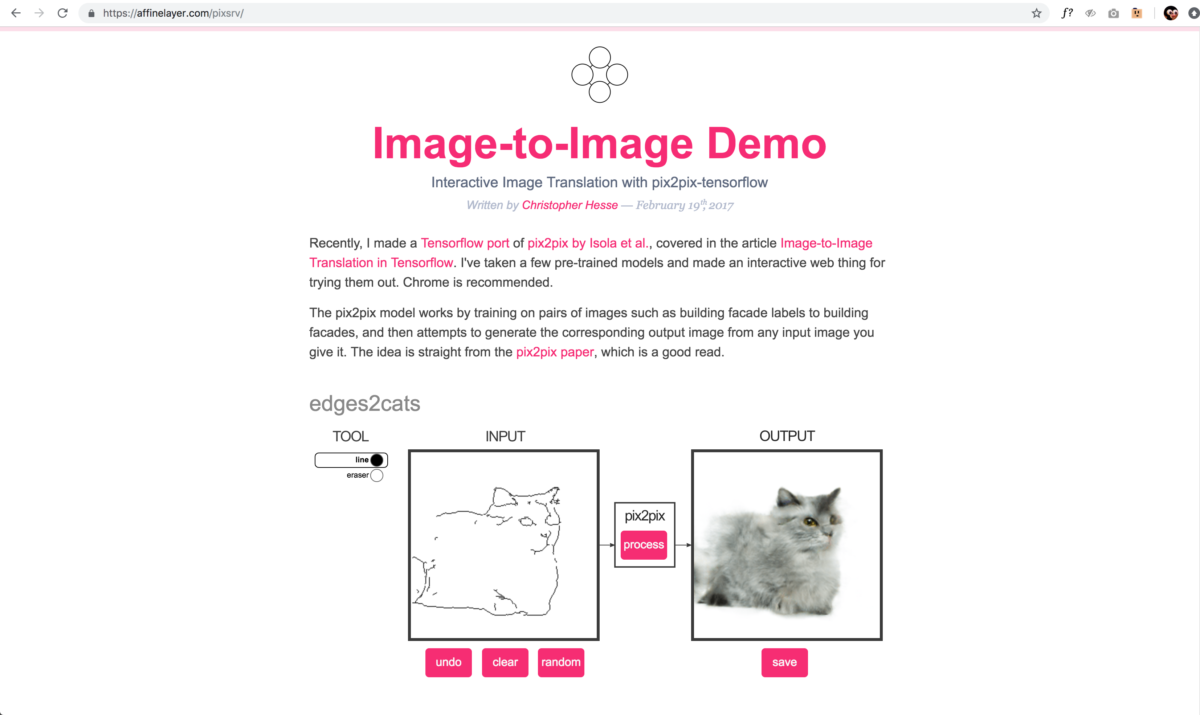

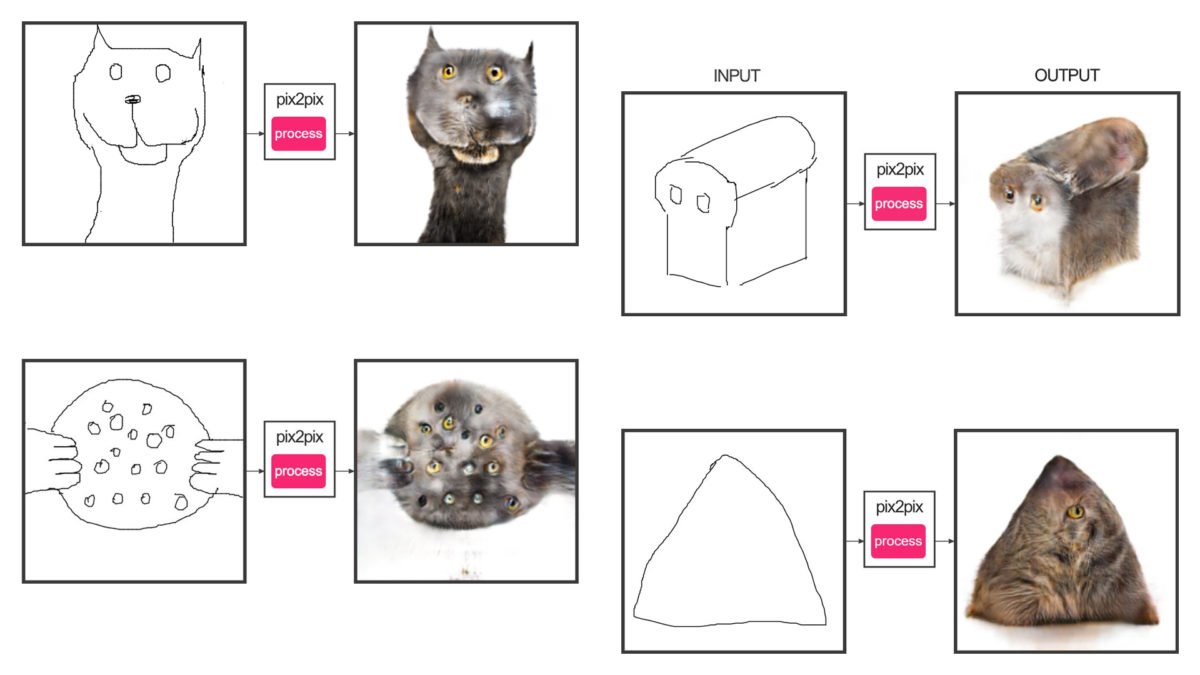

I’d like to show you a good example of this, and something fun you can experiment with yourself at home. This is a program called Edges2Cats by Christopher Hesse, which we will be using in our exercises. In this project, Hesse took a large number of images of cats. He ran these through an edge-detector, which is a very standard image processing operation, to produce images of their outlines. He trained a network to understand the relationship between these image pairs. And then he created an interaction where you can run this relationship backwards.
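The data-preparation step described here is easy to sketch. The code below is an illustration under stated assumptions, not Hesse’s pipeline: it uses a very basic gradient-magnitude edge detector (the specific detector he used is not specified here), the names `edge_map` and `make_training_pair` are hypothetical, and a tiny synthetic array stands in for a cat photo.

```python
import numpy as np

def edge_map(gray, threshold=0.25):
    """Mark pixels where brightness changes sharply."""
    # np.gradient returns the change along rows (gy) and along columns (gx)
    gy, gx = np.gradient(gray.astype(float))
    return (np.hypot(gx, gy) > threshold).astype(np.uint8)

def make_training_pair(photo_gray):
    """Return (input, target): the network learns to map the edge image to the photo."""
    return edge_map(photo_gray), photo_gray

# A stand-in "photo": dark left half, bright right half.
photo = np.zeros((8, 8))
photo[:, 4:] = 1.0
edges, target = make_training_pair(photo)
print(edges)
```

Notice that the pairs cost nothing to produce, since the computer derives each outline automatically from its photo. The hard direction, from outline back to photo, is the one the network is trained to learn.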

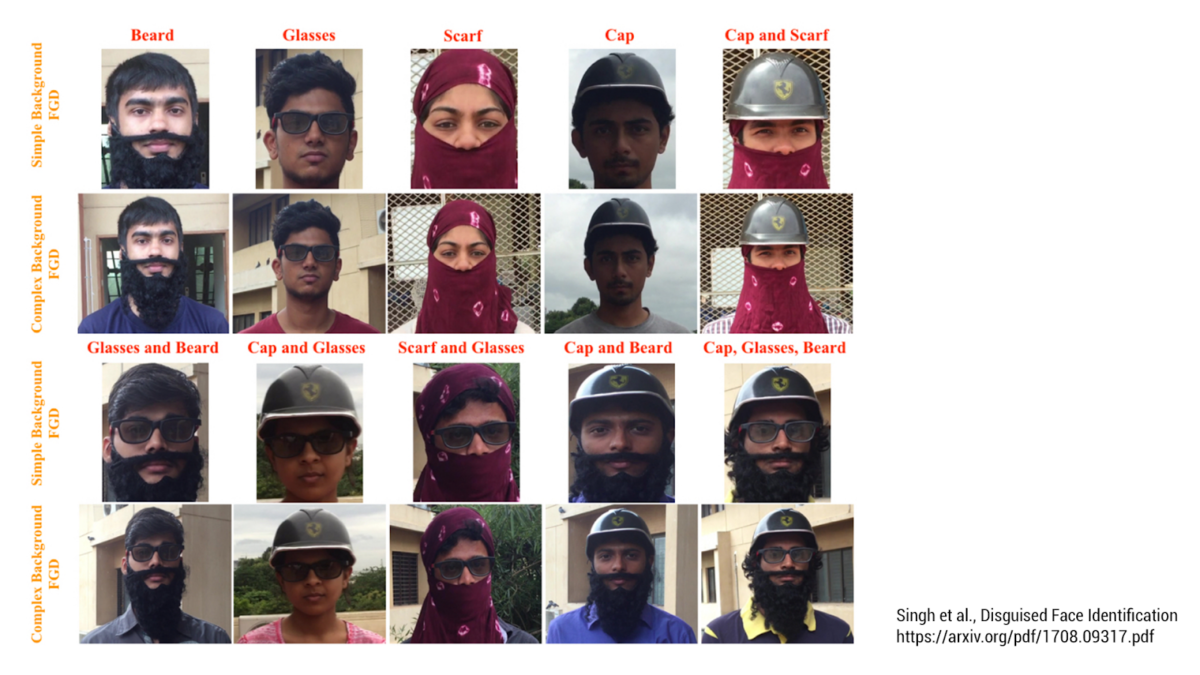

It’s worth pointing out that the evil twin of Edges2Cats is a project like the one above, which is aimed at occluded or disguised face recognition. These researchers have trained their network on pairs of images (your face, and your face with a disguise) in the hope of running that network backwards. See someone with a mask, and guess who it is…

Newer variations of style transfer, such as CycleGAN, allow a network to be trained with unpaired photographs, such as a collection of horse images, and a collection of zebra images.

Latent Space Navigation

When a GAN is trained, it produces a “latent space”: a high-dimensional mathematical representation (often hundreds or thousands of dimensions) of how a given subject varies. Those dimensions correspond to the modes of variability in that dataset, some of which we have conventional names for. For example, for faces, these dimensions might encode visually salient continuums such as:

- looking left … looking right

- facing up … facing down

- smiling … frowning

- young … old

- “male” … “female”

- mouth open … mouth closed

- dark-skinned … light-skinned

- no facial hair … long beard

Some artists have created work which is centered on moving through this latent space. Here is a 2020 project by Mario Klingemann, in which he has linked some variables extracted from music analysis (such as amplitude), to various parameters in the StyleGAN2 latent space. Every frame in the video visualizes a different point in the latent space. Note that Klingemann has made artistic choices about these mappings, and it is possible to see uncanny or monstrous faces at extreme coordinates in this space: (play at slow speed)
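A mapping of this general kind can be sketched as follows. This is not Klingemann’s code: the straight-line interpolation, the choice of two endpoint vectors named `z_quiet` and `z_loud`, and the latent size of 512 are all assumptions for illustration, and the trained generator that would turn each vector into a picture is not included.

```python
import numpy as np

def lerp(z_a, z_b, t):
    """Point a fraction t of the way from latent vector z_a to z_b."""
    return (1.0 - t) * z_a + t * z_b

def amplitude_to_latents(amplitudes, z_quiet, z_loud):
    """Map each audio amplitude (0..1) to one latent vector, one per video frame."""
    t = np.clip(np.asarray(amplitudes, dtype=float), 0.0, 1.0)
    return np.stack([lerp(z_quiet, z_loud, ti) for ti in t])

rng = np.random.default_rng(0)
z_quiet = rng.normal(size=512)   # 512 is an assumed latent size
z_loud = rng.normal(size=512)
frames = amplitude_to_latents([0.0, 0.3, 1.0, 0.6], z_quiet, z_loud)
print(frames.shape)  # (4, 512)
# Each row would then be handed to a trained generator to render one frame.
```

The artistic decisions live in choices like these: which points in the space count as “quiet” and “loud”, which audio feature drives the motion, and how far toward the extremes the path is allowed to travel.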

It’s also possible to navigate these latent spaces interactively. Here’s Xoromancy (2019), an interactive installation by CMU students Aman Tiwari (Art) and Gray Crawford (Design), shown at the Frame Gallery:

The Xoromancy installation uses a LEAP sensor (which understands the structure of the visitors’ hands); the installation maps variables from the user’s hands to the latent space of the BigGAN network.

In a similar vein, here’s Anna Ridler’s Mosaic Virus (2019, video). Ridler’s real-time video installation shows a tulip blooming, whose appearance is governed by the trading price of Bitcoin. Ridler writes that “getting an AI to ‘imagine’ or ‘dream’ tulips echoes 17th century Dutch still life flower paintings which, despite their realism, are ‘botanical impossibilities’”.

We will watch 6 minutes of this presentation by Anna Ridler (0:48 – 7:22), where she discusses how GANs work; her tulip project; and some of the extremely problematic ways in which the images in datasets used to train GANs have been labeled.