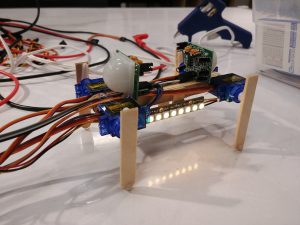

The concept I set out to convey with my project was how distractions and apathy prevent you from achieving what you want or need.

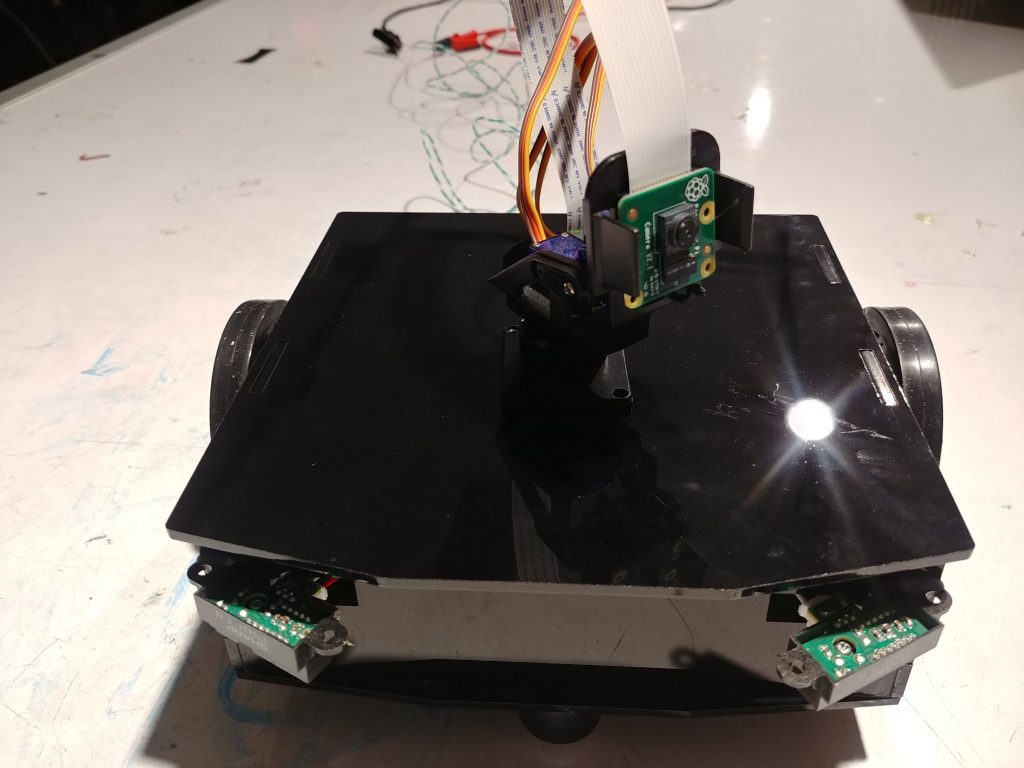

I set about doing this by creating a robot that meanders towards various targets while you interfere and distract it along the way. In the end it shows some overly interesting behavior, but for the most part it works.
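To give a rough idea of the "meander towards a target, unless distracted" behavior, here is a minimal simulation sketch. It is not the code running on the bot: the function names (`steer`, `step`, `simulate`), the `apathy` wobble parameter, and the 0.5 turning gain are all assumptions made up for illustration.

```python
import math
import random


def steer(bot_xy, heading, target_xy, distraction_xy=None, apathy=0.3, rng=random):
    """Return a new heading (radians) for one control step.

    Hypothetical model: a distraction, when present, replaces the target
    as the goal, and `apathy` adds a random wobble so the bot meanders.
    """
    goal = distraction_xy if distraction_xy is not None else target_xy
    desired = math.atan2(goal[1] - bot_xy[1], goal[0] - bot_xy[0])
    # Wrap the heading error into [-pi, pi] so the bot turns the short way.
    error = math.atan2(math.sin(desired - heading), math.cos(desired - heading))
    wobble = rng.uniform(-apathy, apathy)
    # Turn halfway towards the goal each step (assumed gain of 0.5).
    return heading + 0.5 * error + wobble


def step(bot_xy, heading, speed=1.0):
    """Move the bot one step forward along its current heading."""
    return (bot_xy[0] + speed * math.cos(heading),
            bot_xy[1] + speed * math.sin(heading))


def simulate(target_xy, steps, distraction_xy=None, apathy=0.3, seed=0):
    """Run the bot from the origin for `steps` steps and return its position."""
    rng = random.Random(seed)
    pos, heading = (0.0, 0.0), 0.0
    for _ in range(steps):
        heading = steer(pos, heading, target_xy, distraction_xy, apathy, rng)
        pos = step(pos, heading)
    return pos
```

Calling `simulate((10, 0), 20)` gives a wandering path that drifts towards the target, while passing `distraction_xy=(0, 10)` pulls the bot off course for as long as the distraction is present.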

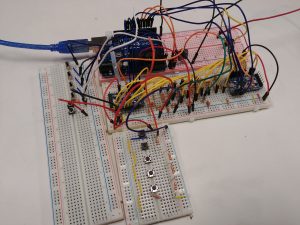

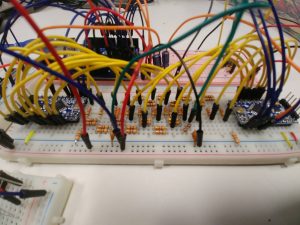

The bot was controlled by a Raspberry Pi using OpenCV, and each target had an Arduino Pro Mini with a NeoPixel and an IR range sensor.

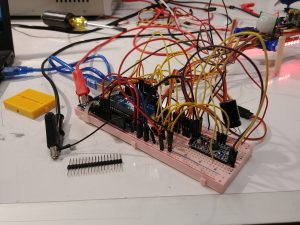

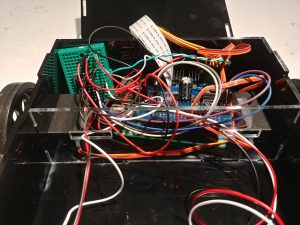

While it mostly worked, there were still a couple of issues that I ran into. The internals of the bot are a mess, with most of the space allocated for a battery. I'm still having power regulation issues with that battery, so the bot may remain tethered to a power supply.