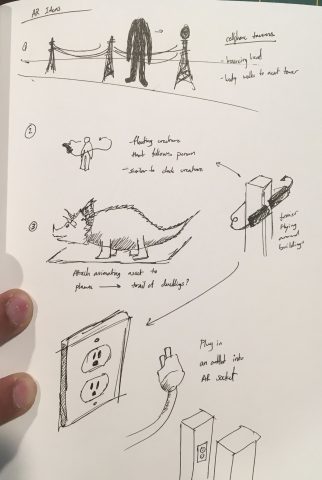

Augmented Creatures

I ended up using Blender for the entirety of this assignment instead of Unity. It is not really AR, since it does not run in real time. Instead, I used tracking within Blender to follow certain image targets in a video. Then, I rendered the animation I wanted and superimposed it over the original video. I think Unity probably would have been easier than what I did. However, I still learned a lot about FBX files (the main reason I did not use Unity is that I could not properly export an FBX of the creature asset I made).

Process

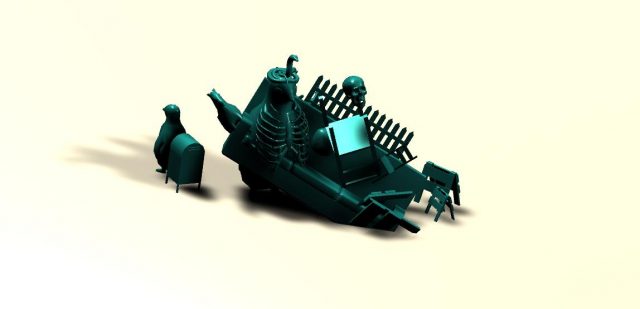

Creating the creature: I made one generic creature in Blender out of a series of pentagonal tori. I animated them the way I wanted, and the style is inspired by my Creature Clock for deliverable03.
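To make the "pentagonal torus" idea concrete, here is a minimal sketch in plain Python (not my actual Blender setup) that generates the vertex positions of a torus whose ring has only five segments. I am assuming "pentagonal" means five segments around the main ring; the radii and the tube resolution are made-up values. In Blender itself, the closest equivalent would be lowering the segment counts on the torus primitive.

```python
import math

def torus_vertices(major_radius=1.0, minor_radius=0.25,
                   major_segments=5, minor_segments=12):
    """Return (x, y, z) vertices of a torus sampled on a grid of angles.

    major_segments=5 gives the ring a pentagon outline (assumed meaning
    of "pentagonal"); the radii are illustrative values only.
    """
    verts = []
    for i in range(major_segments):
        theta = 2 * math.pi * i / major_segments  # angle around the ring
        for j in range(minor_segments):
            phi = 2 * math.pi * j / minor_segments  # angle around the tube
            # Distance of this point from the torus's central axis.
            r = major_radius + minor_radius * math.cos(phi)
            verts.append((r * math.cos(theta),
                          r * math.sin(theta),
                          minor_radius * math.sin(phi)))
    return verts

ring = torus_vertices()
print(len(ring))
```

Stacking several of these rings with different radii and rotations is roughly how a creature like this could be assembled.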

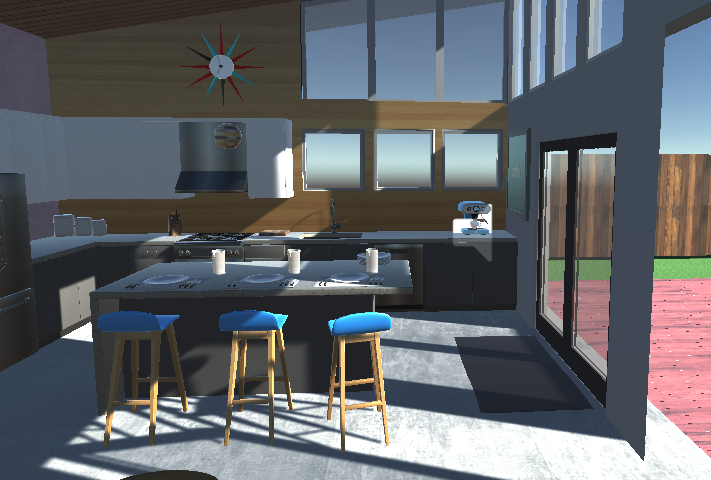

Tracking the video: I prerecorded this rather mundane scene of my family’s dining table (honestly, we never eat here though). Using the Movie Clip Editor within Blender, I detected features and tracked them throughout the clip. Then, I used the trackers to set up a plane and a scene so that I could place my assets on that plane.

Arranging the scene: I then created multiple copies of the original asset. Here, you can see the camera movement is calculated based on the previously mentioned tracking.

Time to render: Because I wanted the shadows of the objects to be realistic, I used the Cycles renderer. Unfortunately, these measly 372 frames took close to 12 hours to render. Each frame here is actually a PNG (i.e., it has a transparent background).
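For a sense of scale, here is a quick back-of-the-envelope calculation of the per-frame cost, using only the two numbers above (372 frames, roughly 12 hours). The function names are just for illustration, and the estimate assumes every frame costs about the same, which is not strictly true.

```python
def seconds_per_frame(total_hours, frames):
    """Average render time per frame, in seconds."""
    return total_hours * 3600 / frames

def estimated_hours(frames, sec_per_frame):
    """Rough total render time for a clip of the given length."""
    return frames * sec_per_frame / 3600

spf = seconds_per_frame(12, 372)
print(round(spf, 1))  # about 116 seconds, i.e. nearly two minutes per frame
```

At that rate, even a short clip adds up quickly, which is worth knowing before hitting render.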

Putting the two parts together: This was honestly a tad disappointing. After 12 hours of waiting, seeing that black border was not a fun time. I still think it looks cool, and there is no need to worry because cropping is a thing.
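The reason transparent PNGs matter here is that each rendered pixel can be blended over the matching video pixel. Below is a minimal sketch of the standard "alpha over" blend for a single pixel, assuming straight (non-premultiplied) alpha and color values between 0 and 1. It is only meant to show the idea, not the exact settings of whatever compositing tool is used.

```python
def alpha_over(fg_rgba, bg_rgb):
    """Blend one foreground RGBA pixel over one background RGB pixel.

    Assumes straight alpha and channel values in the range 0.0 to 1.0.
    """
    r, g, b, a = fg_rgba
    return tuple(f * a + bgc * (1.0 - a) for f, bgc in zip((r, g, b), bg_rgb))

table = (0.2, 0.4, 0.6)                                  # a made-up video pixel
untouched = alpha_over((1.0, 0.0, 0.0, 0.0), table)      # fully transparent render
covered = alpha_over((1.0, 0.0, 0.0, 1.0), table)        # fully opaque creature
shadow = alpha_over((0.0, 0.0, 0.0, 0.5), (1.0, 1.0, 1.0))  # half-opaque black
```

A shadow is just a dark, partly transparent pixel, so it darkens the table underneath instead of replacing it.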

Cropping: The final crop introduced some compression, so the quality is slightly reduced, but I am super satisfied with how the shadows appear on the table and the wall.
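As a toy illustration of what cropping away a black border involves, here is a sketch that finds the bounding box of the non-black pixels in a tiny grayscale frame and cuts the frame down to it. The frame data and function names are hypothetical, and I did my actual crop by hand rather than with code like this.

```python
def content_bbox(pixels, threshold=0):
    """Return (left, top, right, bottom) of pixels brighter than threshold.

    right and bottom are exclusive; returns None if the frame is all border.
    """
    rows = [y for y, row in enumerate(pixels) if any(v > threshold for v in row)]
    cols = [x for x in range(len(pixels[0]))
            if any(row[x] > threshold for row in pixels)]
    if not rows or not cols:
        return None
    return (cols[0], rows[0], cols[-1] + 1, rows[-1] + 1)

def crop(pixels, box):
    """Cut the frame down to the given box."""
    left, top, right, bottom = box
    return [row[left:right] for row in pixels[top:bottom]]

frame = [
    [0, 0, 0, 0],
    [0, 5, 7, 0],
    [0, 3, 9, 0],
    [0, 0, 0, 0],
]
box = content_bbox(frame)
cropped = crop(frame, box)
```

One caveat: truly black content at the edge of the picture would be mistaken for border, so a fixed crop chosen by eye is often the safer option.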