var renderer;

var starSize = 10;

var stars = [];

var launchSpeed = 40;

var starDuration = 1000;

var starLimit = 10;

var starGrowth = .5;

var starMoveFade = 20;

var persX = 30;

var persY = 30;

var timerMultiplier = 0.2;

var particles = [];

var particleSize = 5;

var particleSpeed = 2;

var limitZ = -7500;

var starWiggle = 4;

var bgStars = [];

var bgStarNumber = 100;

function setup() {

renderer = createCanvas(windowWidth, windowHeight, WEBGL);

for (var i = 0; i < bgStarNumber; i++) {

bgStars.push(makeBackgroundStars());

bgStars[i].x = random(-15000, 16000);

}

frameRate(60); //frameRate is a p5.js function; assigning to it would overwrite it

}

function draw() {

background(0);

fill(255);

translate(-width / 2, -height / 2, 0);

noCursor();

ellipse(mouseX, mouseY, 25);

//drawing background stars

if (bgStars.length < bgStarNumber) {

bgStars.push(makeBackgroundStars());

}

for (var i = 0; i < bgStars.length; i++) {

bgStars[i].draw();

bgStars[i].move();

if (bgStars[i].x < -15000) {

bgStars.splice(i,1);

i--; //stay on this index, since the next star has shifted into it

}

}

//drawing particles

if (mouseIsPressed) {

particles.push(makeParticle());

for (var i = 0; i < particles.length; i++) {

particles[i].draw();

particles[i].move();

particles[i].remove();

if (particles[i].opq == 0){

particles.splice(i,1);

i--; //stay on this index, since the next particle has shifted into it

}

}

}

//drawing stars

for (var i = 0; i < stars.length; i++) {

stars[i].draw();

stars[i].count();

stars[i].move();

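//remove stars that have expired or drifted off-screen

if (stars[i].timer > starDuration || stars[i].x < -15000) {

stars.splice(i,1);

i--; //stay on this index, since the next star has shifted into it

}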

}

//removeStars

if (stars.length > starLimit) {

stars.shift();

}

}

//when the mouse is pressed, it starts creating a star

function mousePressed() {

stars.push(makeStar());

}

//as soon as the stars are done being created, they are not the newest

function mouseReleased() {

for (var j = 0; j < stars.length; j++) {

stars[j].newest = false;

}

particles = [];

}

function makeStar() {

var star = {x: mouseX, y: mouseY, z: 0,

size: starSize, newest: true,

perspectiveX: width/2 - mouseX,

perspectiveY: height/2 - mouseY,

// evolve: evolveStar,

count: countTimeStar, timer: 0,

flashX: mouseX, flashY: mouseY, flashZ: -1000,

draw: drawStar, move: moveStar, sizeDown: 20}

return star;

}

//drawing the star

//controls the growth of the star

function drawStar() {

// translate(this.x, this.y, this.z);

push();

translate(this.x, this.y, this.z);

strokeWeight(0);

stroke(200);

//radiance shown by multiple translucent spheres

for (var i = 0; i < 3; i++) {

fill(255, 255, 255, 40);

sphere(this.size * (1 + (i / 2)), 15, 15);

}

pop();

if (mouseIsPressed && this.newest == true) {

this.size += starGrowth;

}

//draw the release flash only once this star has been released

if (this.newest == false) {

strokeWeight(0);

push();

translate(this.flashX, this.flashY, 0);

fill(255, 255, 255, 100 - 5 * (this.timer));

//in JavaScript ^ is bitwise XOR, not exponentiation, so square the timer explicitly

sphere(this.size * (this.timer * this.timer) / 10);

// sphere((this.size * ((this.timer)/10))/2 * 10);

pop();

}

}

//controls the movement of the star

function moveStar() {

//if it has been released,

if (this.newest == false) {

if (this.z <= limitZ) {

this.y += starWiggle * sin(millis()/300);

this.x -= starMoveFade;

while (this.sizeDown > 1) {

this.size = this.size * .99;

this.sizeDown -= 1;

}

}

else if (this.z > limitZ) {

this.z -= launchSpeed * this.timer * .25;

this.x -= this.perspectiveX / persX * (this.timer * timerMultiplier);

this.y -= this.perspectiveY / persY * (this.timer * timerMultiplier);

}

}

}

function countTimeStar() { //works!

if (frameCount % 2 == 0 && this.newest == false) {

this.timer++;

}

}

//creating particles that will gather towards the touch point

function makeParticle() {

var particle = {OriginX: mouseX, OriginY: mouseY, OriginZ: 0,

awayX: mouseX - random(-500, 500),

awayY: mouseY - random(-500, 500),

awayZ: 0 + random(-200, 200), opq: 20,

move: moveParticle, count: countTimeParticle,

draw: drawParticle, remove: removeParticle,

timer: 0}

return particle;

}

function drawParticle() {

push();

translate(this.awayX, this.awayY, this.awayZ);

fill(255, 255, 255, this.opq);

sphere(particleSize);

sphere(particleSize / 2);

pop();

}

function removeParticle() {

if (dist(this.OriginX, this.OriginY, this.OriginZ,

this.awayX, this.awayY, this.awayZ) <= 50) {

this.opq = 0;

}

}

function moveParticle() {

var distX = this.OriginX - this.awayX;

var distY = this.OriginY - this.awayY;

var distZ = this.OriginZ - this.awayZ;

this.awayX += distX * .03;

this.awayY += distY * .03;

this.awayZ += distZ * .03;

this.opq += 50 / dist(this.OriginX, this.OriginY, this.OriginZ,

this.awayX, this.awayY, this.awayZ);

}

function countTimeParticle() {

if (frameCount % 2 == 0 && this.newest == false) {

this.timer++;

}

}

function makeBackgroundStars() {

var bgStar = {x: 14000, y: random(-5000, 5000), z: limitZ,

move: moveBackgroundStars, draw: drawBackgroundStars,

size: random(20, 60), fluctuate: random(400, 800)}

return bgStar;

}

function moveBackgroundStars() {

this.x -= starMoveFade;

this.y += 2 * sin(millis() / this.fluctuate);

}

function drawBackgroundStars() {

push();

translate(this.x, this.y, this.z);

noStroke();

sphere(this.size);

fill(255, 255, 255, 30 * (sin(millis() / this.fluctuate) / 2));

sphere(2 * this.size);

pop();

}
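
Removing items from an array while looping forward over it can skip the element that slides into the freed slot unless the index is adjusted. Here is a minimal sketch, in isolation, of one common way to make such removal safe: walk the array from the end. The helper name `removeWhere` and the `demo` array are hypothetical and not part of the sketch; only the -15000 off-screen threshold comes from it.

```javascript
// Hypothetical helper: remove every item for which shouldRemove(item) is true.
function removeWhere(items, shouldRemove) {
  // Walk from the end so that splice() never shifts an unvisited element.
  for (var i = items.length - 1; i >= 0; i--) {
    if (shouldRemove(items[i])) {
      items.splice(i, 1);
    }
  }
  return items;
}

// Example data (made up) using the sketch's off-screen threshold of -15000.
var demo = [{ x: -20000 }, { x: -16000 }, { x: 100 }];
removeWhere(demo, function (s) { return s.x < -15000; });
console.log(demo.length); // 1
```

Note that walking backward would also reverse the order in which items are visited, which may matter if drawing happens in the same loop.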

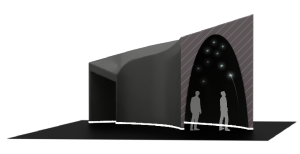

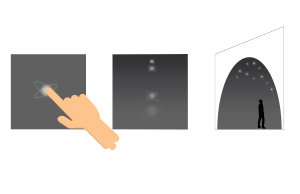

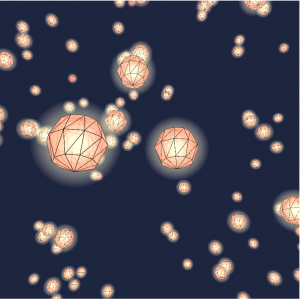

I created an interactive star generator as a simulation for the design project “Tesla’s Sunlit Night”. The concept is that people entering this brand pop-up shop will be able to interact with the inner walls the same way this visualization lets the audience interact with the screen. The only major difference is that clicks would be replaced by touches.

It is meant to emphasize, through experience, what Tesla’s solar energy products can do. The pop-up is called “Sunlit Night” because the experience of creating stars on the dark ceiling, which represents the night sky, will be powered by solar energy.

To interact, just click anywhere on the screen. The longer you hold, the larger the star grows. I wanted to push the growth of the stars further and make each one differ in appearance, but that was too computationally expensive to realize.
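
As a rough illustration of the hold-to-grow behavior, the sketch below estimates a star's radius from how long the mouse is held. The constants `starSize` and `starGrowth` are copied from the sketch; the helper `sizeAfterHold` is hypothetical, and it assumes the sketch actually runs at the given frame rate, which a heavy scene may not manage.

```javascript
// Constants copied from the sketch.
var starSize = 10;     // radius of a newly created star
var starGrowth = 0.5;  // radius added per frame while the mouse is held

// Hypothetical helper: approximate radius after holding for `seconds`,
// assuming a steady `fps` frames per second.
function sizeAfterHold(seconds, fps) {
  var framesHeld = Math.round(seconds * fps);
  return starSize + starGrowth * framesHeld;
}

console.log(sizeAfterHold(2, 60)); // 70
```

Under these assumptions, a two-second hold at 60 frames per second yields a star seven times its starting radius.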

![[OLD FALL 2019] 15-104 • Introduction to Computing for Creative Practice](../../wp-content/uploads/2020/08/stop-banner.png)

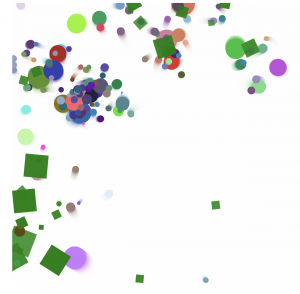

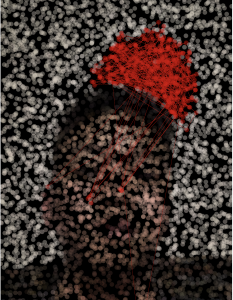

This is the original image

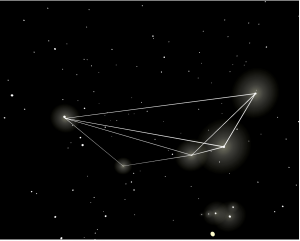

This is what has been generated by the algorithm