The Weather Thingy is a custom-built device that translates weather information into sound. I think this is a crucial piece to have in this day and age because it reminds us of the importance of the climate. I find it very innovative that the artist turned the climate into sound. While we can experience the climate through all of our senses, I feel that hearing is the sense through which we notice it least, so it is important to give the climate an audible form. There are also amplifier adjustments that let the user control how calm or violent the climate sounds. My guess is that the system randomizes and offsets certain qualities of the weather data before turning them into sound. By rescripting nature, the artist is able to convey how they perceive nature and share it with others.

Category: LookingOutwards-04

LO 04- Sound Art

This interactive sound art exhibition was created in 2016 by Anders Lind, a Swedish composer. The exhibition, called LINES, uses lines attached to the floors, walls, and ceiling as sensors, so that audience members can make music by moving their hands along them. No musical experience is required, so the project brings novelty and inspiration to people who are new to music and lets them play musical notes with their own bodies. I am inspired by the exhibition because LINES creates a unique kind of musical instrument out of computer interaction and programming.

Looking Outwards – 04

The project I chose to learn more about is called “Plant Sounds” by TomuTomu. The project looks at the sounds various plants emit by “translating the electrical micro-voltage fluctuations” generated by the plants. This signal is then coded and used to produce a soundscape. What is so interesting about this design is that the sounds come out so smooth and beautiful, and that a plant is able to create them. I think that to build this, they would have needed to read the plant’s electrical micro-voltage fluctuations as input values and write code that decides what type of sound output matches each value. I think the creator’s sensibilities lie in the sounds that are output: although the plant supplies a specific value, the creator decides which pitch is assigned to which value. I have also linked a similar project, one that experiments with adding voltage to plants and graphing out their molecular DNA changes in response to this signal. This is the TED talk by Greg Gage.
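To make my guess about the value-to-pitch mapping concrete, here is a minimal sketch in Python. It is not TomuTomu's actual code: the voltage range, the pentatonic scale, and the function names `voltage_to_midi_note` and `midi_note_to_hz` are all assumptions I made up for illustration.

```python
# Hypothetical sketch: map plant micro-voltage readings to notes in a scale.
# The voltage range and the choice of scale are assumptions for illustration.

PENTATONIC = [0, 2, 4, 7, 9]  # semitone offsets of a major pentatonic scale


def voltage_to_midi_note(microvolts, low=0.0, high=100.0, base_note=60, octaves=2):
    """Map a reading in [low, high] microvolts to a MIDI note number."""
    # Clamp the reading so out-of-range values still produce a valid note.
    clamped = min(max(microvolts, low), high)
    # Normalize to 0..1, then pick one of the available scale steps.
    fraction = (clamped - low) / (high - low)
    steps = len(PENTATONIC) * octaves
    index = min(int(fraction * steps), steps - 1)
    octave, degree = divmod(index, len(PENTATONIC))
    return base_note + 12 * octave + PENTATONIC[degree]


def midi_note_to_hz(note):
    """Convert a MIDI note number to a frequency (note 69 is A4 at 440 Hz)."""
    return 440.0 * 2 ** ((note - 69) / 12)


readings = [0.0, 35.0, 62.5, 100.0]  # made-up sample values
notes = [voltage_to_midi_note(v) for v in readings]
```

Restricting the output to a scale is one possible reason the result could sound smooth: every reading, however erratic, lands on a note that fits with the others. Choosing the scale and the range is where the creator's taste would come in.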

Link for “Plant Sounds”: //www.youtube.com/watch?v=VvWPT4VhKTk&ab_channel=TomuTomu

Link for TED talk: //www.youtube.com/watch?v=pvBlSFVmoaw&t=1s&ab_channel=TED

Looking Outwards: 04

I really like the album Silence by Monolake (Robert Henke). The premise of the album is to create sound, but also to make a statement about how we currently listen to sound. Instead of working with different levels of compression and mixing, the entire cast of instruments has been set to the maximum at all times. This is because the way we listen to music in the modern world has changed. Instead of rewarding music with dynamics or elaborate compositions, current laptop, phone, and radio speakers are tuned to sound best when the music is mixed as loud as possible. This means that when songs from more dynamic genres, such as classical music, are played, they sound nowhere near as good as they would live or through headphones. In the final form you can very clearly tell that every track is at its maximum, but the arrangements have been left bare, with few instruments playing at any one time, so that we can hear all of the sounds despite their volume.

LookingOutwards – 04

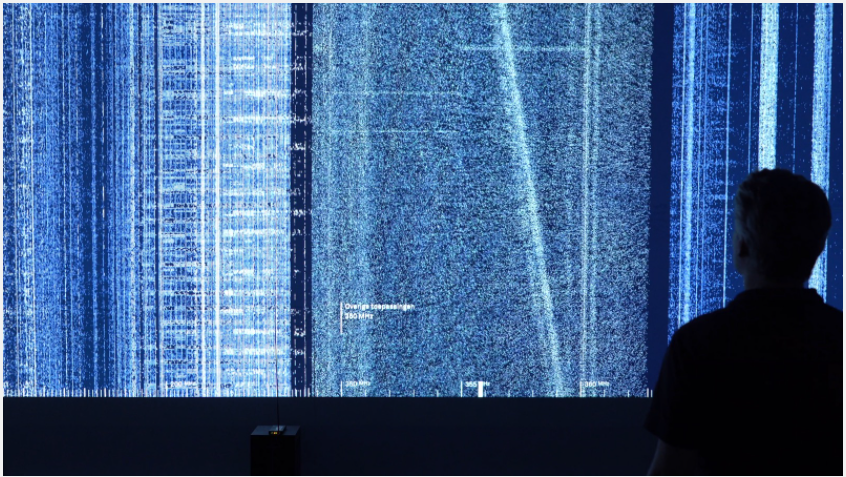

This project, Hertzian Landscapes, was created by Richard Vijgen. It reflects invisible sound in a scientific and visual way.

This project uses a very scientific method to create reliable interaction between humans and sound. The project setting is well thought out and effective.

Also, this project thoughtfully categorizes sound frequencies into familiar sub-groups, so that participants can relate the abstract waves to something everyday and understandable.

My guess is that the program collects spatial data at certain frequencies, passes it to the software, and translates it into graphic patterns. When people move, the spatial data changes at the same time, which changes the graphics.

I don’t think the aim of this project is to demonstrate the author’s artistic taste; instead, the project demonstrates a new way for people to interact with sound.

It is very innovative.

Hertzian Landscape #1 from R Vijgen on Vimeo.

Looking Outwards 04 – Sound Art

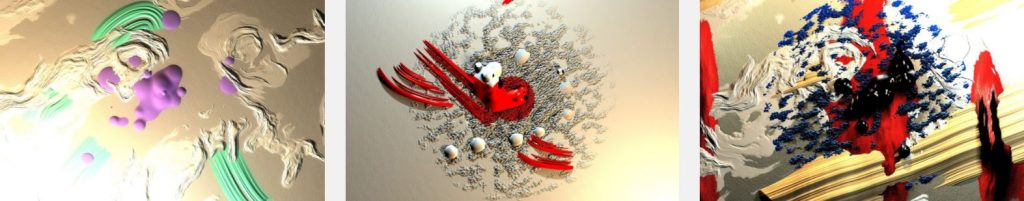

‘Expressions’ is a series of artworks created through a collaboration between Kynd and Yu Miyashita (sound). This project explored the physical qualities of thick, bold paint as rendered in digital space. This exploration allowed the artist to play with the shapes, light, and shadows made by the paint.

To build it, Kynd combined 2D and 3D graphics to create real-time renderings that had depth along with an interplay of light and shadow. This method had many advantages. It was easier to create intricate shapes and details without the need to manage geometries. Kynd was able to use many 2D image-processing techniques, including deformation and blurring. It was also very “fast and lightweight” on the CPU. The project consisted of two kinds of graphical elements: autonomous elements and reactive elements. The tools used for ‘Expressions’ included TouchDesigner, openFrameworks, and WebGL. The music used in the video shaped different scenes that, in sequence, almost told a story.

The correlation between the 2D/3D graphics and the music was able to express the mood and emotion of each scene. Kynd has always been inspired by digital paint. As an art student with an interest in Expressionism and Neo-Expressionism, Kynd played around with many oils and other substances that were able to achieve unique characteristics and expressions. Even today, it constantly amazes me how intertwined technology and art have become over time.

Looking Outwards 04: Sound Art

Christine Sun Kim’s Elevator Pitch is an interactive art installation that celebrates the Deaf community of New Orleans and its vital role in a city that’s world-renowned as the birthplace of jazz music. Kim, who is Deaf, created the piece to reference her childhood memories of shouting with her Deaf friends in elevators in order to feel the vibrations and echoes of their voices in the confined space.

Participants can press buttons in the elevator that feature the voices of thirteen different people from New Orleans’ Deaf community. The elevator is a thoughtful structure that challenges the idea of “awkward silence” in elevators and highlights how ableism can permeate even the most innocuous spaces.

Looking Outwards 4

Weather Thingy, Adrien Kaeser, 2018

The Weather Thingy is a custom-made sound controller that uses climate events to control the settings of a musical instrument. The piece has two parts: a weather station mounted on a microphone tripod, and a sound controller connected to the weather station. The station senses wind (with an anemometer), rain, and brightness. It turns this data into MIDI data, which can be understood by instruments.

Kaeser wanted to make the instrument play live based on the current climate, and have the weather affect the melodies. For example, if the weather station measures rain, the chorus of the melody will change. If the weather station measures wind, the pan of the music will change.

The project used hardware including environmental sensors, weather meters, a SparkFun MIDI Shield, rotary encoder knobs, an Arduino Mega, and an Arduino Leonardo. Software used in its creation included C++, the MIDI protocol, and Arduino.
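To get a feel for how a weather reading could become MIDI data, here is a rough sketch in Python. This is not Kaeser's firmware (which runs on Arduino): the sensor ranges, the function names, and the choice to send wind as pan and rain as chorus level via Control Change messages are my own assumptions. The controller numbers 10 (pan) and 93 (chorus send level) are the standard MIDI assignments.

```python
# Hypothetical sketch: turn weather readings into MIDI Control Change messages.
# Sensor ranges and the sensor-to-controller mapping are assumptions.

CC_PAN = 10      # standard MIDI controller number for pan
CC_CHORUS = 93   # standard MIDI controller number for chorus send level


def scale_to_midi(value, low, high):
    """Scale a sensor reading in [low, high] to a 7-bit MIDI value (0-127)."""
    clamped = min(max(value, low), high)
    return round((clamped - low) / (high - low) * 127)


def control_change(channel, controller, value):
    """Build the three bytes of a Control Change message (channel is 0-15)."""
    status = 0xB0 | (channel & 0x0F)
    return bytes([status, controller & 0x7F, value & 0x7F])


def weather_to_midi(wind_kmh, rain_mm_per_h, channel=0):
    """Map wind to pan and rain to chorus level."""
    return [
        control_change(channel, CC_PAN, scale_to_midi(wind_kmh, 0, 60)),
        control_change(channel, CC_CHORUS, scale_to_midi(rain_mm_per_h, 0, 20)),
    ]


messages = weather_to_midi(wind_kmh=30, rain_mm_per_h=5)
```

Each message is only three bytes, which is probably part of why MIDI suits a small microcontroller: the weather station only has to send a status byte, a controller number, and a value.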

Looking Outwards-04

The SoundShirt is a project created by CuteCircuit, a company co-founded by Francesca Rosella and Ryan Genz. The company was founded on the desire to use technology to amplify haptic senses and interactions. In 2002, they made the HugShirt, a shirt with sensors that recreate the sensation of touch. Fourteen years later, in 2016, they built on this concept to develop the SoundShirt. The SoundShirt contains even more sensors that connect to different sound frequencies and instruments to deliver a specialized haptic experience designed to allow people who cannot hear to experience music. The shirt is designed to work in different music scenarios including live orchestras, concerts, raves, listening to music on your phone, and even playing video games. It is able to adapt to these scenarios to provide an experience that allows you to feel the music in the most authentic way possible.

I first learned about this shirt when I saw it in the Access+Ability show at the CMOA in 2019. I instantly fell in love with the project the first time I saw it. I admire its focus on accessibility and its use of technology and computation to transform sound for a community that has been heavily left behind. Although it is different from what we might be doing in this class, the similarities are clear. While we might be taking sound and finding a way to convert the experience into something visual, this project takes that same idea and theory of computation to translate sound into something tactile. In both scenarios we are using computation to translate one sense into another, which I find beautiful. While I believe this project is currently only available for select testing and presentations, I hope it finds its way into the public market, because I think it is really important for people who are deaf to have the chance to experience it.

Looking Outwards 4: Audio-driven digital art

It is known that simple algorithms can generate complex visual art. Audio-driven digital art, a form of audiovisual art, is similar to generative art in that an algorithm is still responsible for generating the art; however, the algorithm only converts audio input into a visual output. Sound visualization techniques are nothing new; they have been used for music videos, desktop backgrounds, and screen savers for quite some time already. Common sound visualization techniques like waveform visualization are used to add visual flair to an app or a music video, or for something as simple as a volume indicator.
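The volume indicator mentioned above is probably the simplest case of converting audio into a visual. Here is a minimal sketch in Python, assuming the audio arrives as a list of samples between -1.0 and 1.0. The function names and the text-based bar are hypothetical choices of mine, and the sine tone is synthetic test data rather than a real recording.

```python
import math


def rms_level(samples):
    """Root-mean-square amplitude of samples in the range -1.0 to 1.0."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def volume_bar(samples, width=20):
    """Draw the RMS level as a text bar: '#' for filled, '-' for empty."""
    level = min(rms_level(samples), 1.0)
    filled = round(level * width)
    return "#" * filled + "-" * (width - filled)


# Synthetic input: one second of a 440 Hz sine wave at half amplitude.
sample_rate = 8000
tone = [0.5 * math.sin(2 * math.pi * 440 * n / sample_rate)
        for n in range(sample_rate)]
bar = volume_bar(tone)
```

More elaborate audio-driven pieces follow the same pattern of measuring something about the sound and mapping it to a visual property; they just measure more (for example, separate frequency bands) and draw something richer than a bar.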

Only recently has sound visualization become prevalent in art and design. Audiovisual art allows for the synthesis of sight and sound to create fully immersive, unique experiences. Nonotak Studio’s Daydream is a mesmerizing example of audiovisual art being used to create a unique spatial experience. The installation consists of two series of glass panels that have images projected onto them from behind. The resulting effect creates the illusion of being in a larger space, with the echoing sounds making the whole experience more immersive. The pushing and pulling of the projected abstract shapes and spaces coincides with the humming, soothing sounds, creating the sensation of being detached from reality. The algorithmic choreography of sound and light creates art that provides an experience unlike any other.

![[OLD FALL 2020] 15-104 • Introduction to Computing for Creative Practice](../../wp-content/uploads/2021/09/stop-banner.png)