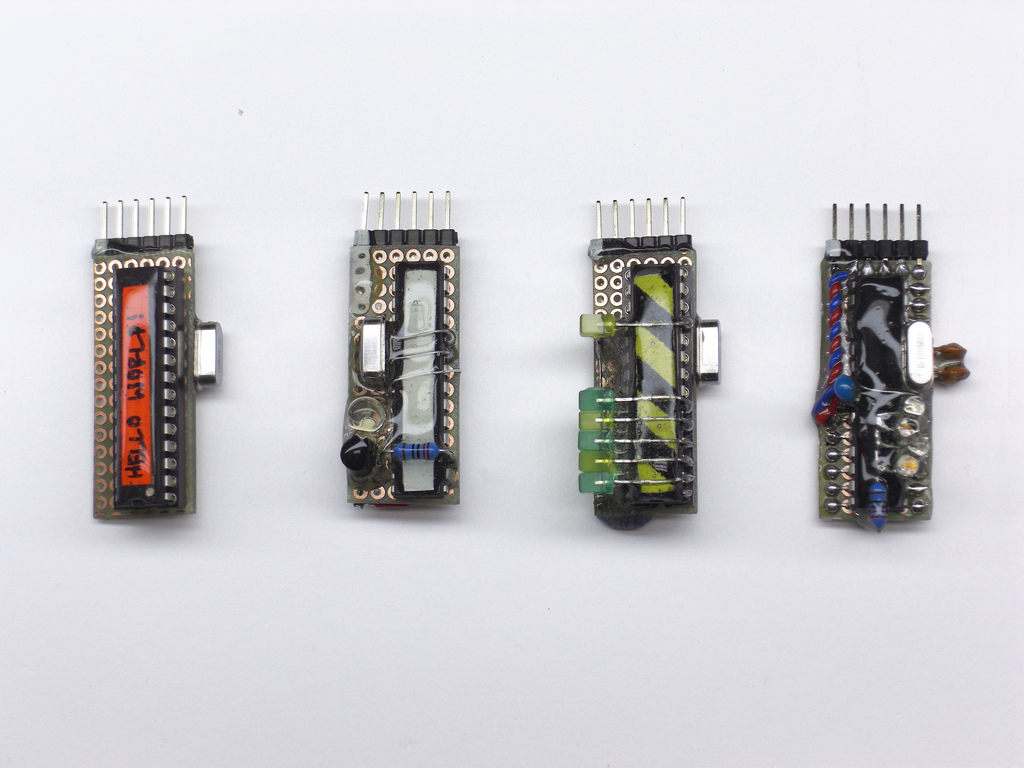

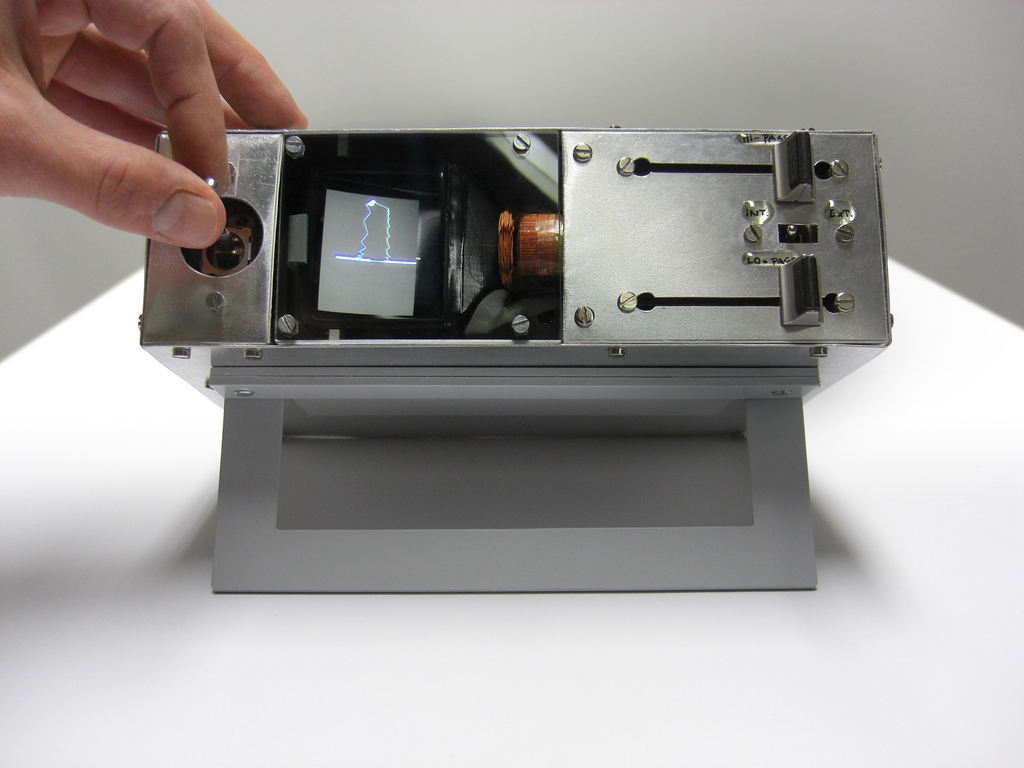

The wheel is a soothing object in general because it follows the shape of the circle, which is a strong geometric figure in the design world. The wheel, or more broadly the circle, is used in many different mechanisms and has allowed many machines to be invented and to work. I was interested in how the creators took the wheel of a bicycle and decided to turn it into sound. A bicycle's wheel is used mainly for movement, but it is also a satisfying movement. It kept me wondering how they would turn an object like a wheel into sound. The way they included the lights as they played with the sound is visually helpful. The algorithm is primarily concerned with manipulating the lights and the sound, including its pitch, volume, and speed, all through the movement of the bicycle wheel. They used a combination of programs and tools, each focused on one of these variables. By combining the variables they were able to make the wheels act as instruments, and the inclusion of lights made it feel almost like a show, so it was fitting that they called the project “the orchestra.”

![[OLD FALL 2018] 15-104 • Introduction to Computing for Creative Practice](https://courses.ideate.cmu.edu/15-104/f2018/wp-content/uploads/2020/08/stop-banner.png)