
```javascript
// Ean Grady
// Section A
// egrady@andrew.cmu.edu
// Project-02

// Petal colors: each hair petal uses either (x, y, z) or (x2, y2, z2)
var x = 0;
var y = 0;
var z = 0;
var x2 = 0;
var y2 = 0;
var z2 = 0;

// Hat color
var b = 0;
var n = 0;
var m = 0;

// x-coordinates of the hat triangle's two lower corners
var trix1 = 160;
var trix2 = 440;

// Top-left corners of the two eyebrow rectangles
var rx1 = 205;
var ry1 = 220;
var rx2 = 315;
var ry2 = 220;

// Eye colors: (w, e, r) for the first pair of arcs, (i, o, p) for the second
var w = 0;
var e = 0;
var r = 0;
var i = 0;
var o = 0;
var p = 0;

// Width of the purple body ellipse
var size = 200;

function setup() {
  createCanvas(640, 480);
}

function draw() {
  background(255, 255, 200);
  noStroke();

  // hair petals
  fill(x2, y2, z2);
  ellipse(215, 210, 70, 60);
  fill(x2, y2, z2);
  ellipse(272, 180, 70, 60);
  fill(x, y, z);
  ellipse(180, 265, 70, 60);
  fill(x2, y2, z2);
  ellipse(180, 330, 70, 60);
  fill(x, y, z);
  ellipse(215, 385, 70, 60);
  fill(x2, y2, z2);
  ellipse(280, 420, 70, 60);
  fill(x, y, z);
  ellipse(360, 405, 70, 60);
  fill(x2, y2, z2);
  ellipse(405, 355, 70, 60);
  fill(x, y, z);
  ellipse(415, 290, 70, 60);
  fill(x2, y2, z2);
  ellipse(395, 230, 70, 60);
  fill(x, y, z);
  ellipse(344, 185, 70, 60);

  // purple body (extends past the bottom edge of the canvas)
  fill(120, 20, 200);
  ellipse(300, 500, size, 200);

  // face
  fill(200, 205, 255);
  ellipse(300, 300, 250, 250);

  // eyes: arc angles are in radians (p5's default angleMode)
  fill(w, e, r);
  arc(240, 260, 80, 40, 150, PI + QUARTER_PI, CHORD);
  arc(360, 260, 80, 40, 150, PI + QUARTER_PI, CHORD);
  fill(i, o, p);
  arc(240, 260, 80, 40, 20, PI + QUARTER_PI, CHORD);
  arc(360, 260, 80, 40, 20, PI + QUARTER_PI, CHORD);
  // white eyeballs with black pupils
  fill(255, 255, 255);
  ellipse(360, 260, 20, 20);
  ellipse(240, 260, 20, 20);
  fill(0, 0, 0);
  ellipse(360, 260, 10, 10);
  ellipse(240, 260, 10, 10);

  // mouth: pink outline around a black opening
  fill(255, 20, 123);
  rect(270, 300, 60, 100);
  fill(0, 0, 0);
  rect(275, 305, 50, 90);

  // eyebrows
  fill(0, 0, 0);
  rect(rx1, ry1, 80, 20);
  rect(rx2, ry2, 80, 20);

  // triangle hat
  fill(b, n, m);
  triangle(trix1, 210, trix2, 210, 300, 100);
}

function mousePressed() {
  // new petal colors
  x = random(0, 255);
  y = random(0, 255);
  z = random(0, 255);
  x2 = random(0, 255);
  y2 = random(0, 255);
  z2 = random(0, 255);

  // new hat color
  b = random(0, 255);
  n = random(0, 255);
  m = random(0, 255);

  // new hat base width
  trix1 = random(100, 160);
  trix2 = random(440, 500);

  // shift the eyebrows slightly (min listed first for readability)
  ry1 = random(200, 220);
  rx1 = random(200, 220);
  ry2 = random(200, 220);
  rx2 = random(310, 320);

  // new eye colors
  w = random(0, 255);
  e = random(0, 255);
  r = random(0, 255);
  i = random(0, 255);
  o = random(0, 255);
  p = random(0, 255);

  // new body width
  size = random(200, 250);
}
```
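The sketch keeps its randomized state in 22 single-letter globals and draws the eleven petals with repeated `fill()`/`ellipse()` pairs. Below is a minimal sketch of one way that state could be organized, assuming the same value ranges as `mousePressed()` in the project code. The names `randomColor`, `randomRange`, `PETALS`, `face`, `randomizeFace`, and `drawPetals` are hypothetical and not part of the original project. The data helpers use `Math.random()` so they run outside p5.js; only `drawPetals()` needs p5's `fill()` and `ellipse()`.

```javascript
// Hypothetical helpers, not from the original project.

// Random [r, g, b] triple, each channel in [0, 255).
function randomColor() {
  return [Math.random() * 255, Math.random() * 255, Math.random() * 255];
}

// Random number in [min, max), like p5's random(min, max).
function randomRange(min, max) {
  return min + Math.random() * (max - min);
}

// Petal centers from the original draw(); "a" = (x, y, z), "b" = (x2, y2, z2).
const PETALS = [
  { x: 215, y: 210, color: "b" },
  { x: 272, y: 180, color: "b" },
  { x: 180, y: 265, color: "a" },
  { x: 180, y: 330, color: "b" },
  { x: 215, y: 385, color: "a" },
  { x: 280, y: 420, color: "b" },
  { x: 360, y: 405, color: "a" },
  { x: 405, y: 355, color: "b" },
  { x: 415, y: 290, color: "a" },
  { x: 395, y: 230, color: "b" },
  { x: 344, y: 185, color: "a" },
];

// All randomized state in one object, with the original starting values.
const face = {
  petalA: [0, 0, 0],   // was x, y, z
  petalB: [0, 0, 0],   // was x2, y2, z2
  hat: [0, 0, 0],      // was b, n, m
  eyeArcA: [0, 0, 0],  // was w, e, r
  eyeArcB: [0, 0, 0],  // was i, o, p
  hatLeft: 160,        // was trix1
  hatRight: 440,       // was trix2
  browLeft: { x: 205, y: 220 },  // was rx1, ry1
  browRight: { x: 315, y: 220 }, // was rx2, ry2
  bodyWidth: 200,      // was size
};

// Re-roll every value using the ranges from the original mousePressed().
function randomizeFace(f) {
  f.petalA = randomColor();
  f.petalB = randomColor();
  f.hat = randomColor();
  f.eyeArcA = randomColor();
  f.eyeArcB = randomColor();
  f.hatLeft = randomRange(100, 160);
  f.hatRight = randomRange(440, 500);
  f.browLeft = { x: randomRange(200, 220), y: randomRange(200, 220) };
  f.browRight = { x: randomRange(310, 320), y: randomRange(200, 220) };
  f.bodyWidth = randomRange(200, 250);
}

// Only callable inside a p5.js sketch, where fill() and ellipse() exist.
function drawPetals(f) {
  for (const petal of PETALS) {
    fill(...(petal.color === "a" ? f.petalA : f.petalB));
    ellipse(petal.x, petal.y, 70, 60);
  }
}
```

In a p5.js sketch, `mousePressed()` would reduce to `randomizeFace(face);` and the eleven petal lines in `draw()` to `drawPetals(face);`. The face, eyes, mouth, eyebrows, and hat would then read their values from `face` instead of the loose globals.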

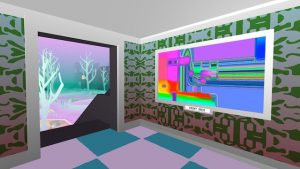

For this project, I didn’t start from a sketch on paper; instead, I went straight into programming, which turned out to be the main problem. I’m fairly happy with how my variable face turned out, but in the future I want to plan with drawings first so that my code is more organized.

![[OLD FALL 2018] 15-104 • Introduction to Computing for Creative Practice](../../wp-content/uploads/2020/08/stop-banner.png)