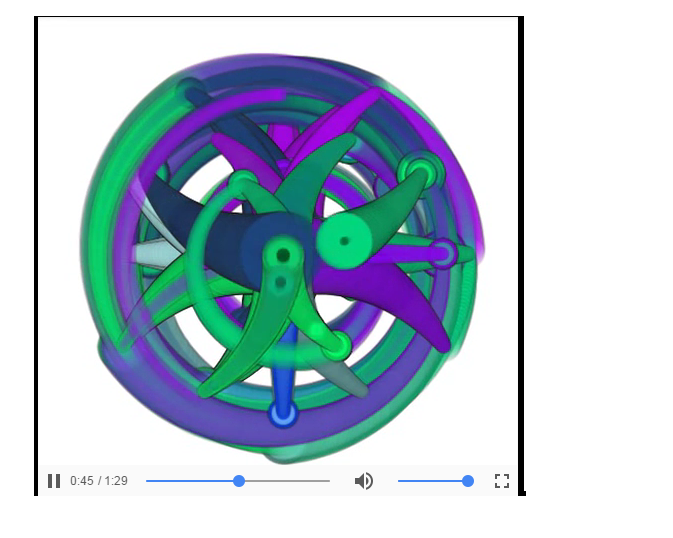

Google has created a virtual reality painting tool called Tilt Brush, which can now react to sound. Artists paint inside a 360-degree VR space and can build layered, sound-reactive pieces whose strokes respond to different sounds, in effect creating musical paintings. I find this project really interesting and inspiring because I never imagined something like it could exist. I was not able to find the algorithm behind these works, but from a bit of research I assume it relies on code-driven visuals that automatically adapt to a live audio input.
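Since I could not find how Tilt Brush actually does this, here is only a rough sketch of the general idea of visuals adapting to audio, not Google's method. It assumes the audio arrives as short frames of samples between -1 and 1, measures how loud each frame is, and maps that loudness to a brush width. The function names and the `base_width`, `gain`, and `smoothing` parameters are all made up for illustration, and a synthetic sine wave stands in for a live microphone.

```python
import math


def rms(samples):
    """Root-mean-square loudness of one frame of audio samples in [-1, 1]."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def reactive_widths(frames, base_width=1.0, gain=4.0, smoothing=0.5):
    """Map each audio frame to a brush width that grows with loudness.

    base_width, gain, and smoothing are hypothetical tuning values,
    not anything taken from Tilt Brush.
    """
    widths = []
    level = 0.0
    for frame in frames:
        # Exponential smoothing so the stroke does not flicker frame to frame.
        level = smoothing * level + (1.0 - smoothing) * rms(frame)
        widths.append(base_width + gain * level)
    return widths


def sine_frame(amplitude, n=256, cycles=4):
    """Synthetic stand-in for one frame of live microphone input."""
    return [amplitude * math.sin(2 * math.pi * cycles * i / n) for i in range(n)]


if __name__ == "__main__":
    # Silence, then a quiet tone, two loud frames, then quiet again.
    frames = [sine_frame(a) for a in (0.0, 0.2, 0.8, 0.8, 0.1)]
    for width in reactive_widths(frames):
        print(f"brush width: {width:.2f}")
```

In this sketch the stroke stays at its base width during silence, thickens as the sound gets louder, and eases back down when it gets quiet. A real sound-reactive brush would probably look at frequency content too, not just loudness.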

Here is a link to the project:

https://www.tiltbrush.com/
