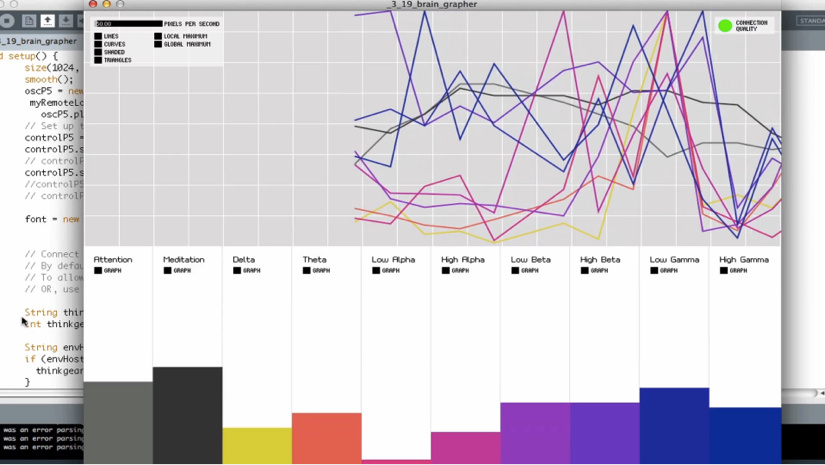

Ian Chang’s “Spiritual Leader” is an interesting sound art/music project because he uses both mechanical drum sounds and human-produced drum sounds to create a percussive piece in which the two blend together. As a result, it can be unclear sonically what is the computer and what is Ian.

Chang’s collaboration with Endless Endless is a video featuring a light projection installation, driven by the drum beats, that shines different lights and projections onto Chang as he plays the drums. This creates an environment that is simultaneously sonically and visually percussive. I found the effect interesting and really successful because, while the human and mechanical sounds blend, the lights only turn on through the human interaction that creates the beats. You can really feel the presence of the artist, even though the environment and many of the sounds are produced by a computer.

http://thecreatorsproject.vice.com/blog/sample-based-drums-psychedelic-light-show
