Because it can’t be viewed properly on WordPress, please download the file.

I have been fascinated by animation ever since I was little, and I have always wanted to create something that would help introduce people to making animations. I made this so that anyone, at any age, can get the idea and possibly be inspired.

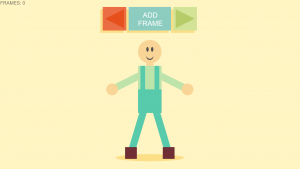

SAMPLE:

Instructions:

- Adjust your character to your preferred position. Please move the character slowly.

- Save the frame by clicking the "ADD FRAME" button.

- Move your character a little at a time, and save the frame after every small change.

- You can view your animation forward and backward by pressing and holding one of the arrow buttons.
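
The steps above amount to saving a list of poses and then stepping through that list in either direction, wrapping around at the ends. Here is a minimal sketch of that idea in plain JavaScript. It is an illustration only, not the project code: `FrameStore`, `save`, and `step` are hypothetical names, and a pose is simplified to a single `{x, y}` point.

```javascript
// Hypothetical helper, not part of the original sketch: stores saved poses
// and steps through them with wrap-around in either direction.
class FrameStore {
  constructor() {
    this.frames = []; // saved poses, oldest first
    this.index = -1;  // index of the pose currently shown (-1 = none yet)
  }

  // Save a copy of the current pose as a new frame.
  save(pose) {
    this.frames.push({ ...pose });
    this.index = this.frames.length - 1;
  }

  // Move one frame forward (+1) or backward (-1), wrapping at either end.
  step(direction) {
    const n = this.frames.length;
    if (n === 0) return null; // nothing saved yet
    this.index = (this.index + direction + n) % n;
    return this.frames[this.index];
  }
}

const store = new FrameStore();
store.save({ x: 640, y: 380 });
store.save({ x: 650, y: 380 });
store.save({ x: 660, y: 380 });

// The latest frame is showing, so stepping forward wraps to the first frame.
console.log(store.step(1)); // { x: 640, y: 380 }
```

Holding an arrow button would correspond to calling `step(1)` or `step(-1)` once per drawn frame. Using a modulo for the wrap-around is one possible design; the project code below uses explicit comparisons instead.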

//Sheenu You

//Section E

//sheenuy@andrew.cmu.edu;

//Final Project

//Initial X and Y coordinates for the Ragdoll Bodyparts

var charx = 640;

var chary = 380;

var hedx = 640;

var hedy = 220;

var arlx = 640 - 160;

var arly = 380;

var arrx = 640 + 160;

var arry = 380;

var brlx = 640 - 80;

var brly = 650;

var brrx = 640 + 80;

var brry = 650;

//Arrays that register all the X Y coordinates of all the bodyparts

var xb = [];

var yb = [];

var xh = [];

var yh = [];

var xal = [];

var yal = [];

var xar = [];

var yar = [];

var xbl = [];

var ybl = [];

var xbr = [];

var ybr = [];

//Variables that track animation frames

var frameNumber = -1;

var frames = 0;

//Variables that test if functions are running.

var initialized = 0;

var forward = 0;

var backward = 0;

function setup() {

createCanvas(1280, 720);

background(220);

frameRate(24);

}

function draw() {

noStroke();

//Controls drawing of buttons

var playing = 0;

var blockyMath = 100;

var distance = 150;

background(255, 245, 195);

body();

fill(124, 120, 106);

textSize(20);

text("FRAMES: " + frames, 5, 20);

//Draws NewFrame Button

fill(252, 223, 135);

rect(width/2 - 10, 80 + 10, 180, 100);

fill(141, 205, 193);

rect(width/2, 80, 180, 100);

//Draws Rewind/Stop Button (left of ADD FRAME, triangle points left)

fill(252, 223, 135);

rect((width/2) - distance - 10, 80 + 10, blockyMath, blockyMath);

fill(235, 110, 68);

rect((width/2) - distance, 80, blockyMath, blockyMath);

//Fills triangle if one frame is made

if (frames >= 1){

fill(255);

} else {

fill(231, 80, 29);

}

triangle((width/2) - distance + 40, 40, (width/2) - distance + 40, 120, (width/2) - distance - 40, 80);

push();

fill(255);

textSize(30);

text("ADD", 610, 75);

text("FRAME", 590, 110);

pop();

//Draws Play/Stop Button (right of ADD FRAME, triangle points right)

fill(252, 223, 135);

rect((width/2) + distance - 10, 80 + 10, blockyMath, blockyMath);

fill(211, 227, 151);

rect((width/2) + distance, 80, blockyMath, blockyMath);

if (frames >= 1){

fill(255);

} else {

fill(182, 204, 104);

}

triangle((width/2) + distance - 40, 40, (width/2) + distance - 40, 120, (width/2) + distance + 40, 80);

//PLAY/Stop Button Functions-Cycles forward through frames if mouse is pressing the button

if (mouseX >= ((width/2) + distance - blockyMath/2) && mouseX <= ((width/2) +

distance + blockyMath/2) && mouseY >= 30 && mouseY <= 135 && mouseIsPressed && frames >= 1){

frameNumber += 1;

//Cycles through all arrays

charx = xb[frameNumber];

chary = yb[frameNumber];

hedx = xh[frameNumber];

hedy = yh[frameNumber];

arlx = xal[frameNumber];

arly = yal[frameNumber];

arrx = xar[frameNumber];

arry = yar[frameNumber];

brlx = xbl[frameNumber];

brly = ybl[frameNumber];

brrx = xbr[frameNumber];

brry = ybr[frameNumber];

playing = 1;

initialized = 1;

forward = 1;

//Goes back to latest frame when mouse is released.

} else if (forward == 1){

frameNumber = xb.length - 1;

playing = 0;

initialized = 0;

forward = 0;

}

//REWIND/Stop Button Functions-Cycles backward through frames if mouse is pressing the button

if (mouseX >= ((width/2) - distance - blockyMath/2) && mouseX <= ((width/2) - distance +

blockyMath/2) && mouseY >= 30 && mouseY <= 135 && mouseIsPressed && frames >= 1){

frameNumber -= 1;

//Cycles through all arrays

charx = xb[frameNumber];

chary = yb[frameNumber];

hedx = xh[frameNumber];

hedy = yh[frameNumber];

arlx = xal[frameNumber];

arly = yal[frameNumber];

arrx = xar[frameNumber];

arry = yar[frameNumber];

brlx = xbl[frameNumber];

brly = ybl[frameNumber];

brrx = xbr[frameNumber];

brry = ybr[frameNumber];

initialized = 1;

playing = 1;

backward = 1;

//Goes back to latest frame when mouse is released.

} else if (backward == 1){

frameNumber = xb.length - 1;

playing = 0;

initialized = 0;

backward = 0;

}

//Allows frames to loop when animation is going forward

if (frameNumber >= xb.length - 1 && playing == 1 && forward == 1){

frameNumber = -1;

}

//Allows frame to loop when animation is going backward

if (frameNumber <= 0 && backward == 1 && playing == 1){

frameNumber = xb.length;

}

}

//Draws Ragdoll

function body(){

//Shadow

fill(252, 223, 135);

ellipse(charx, 670, (chary/2) + 100, 30);

//Ligaments

//Neck

push();

stroke(249, 213, 151);

strokeWeight(30);

line(charx, chary - 80, hedx, hedy);

stroke(190, 228, 171);

//ArmLeft

line(charx, chary - 80, arlx, arly);

//ArmRight

line(charx, chary - 80, arrx, arry);

stroke(88, 203, 172);

//FootLeft

line(charx - 20, chary + 70, brlx, brly);

//FootRight

line(charx + 20, chary + 70, brrx, brry);

pop();

noStroke();

rectMode(CENTER);

//Head

fill(249, 213, 151);

ellipse(hedx, hedy, 100, 100);

fill(80);

ellipse(hedx - 10, hedy - 10, 10, 20);

ellipse(hedx + 10, hedy - 10, 10, 20);

ellipse(hedx, hedy + 20, 30, 30);

fill(249, 213, 151);

ellipse(hedx, hedy + 15, 30, 30);

//Left Arm

fill(249, 213, 151);

ellipse(arlx, arly, 50, 50);

//Right Arm

fill(249, 213, 151);

ellipse(arrx, arry, 50, 50);

//Left Foot

fill(112, 47, 53);

rect(brlx, brly, 50, 50);

//Right Foot

fill(112, 47, 53);

rect(brrx, brry, 50, 50);

//Character

fill(190, 228, 171);

rect(charx, chary, 100, 200);

fill(88, 203, 172);

rect(charx, chary + 50, 100, 100);

fill(88, 203, 172);

rect(charx + 30, chary - 50, 20, 100);

rect(charx - 30, chary - 50, 20, 100);

//MouseDrag Body

if (mouseX >= charx - 50 && mouseX <= charx + 50 && mouseY >= chary - 100 && mouseY <= chary + 100 && mouseIsPressed){

charx = mouseX;

chary = mouseY;

}

//MouseDrag Head

if (mouseX >= hedx - 50 && mouseX <= hedx + 50 && mouseY >= hedy - 50 && mouseY <= hedy + 50 && mouseIsPressed){

hedx = mouseX;

hedy = mouseY;

}

//MouseDrag Left Arm

if (mouseX >= arlx - 25 && mouseX <= arlx + 25 && mouseY >= arly - 25 && mouseY <= arly + 25 && mouseIsPressed){

arlx = mouseX;

arly = mouseY;

}

//MouseDrag Right Arm

if (mouseX >= arrx - 25 && mouseX <= arrx + 25 && mouseY >= arry - 25 && mouseY <= arry + 25 && mouseIsPressed){

arrx = mouseX;

arry = mouseY;

}

//MouseDrag Left Foot

if (mouseX >= brlx - 25 && mouseX <= brlx + 25 && mouseY >= brly - 25 && mouseY <= brly + 25 && mouseIsPressed){

brlx = mouseX;

brly = mouseY;

}

//MouseDrag Right Foot

if (mouseX >= brrx - 25 && mouseX <= brrx + 25 && mouseY >= brry - 25 && mouseY <= brry + 25 && mouseIsPressed){

brrx = mouseX;

brry = mouseY;

}

}

function mousePressed(){

//Records the character's coordinates as a new frame when the "ADD FRAME" button is pressed

if (mouseX >= (width/2) - 90 && mouseX <= (width/2) + 90 && mouseY >= 30 && mouseY <= 135 && mouseIsPressed){

frameNumber += 1;

frames += 1;

//Push character coordinates to x y arrays.

xb.push(charx);

yb.push(chary);

xh.push(hedx);

yh.push(hedy);

xal.push(arlx);

yal.push(arly);

xar.push(arrx);

yar.push(arry);

xbl.push(brlx);

ybl.push(brly);

xbr.push(brrx);

ybr.push(brry);

//Flash

background(255, 0, 0, 90);

}

}

//Resets all body parts to x y coordinates of last frame created when mouse is released

//and cycling is over.

function mouseReleased(){

if (initialized == 1){

charx = xb[xb.length - 1];

chary = yb[yb.length - 1];

hedx = xh[xh.length - 1];

hedy = yh[yh.length - 1];

arlx = xal[xal.length - 1];

arly = yal[yal.length - 1];

arrx = xar[xar.length - 1];

arry = yar[yar.length - 1];

brlx = xbl[xbl.length - 1];

brly = ybl[ybl.length - 1];

brrx = xbr[xbr.length - 1];

brry = ybr[ybr.length - 1];

}

}
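
The sketch above keeps each body part's coordinates in its own pair of arrays and repeats the same bounding-box test for every part. One possible alternative, shown here only as a rough illustration, is to keep a whole pose in a single object and save copies of it. The names `pose`, `savedPoses`, `savePose`, and `isOver` are hypothetical and do not appear in the project; the starting coordinates are the initial values from the code above.

```javascript
// Hypothetical restructuring, not the original code: one pose object holds
// every body part, and one array holds every saved pose.
const pose = {
  body:      { x: 640, y: 380 },
  head:      { x: 640, y: 220 },
  leftArm:   { x: 480, y: 380 },
  rightArm:  { x: 800, y: 380 },
  leftFoot:  { x: 560, y: 650 },
  rightFoot: { x: 720, y: 650 },
};

const savedPoses = [];

// Copy every part so that later dragging does not change saved frames.
function savePose(current) {
  const copy = {};
  for (const part of Object.keys(current)) {
    copy[part] = { x: current[part].x, y: current[part].y };
  }
  savedPoses.push(copy);
  return savedPoses.length; // number of frames saved so far
}

// True when (px, py) lies inside a box of the given half-size centred on part.
function isOver(part, px, py, halfW, halfH) {
  return px >= part.x - halfW && px <= part.x + halfW &&
         py >= part.y - halfH && py <= part.y + halfH;
}
```

With this layout, a drag test such as the head's would read `isOver(pose.head, mouseX, mouseY, 50, 50)`, and adding a frame would be a single `savePose(pose)` call. Whether that is clearer than separate arrays is a matter of taste.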

