I feel really stupid for how long I spent trying to get Colab to work. If I weren't making this assignment up a few weeks late, I would've just gone to office hours, but since I'm already so late, I used Pixray instead.

My prompt was: “friendly elves frolicking in a meadow, strawberry picking with baskets, with a giant rainbow in the sky”

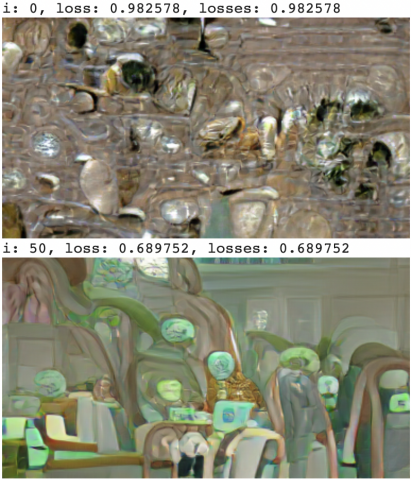

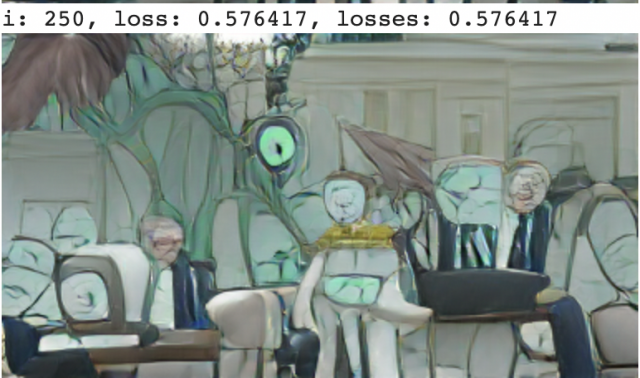

It was fun watching the image slowly develop over the iterations. Here are some of the first ones:

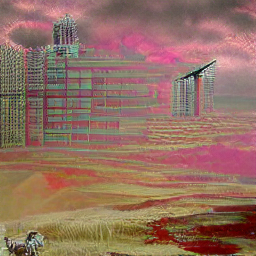

Compared to the end product, they're so different! Anyway, I really love the final picture, especially how cute the elves are! I just kind of wish the rainbow were there!