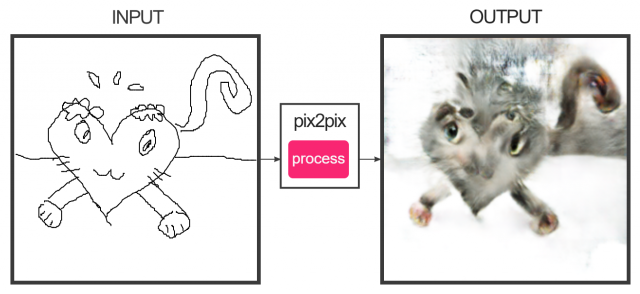

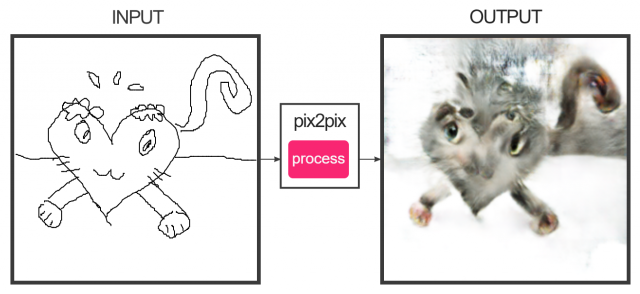

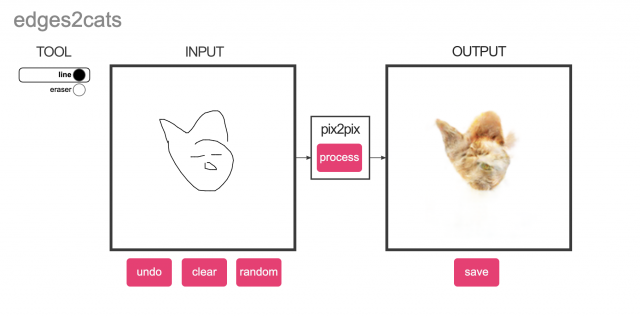

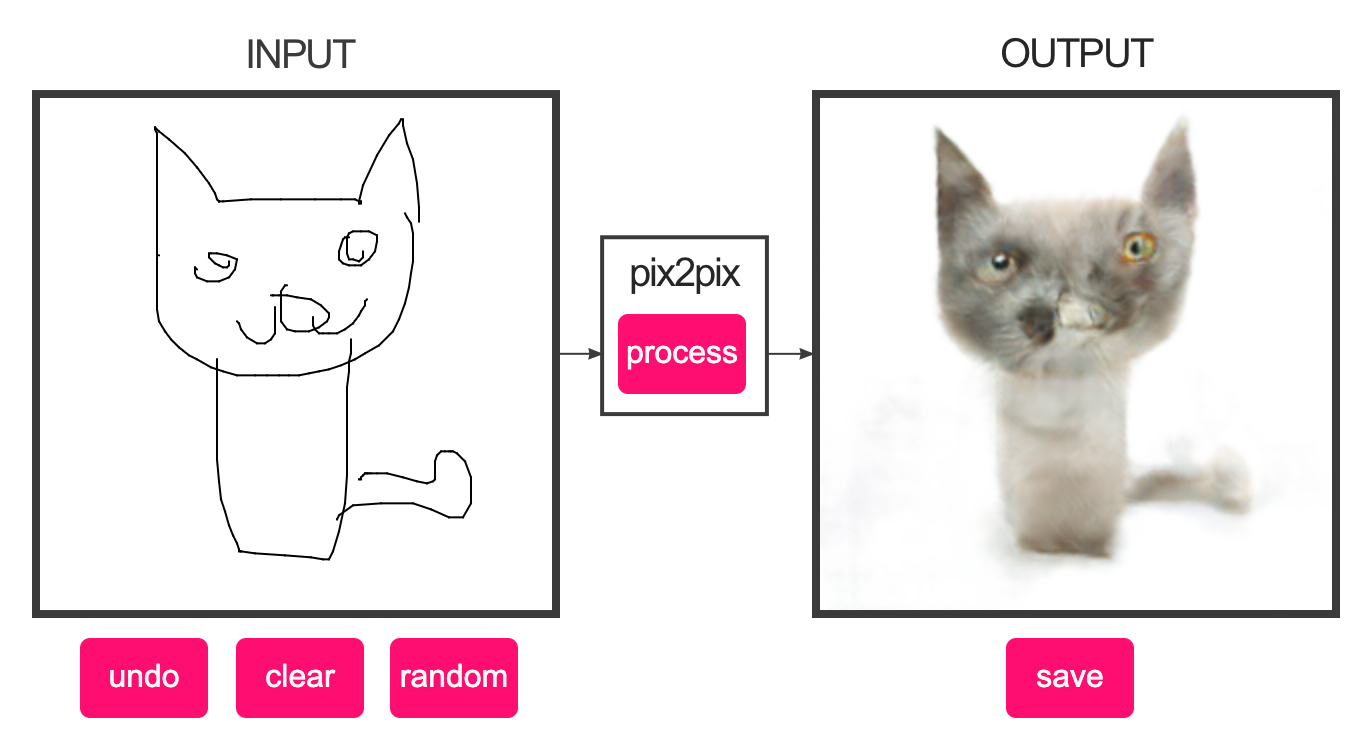

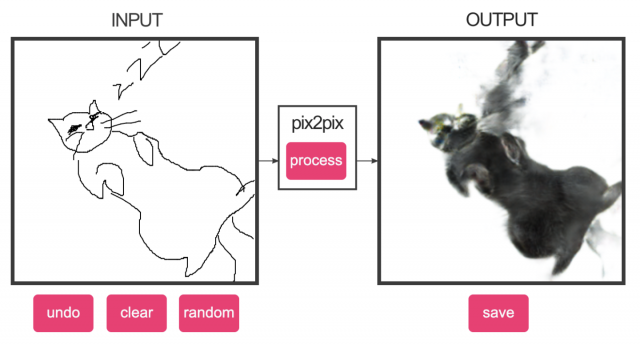

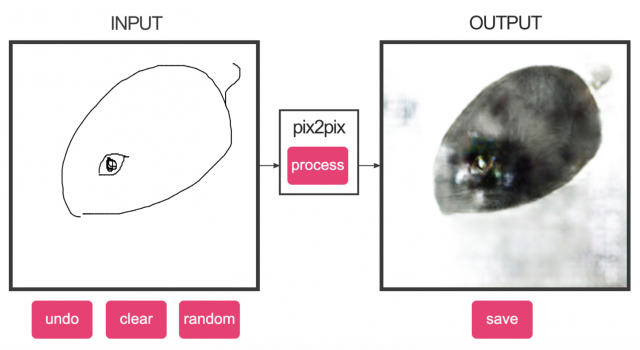

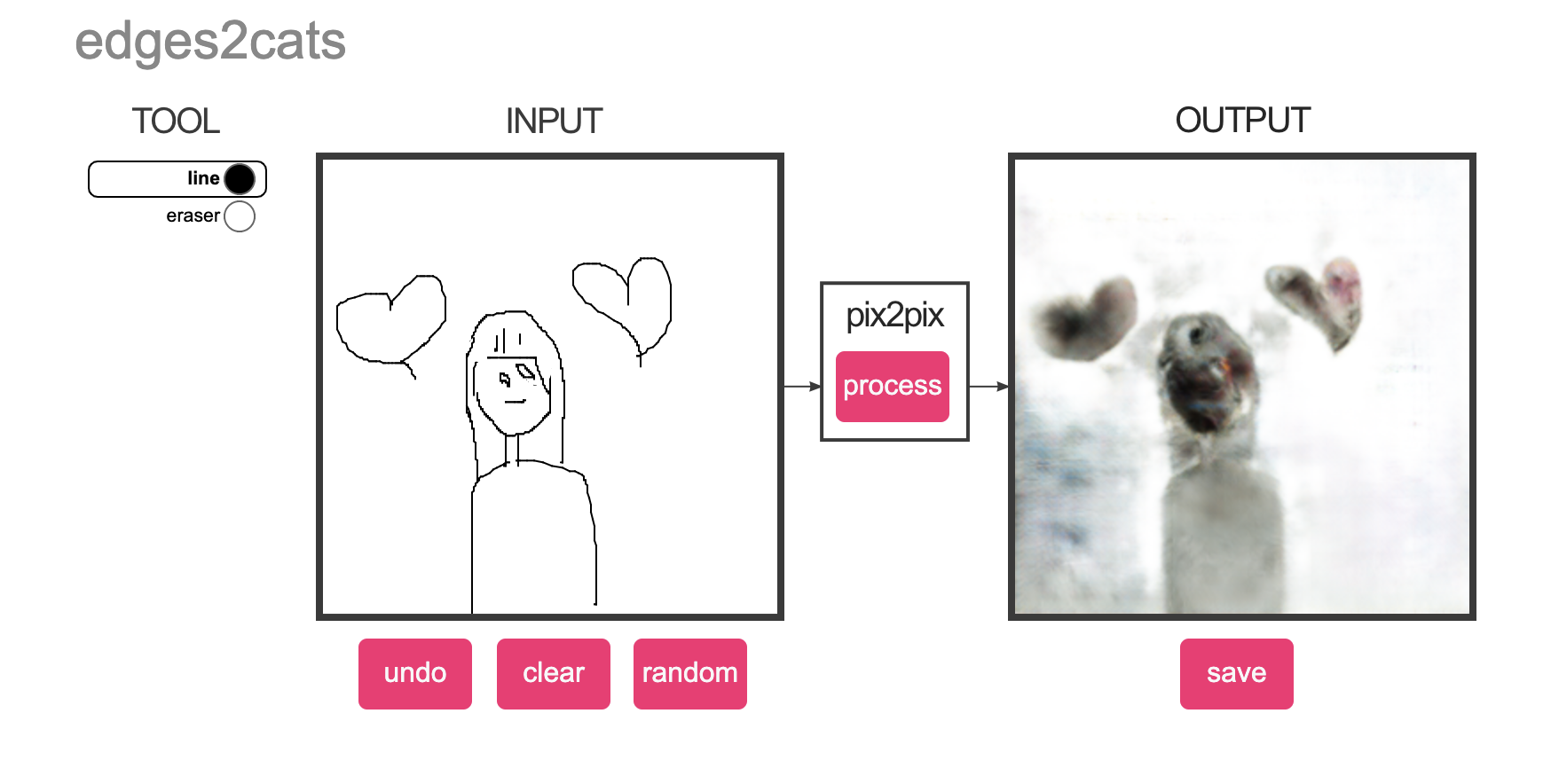

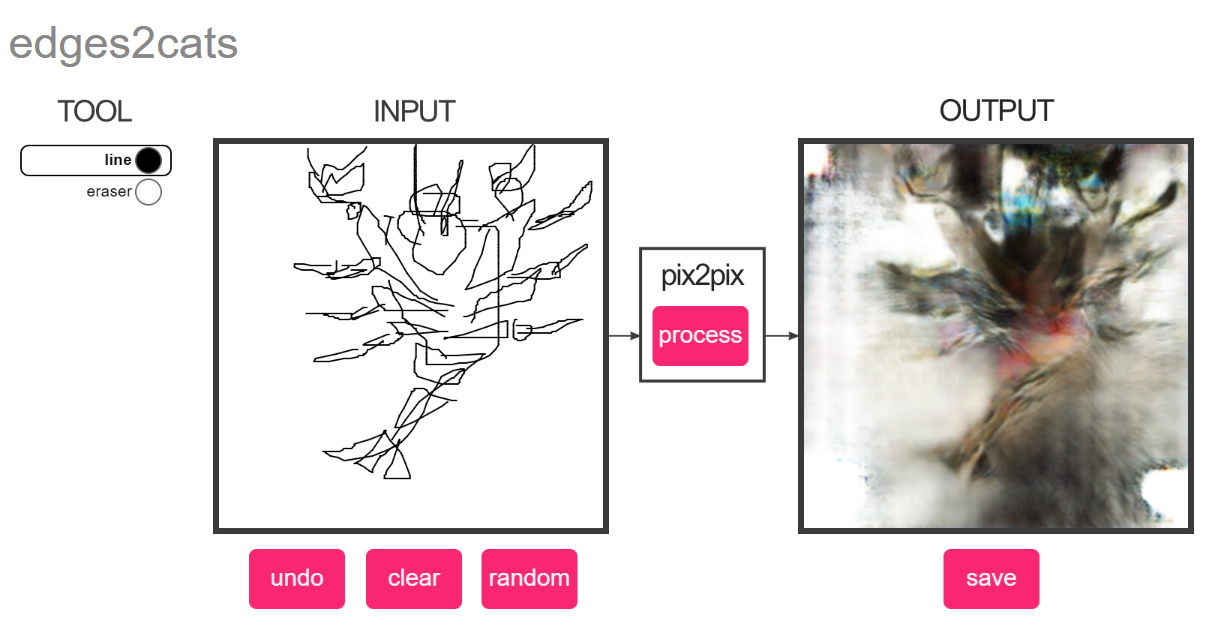

I wanted to see how Pix2Pix would generate images from sketches that do not look like cats. One of the first things I tried drawing was a snake; it really did not turn out.

60-212: Interactivity and Computation for Creative Practice

CMU School of Art / IDeATe, Fall 2020 • Prof. Golan Levin

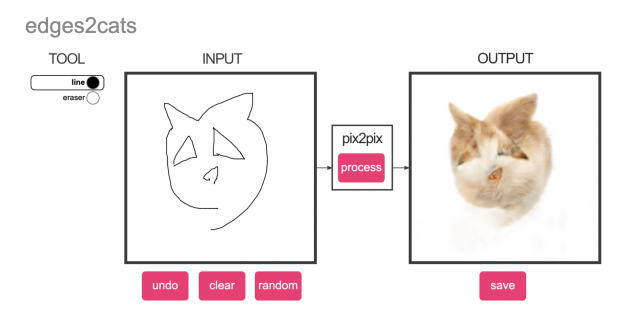

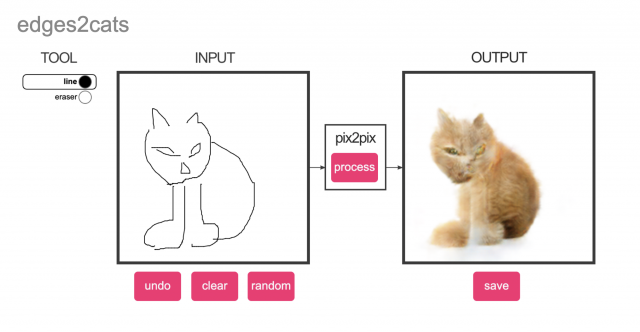

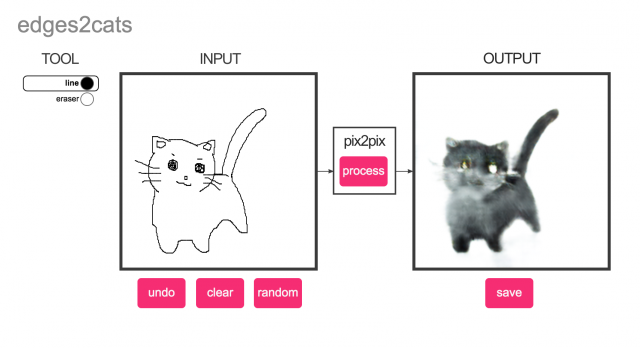

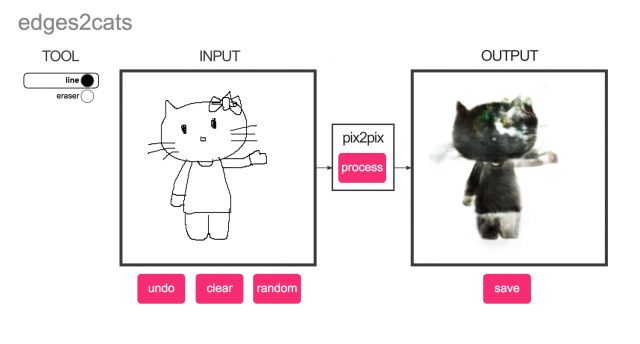

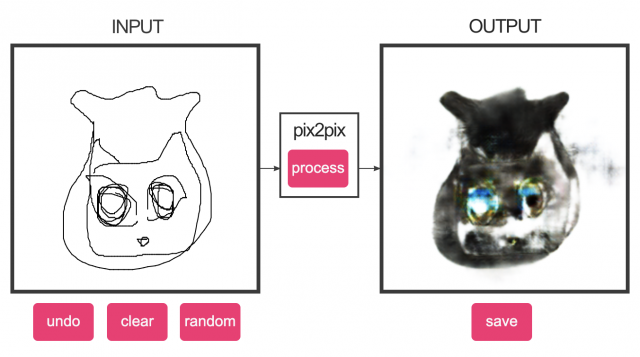

My guess is that these models were trained by running an edge detection algorithm on cat photos, feeding the resulting edge map into the model, and expecting the original cat photo as the output. If so, the model won't produce realistic cat features from hand drawings that merely allude to them, since a drawn symbol doesn't look like the edges of a real feature. In the image below, the triangle eyes are just converted to fur instead of eyes. The same failure to recognize hand-drawn features persists in the other attempts. Still a fun tool, though.
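The training guess above can be made a bit more concrete. What follows is a minimal, illustrative sketch, not the demo's actual pipeline: it assumes a plain Sobel gradient-magnitude threshold as the edge detector (the published pix2pix edge datasets are reported to use a learned detector, HED, so this is a simplification), and the names `sobel_edges` and `make_training_pair` are hypothetical. It shows the shape of the data: every input the model sees is an edge map traced from a real photo, paired with that photo as the target.

```python
from typing import List, Tuple

# A grayscale image as rows of floats in [0, 1] (assumption for this sketch).
Image = List[List[float]]


def sobel_edges(img: Image, threshold: float = 0.5) -> List[List[int]]:
    """Binary edge map (1 = edge) from Sobel gradient magnitude.

    Assumption: a simple Sobel + threshold stands in for whatever edge
    detector was really used to build the training set. Border pixels
    are left as 0 because the 3x3 kernel does not fit there.
    """
    h, w = len(img), len(img[0])
    gx_kernel = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
    gy_kernel = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]
    edges = [[0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            gx = gy = 0.0
            # Convolve the 3x3 neighborhood with both Sobel kernels.
            for dy in range(3):
                for dx in range(3):
                    p = img[y + dy - 1][x + dx - 1]
                    gx += gx_kernel[dy][dx] * p
                    gy += gy_kernel[dy][dx] * p
            if (gx * gx + gy * gy) ** 0.5 > threshold:
                edges[y][x] = 1
    return edges


def make_training_pair(photo: Image) -> Tuple[List[List[int]], Image]:
    """Hypothetical (input, target) pair: edges in, original photo expected out."""
    return sobel_edges(photo), photo


if __name__ == "__main__":
    # Toy "photo": dark left two columns, bright right three columns.
    photo = [[0.0, 0.0, 1.0, 1.0, 1.0] for _ in range(5)]
    edges, target = make_training_pair(photo)
    for row in edges:
        print(row)
```

In the toy example, only the interior pixels straddling the dark-to-bright boundary are marked as edges. Under this assumption, a hand-drawn triangle eye produces strokes unlike anything in the edge maps the model was trained on, which may help explain why it came out as fur.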

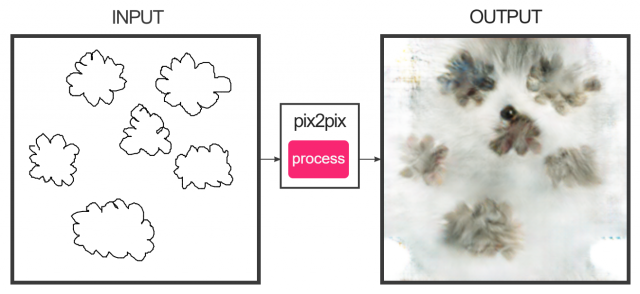

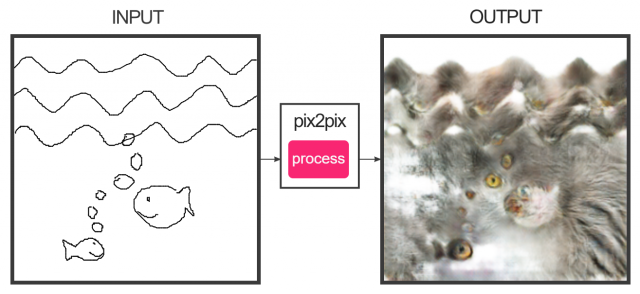

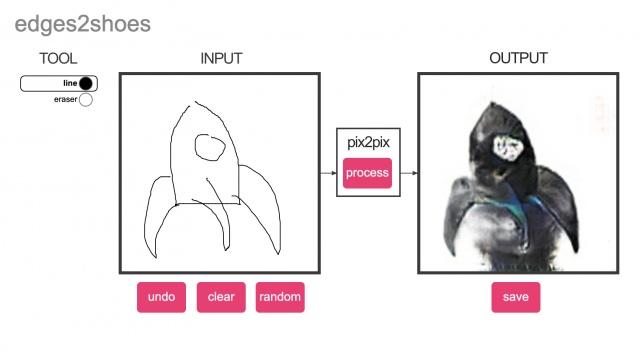

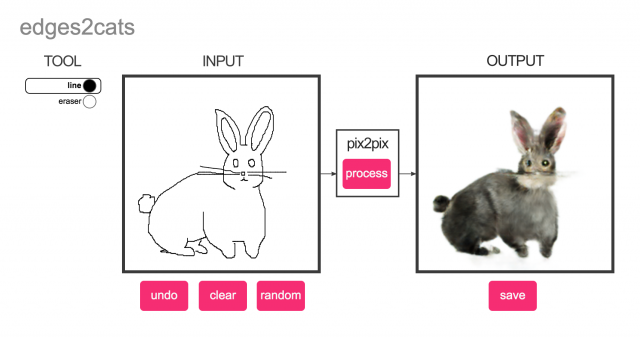

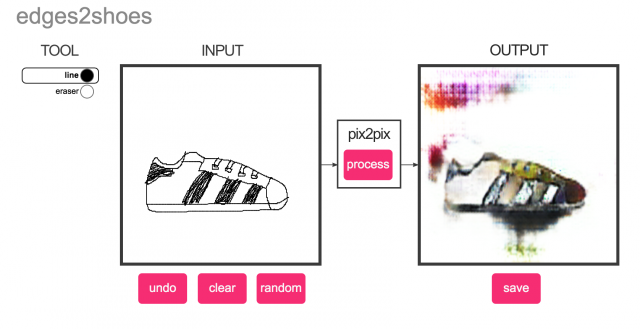

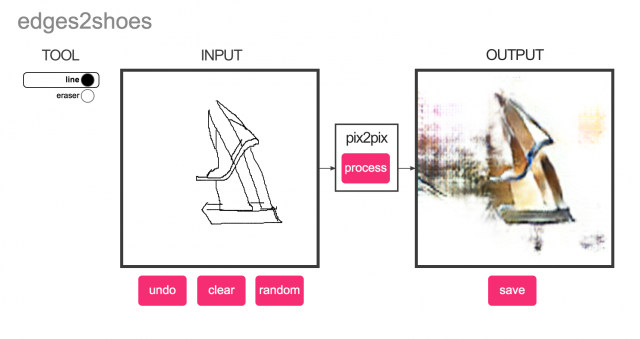

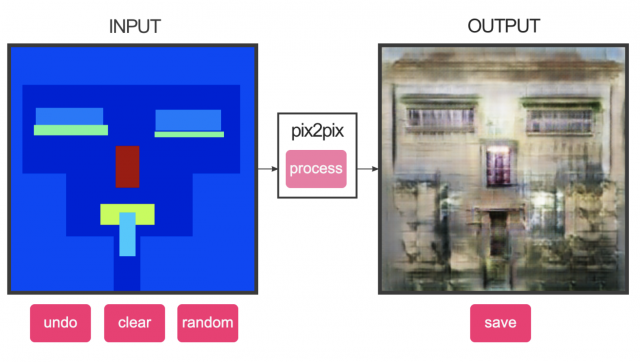

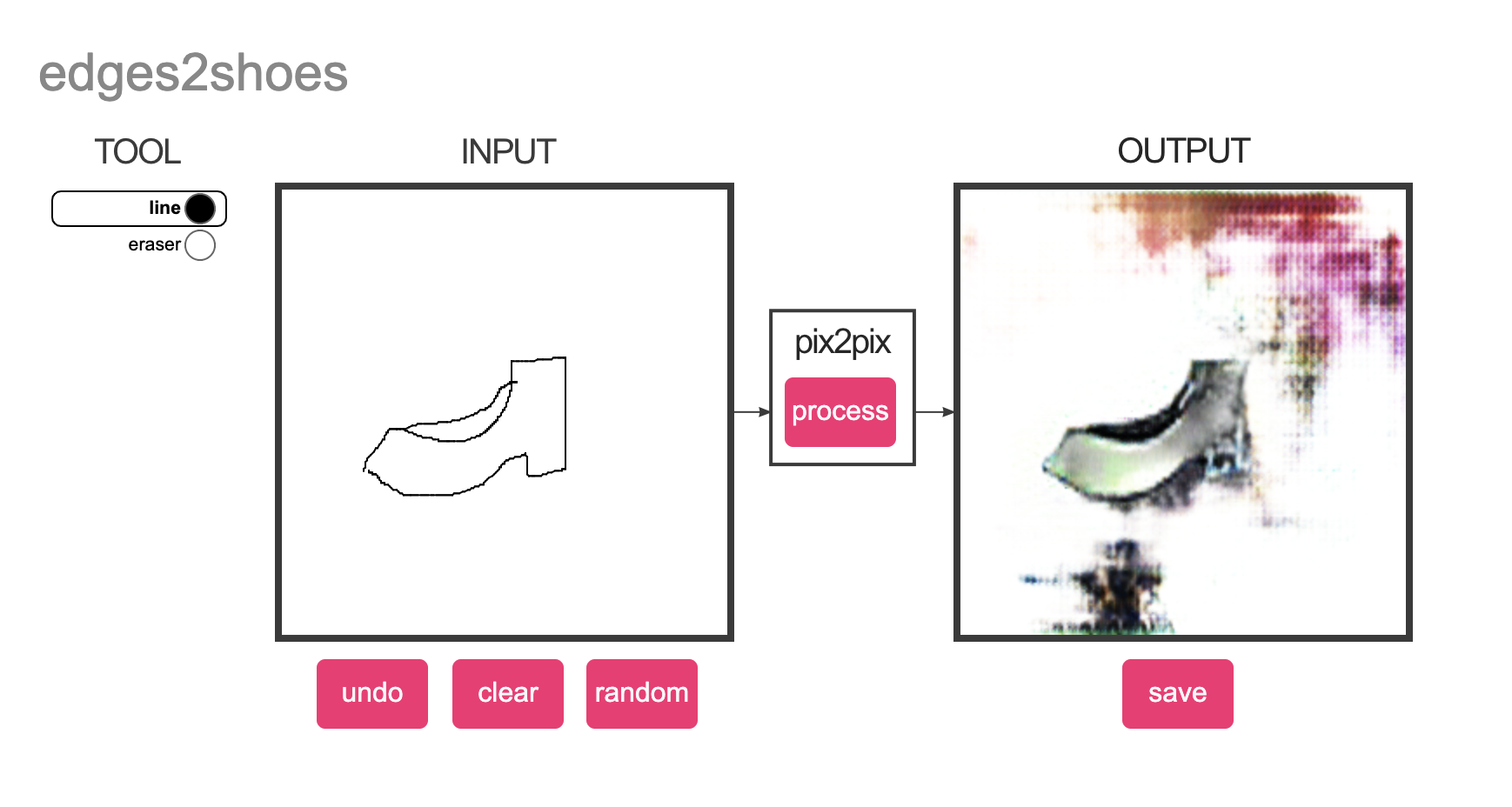

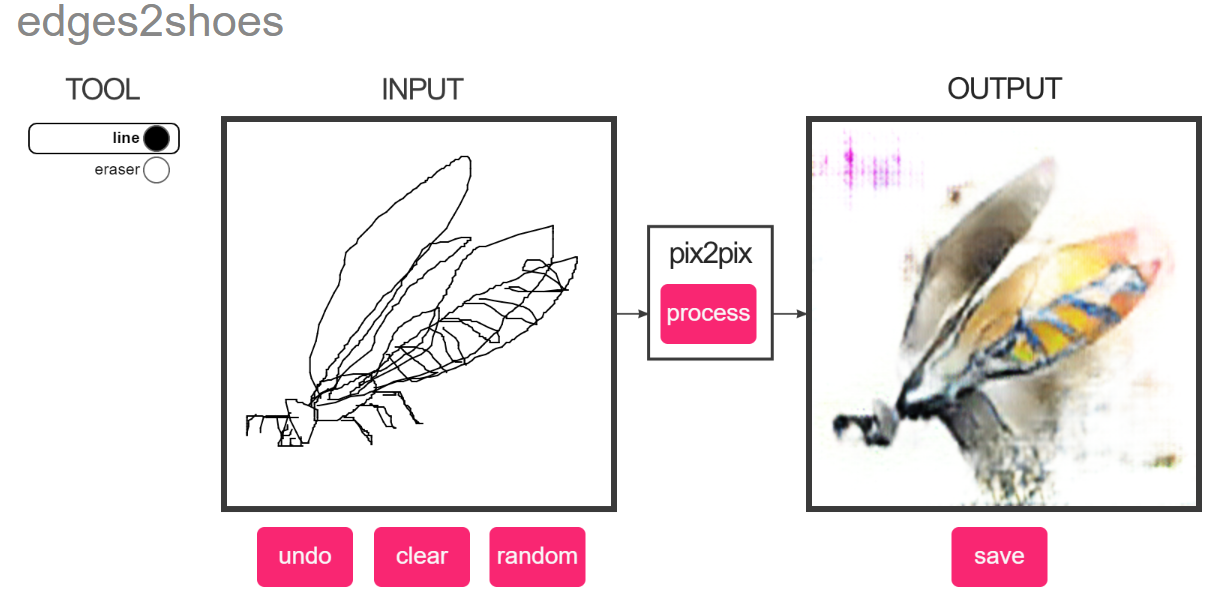

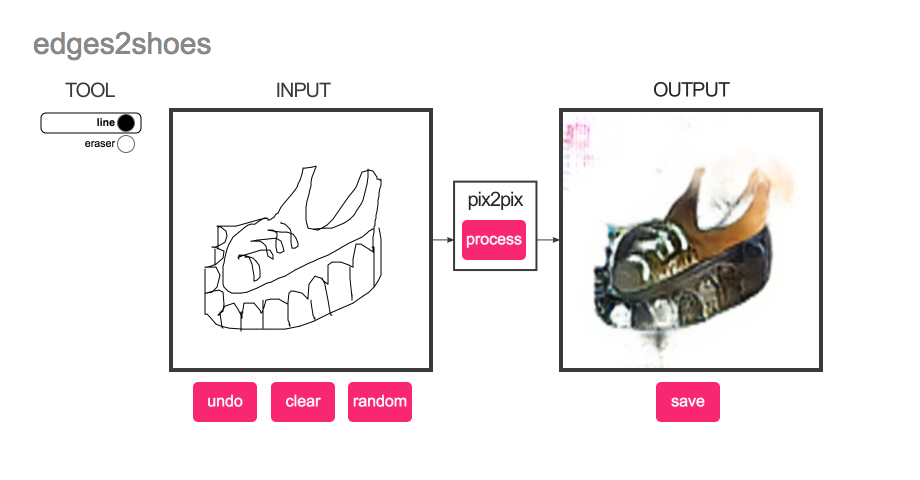

I thought it was interesting that these tools expect drawings of cats, bags, etc. only from angles at which one might photograph them. So I wanted to see what the tools would output if the user actively tries not to draw the thing they expect. Honestly, the first image, with the rocket ship, is kind of funny: by trying to make it a shoe, the algorithm made it look like it is launching.

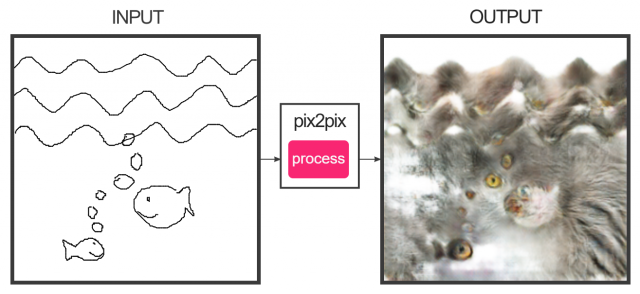

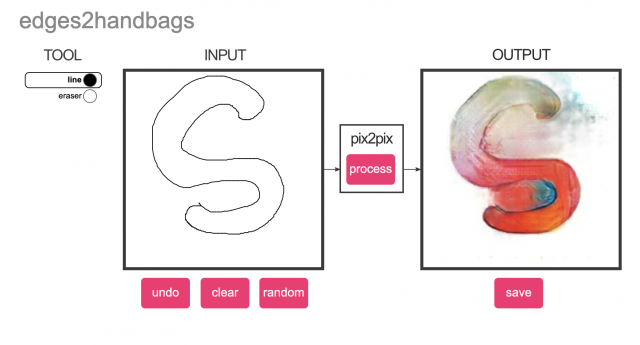

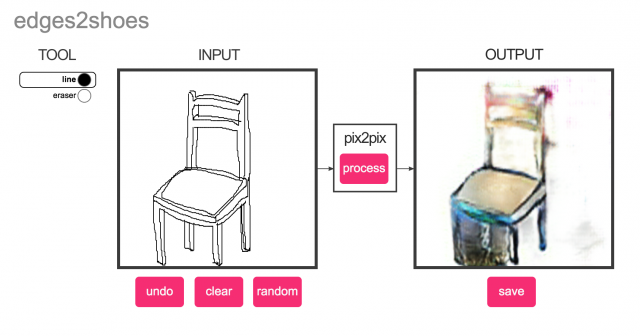

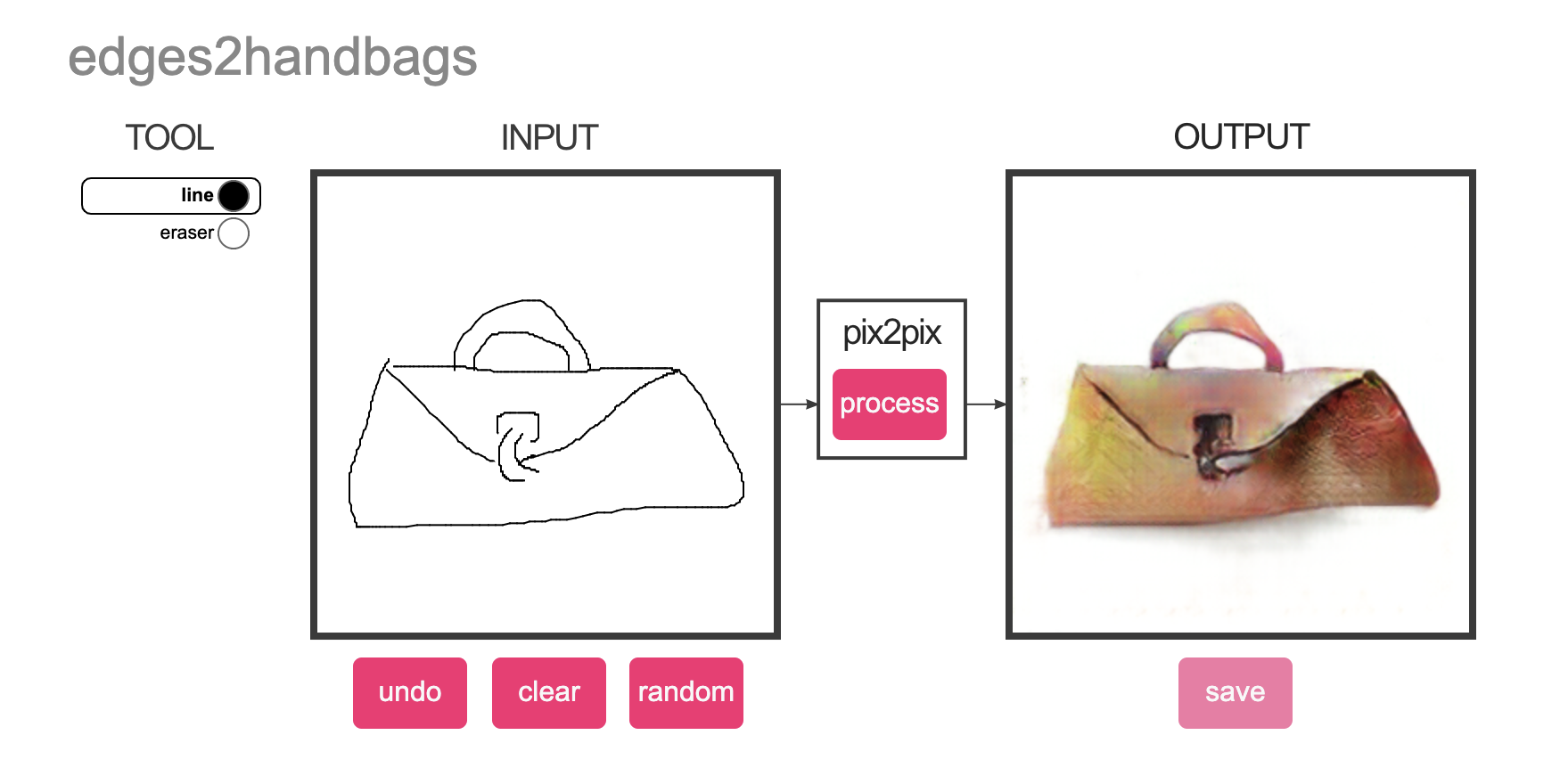

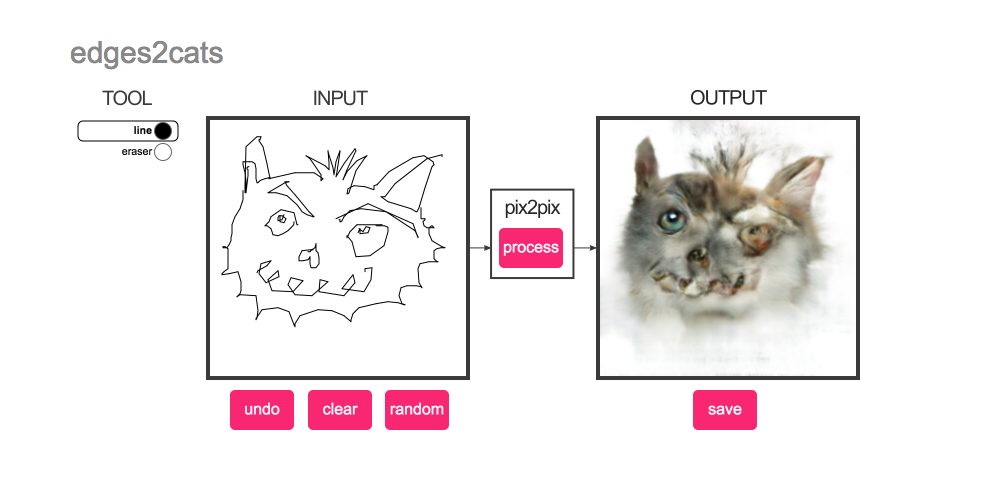

I really enjoyed exploring this tool! I tried drawing in three ways (something that fits my understanding of the object, a more stylized version, and an unrelated object) for edges2cats and edges2shoes and got some interesting results.

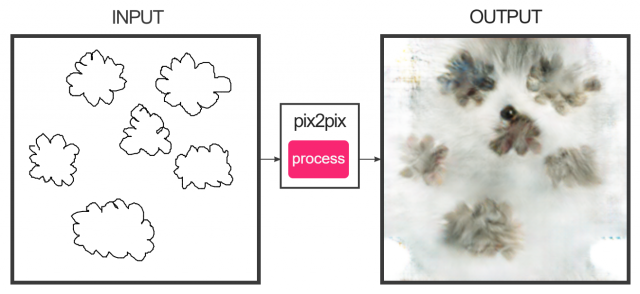

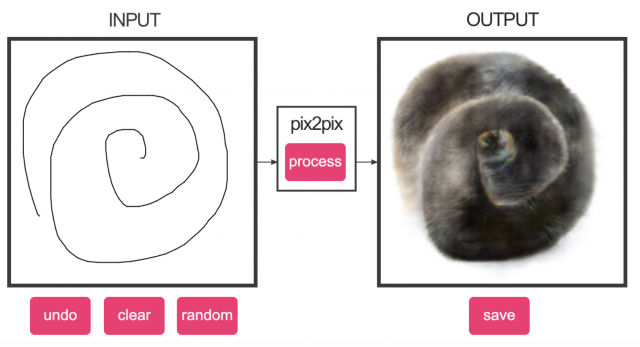

This is more interesting than I expected! I loved how the algorithm sees so much that I don’t see.

edges2cats:

(shiny blue eyes +_+)

edges2handbags:

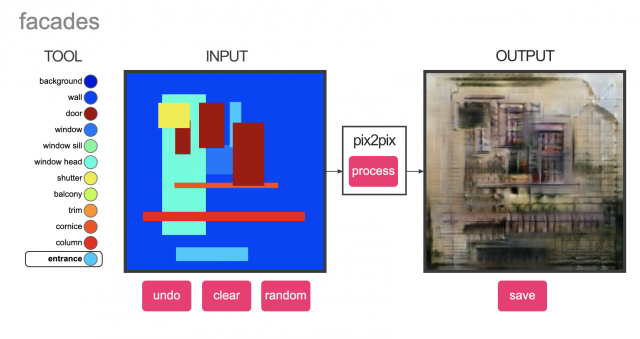

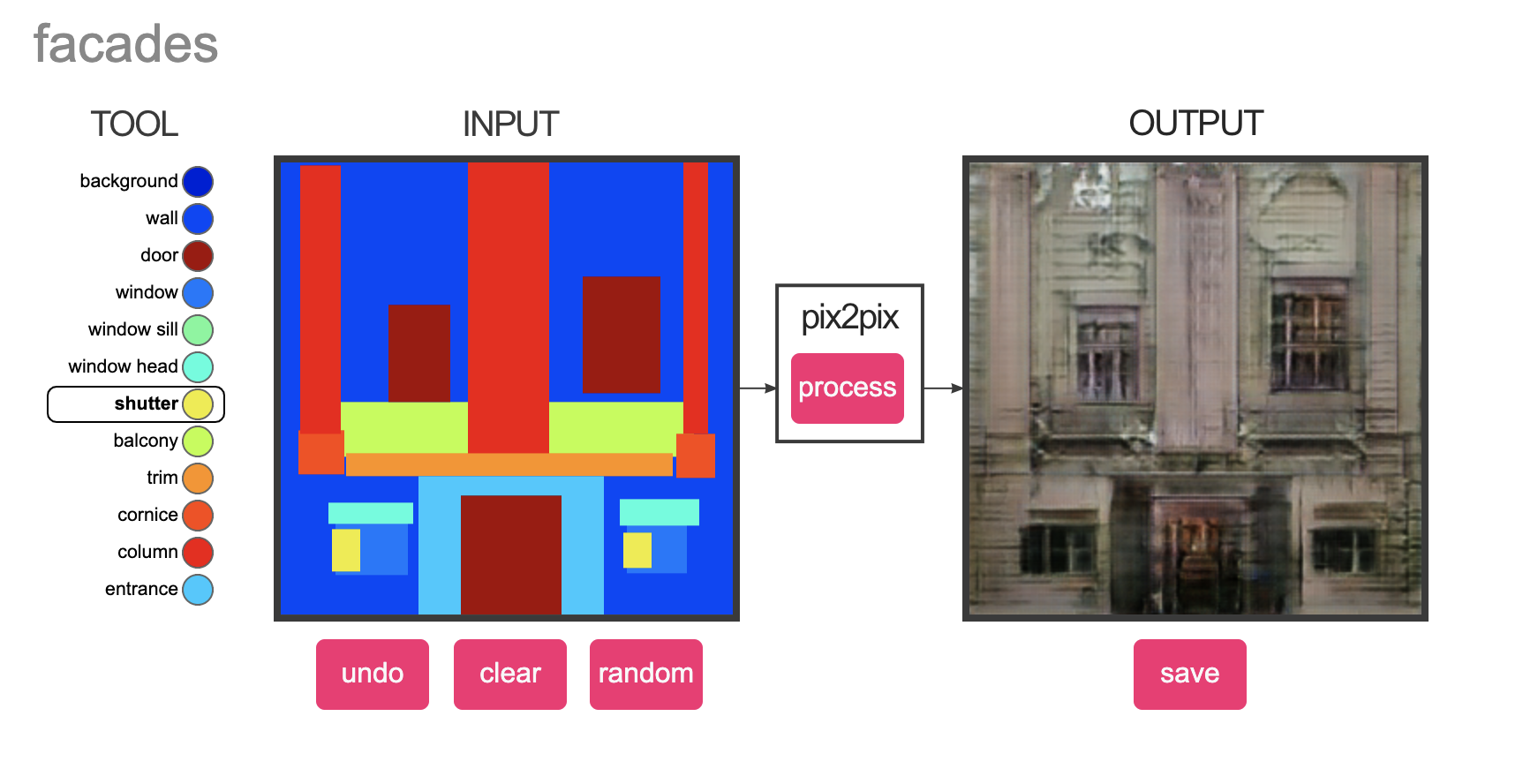

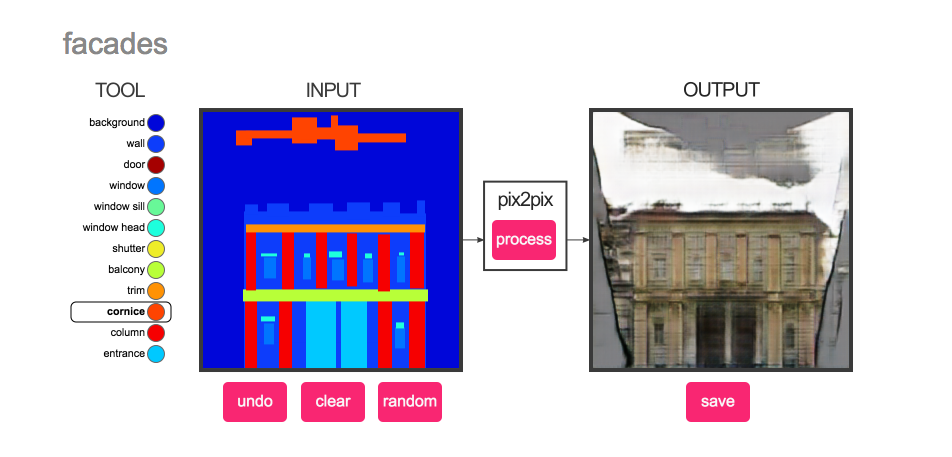

facades:

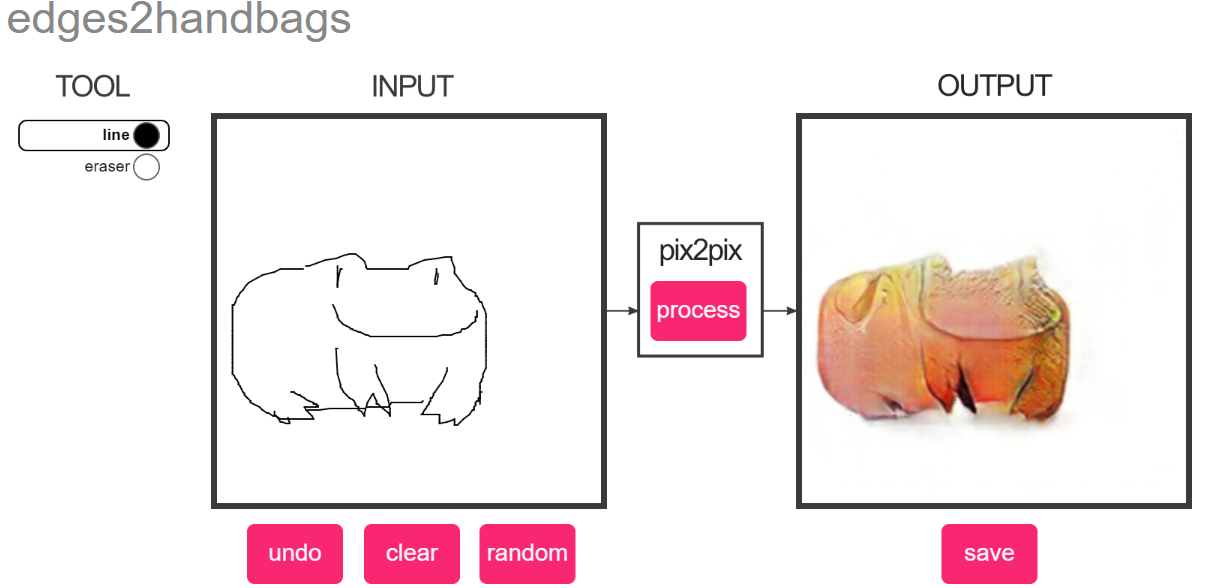

OMG this is so fun! I had never used pix2pix before, so I really enjoyed playing around with these. I wish I had known about this when I was little; I definitely would have spent so much time playing with it.

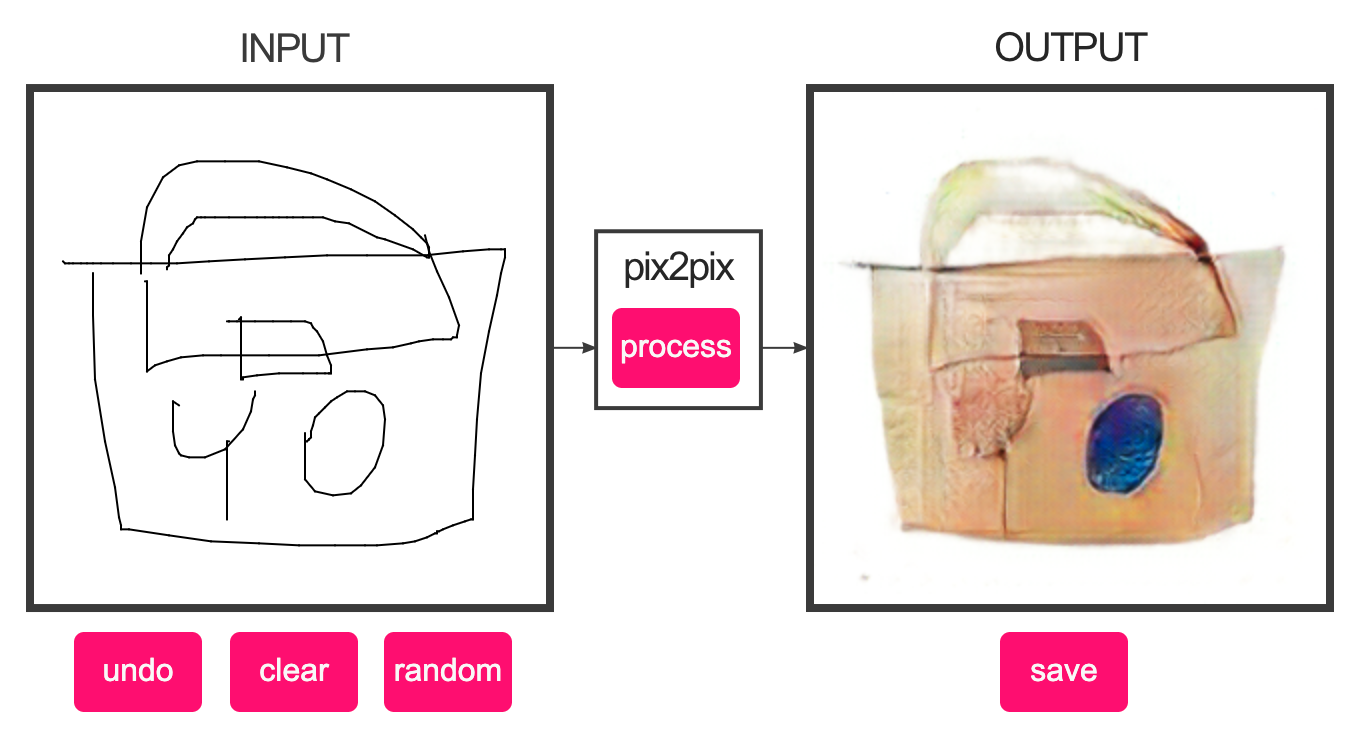

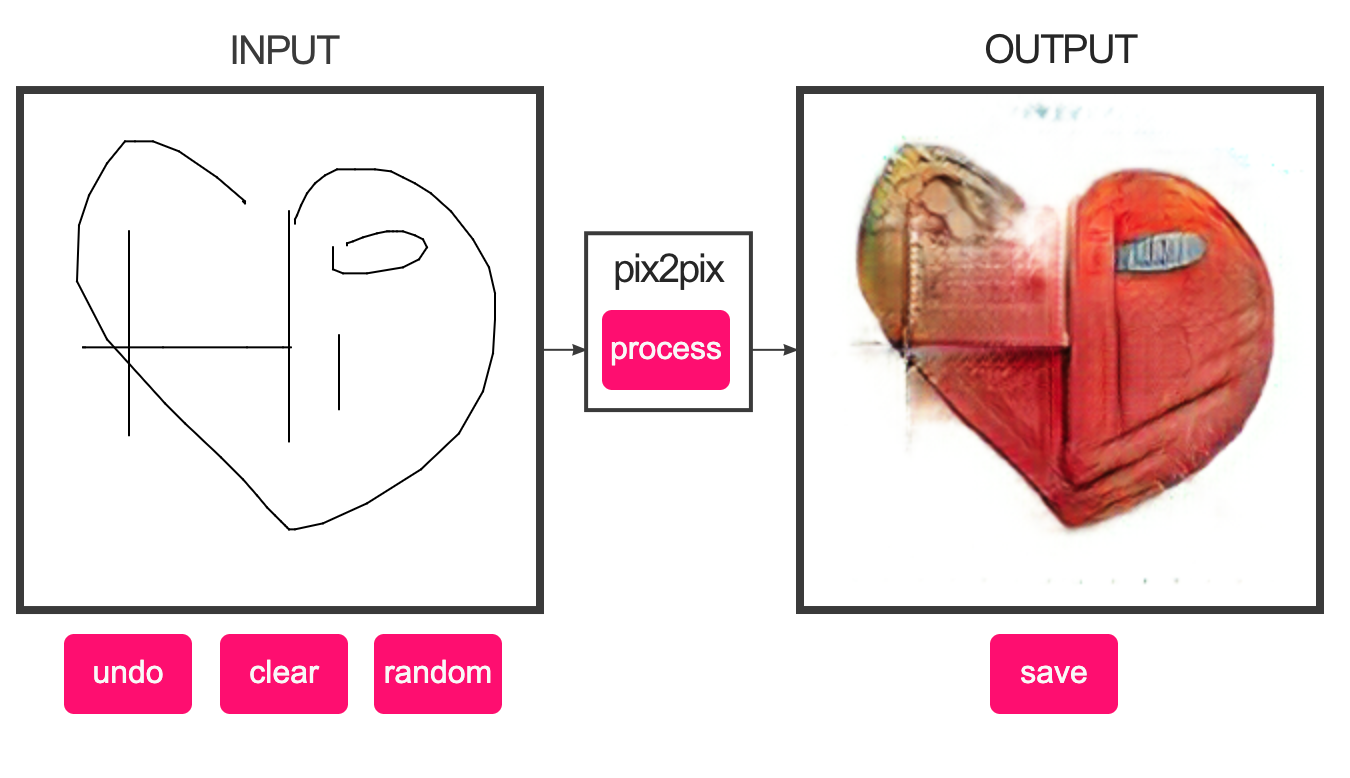

I loved how everything I generated looked like an old, rusted, lost item that had been discovered by my very random drawings.

This was really fun to play around with, especially trying to create an output different from what the program expected to process.

It was very fun to see how the models would respond to inputs I expected to be tricky for them.